Mobina Shahbandeh

NaviQAte: Functionality-Guided Web Application Navigation

Sep 16, 2024

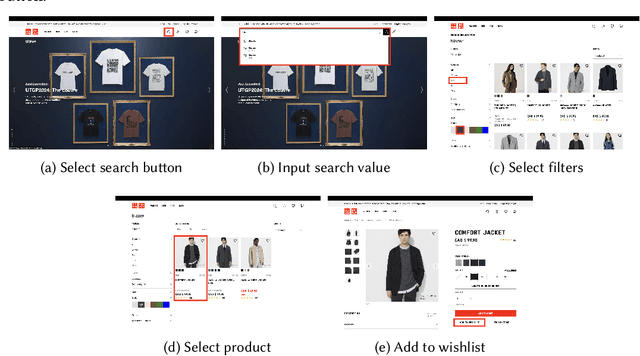

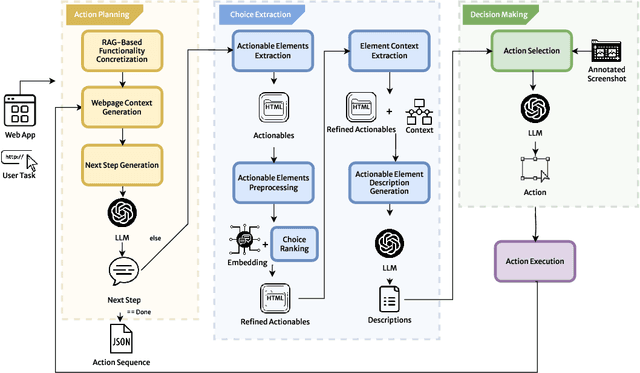

Abstract: End-to-end web testing is challenging because it requires exploring the diverse functionalities of a web application. Current state-of-the-art methods, such as WebCanvas, are not designed for broad functionality exploration. They rely on specific, detailed task descriptions, which limits their adaptability in dynamic web environments. We introduce NaviQAte, which frames web application exploration as a question-and-answer task and generates action sequences for functionalities without requiring detailed parameters. Our three-phase approach uses advanced large language models such as GPT-4o for complex decision-making and cost-effective models such as GPT-4o mini for simpler tasks. NaviQAte focuses on functionality-guided web application navigation and integrates multi-modal inputs, such as text and images, to enhance contextual understanding. Evaluations on the Mind2Web-Live and Mind2Web-Live-Abstracted datasets show that NaviQAte achieves a 44.23% success rate in user task navigation and a 38.46% success rate in functionality navigation. These are improvements of 15% and 33%, respectively, over WebCanvas. These results underscore the effectiveness of our approach in advancing automated web application testing.
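The abstract does not name NaviQAte's three phases. This sketch therefore illustrates only two general ideas it mentions: framing a functionality as a question, and routing work between a stronger model and a cheaper one. The `SubTask` type, the `needs_complex_reasoning` flag, the prompt wording, and the routing rule are hypothetical assumptions. They are not the authors' implementation, and no model API is called.

```python
from dataclasses import dataclass

# Model names come from the abstract; how work is assigned to them is an assumption.
STRONG_MODEL = "gpt-4o"
CHEAP_MODEL = "gpt-4o-mini"


@dataclass
class SubTask:
    """Hypothetical unit of work within one navigation step."""
    name: str
    needs_complex_reasoning: bool


def choose_model(task: SubTask) -> str:
    """Route complex decisions to the stronger model and simpler work to the cheaper one."""
    return STRONG_MODEL if task.needs_complex_reasoning else CHEAP_MODEL


def frame_as_question(functionality: str) -> str:
    """Frame a functionality as a question (hypothetical prompt wording)."""
    return f"How can a user {functionality} on this web page? Answer with the next action."


if __name__ == "__main__":
    tasks = [SubTask("pick next action", True), SubTask("summarize page text", False)]
    for t in tasks:
        print(t.name, "->", choose_model(t))
    print(frame_as_question("sign up for a newsletter"))
```

Keeping the routing rule to a single boolean makes the cost trade-off explicit. A real system would need its own criterion for deciding which sub-tasks count as complex.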

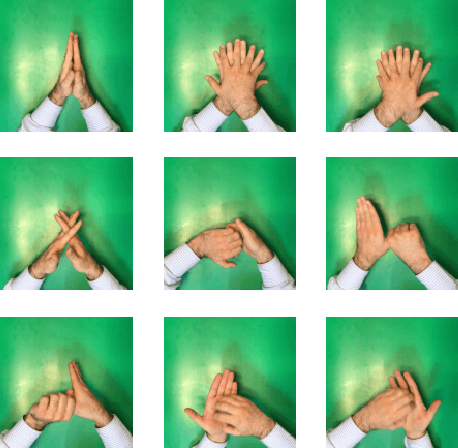

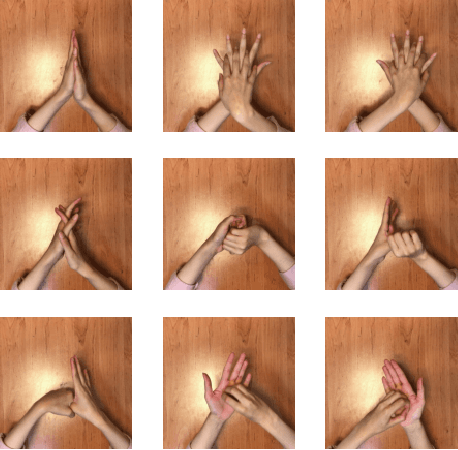

A Deep Learning Based Automated Hand Hygiene Training System

Dec 10, 2021

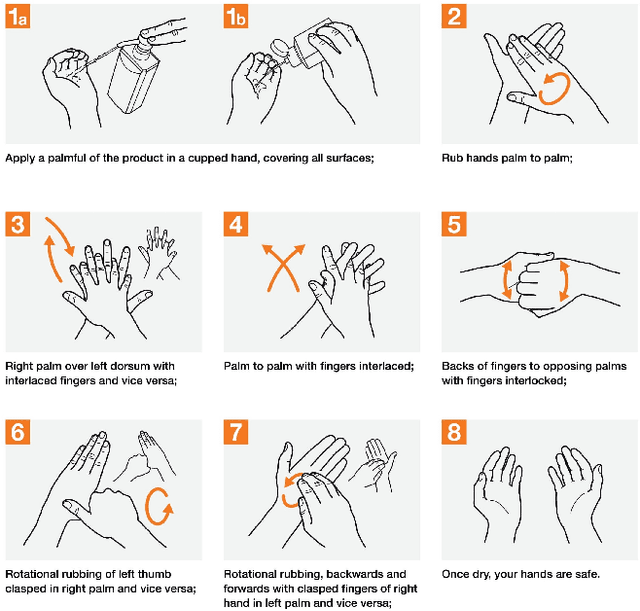

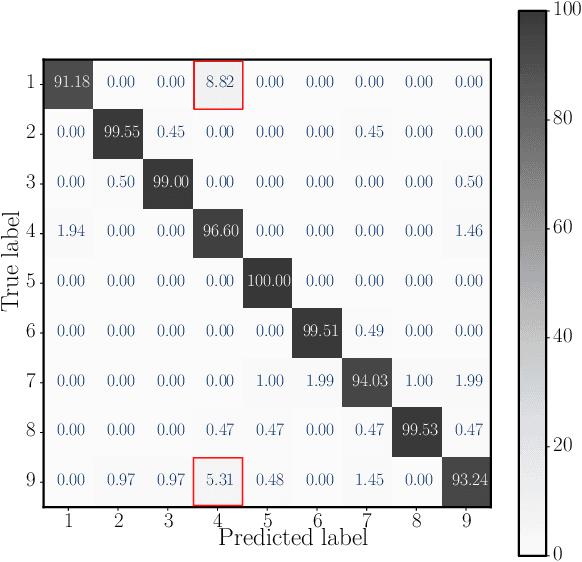

Abstract: Hand hygiene is crucial for preventing the spread of viruses and infections. Due to the pervasive outbreak of COVID-19, wearing a mask and practicing hand hygiene appear to be the most effective ways for the public to curb the spread of these viruses. The World Health Organization (WHO) recommends an eight-step guideline for alcohol-based hand rub to ensure that all surfaces of the hands are thoroughly cleaned. Because these steps involve complex gestures, human assessment of them is not sufficiently accurate. However, deep neural networks (DNNs) and machine vision have made it possible to accurately evaluate hand-rubbing quality for training and feedback. In this paper, we present an automated, deep-learning-based hand-rub assessment system with real-time feedback. The system evaluates compliance with the eight-step guideline using a DNN trained on a dataset of videos. These videos were collected from volunteers with various skin tones and hand characteristics as they followed the hand-rubbing guideline. Various DNN architectures were tested, and an Inception-ResNet model achieved the best results, with 97% test accuracy. The proposed system's software runs on an NVIDIA Jetson AGX Xavier embedded board. The efficacy of the system is evaluated in a practical setting with various users, and challenging steps are identified. In this experiment, volunteers took an average of 27.2 seconds to complete the hand-rubbing steps, which conforms to the WHO guidelines.
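The abstract does not describe how per-frame predictions are turned into a compliance decision. The following is a minimal sketch under stated assumptions. It assumes a classifier has already produced one step label (1 to 8) per video frame, converts frame counts to seconds per step, and applies a compliance rule. The function names, the `fps` parameter, and the `min_seconds` threshold are hypothetical and do not come from the paper. The 27.2-second average reported above is the authors' measured result and is not reproduced by this code.

```python
from collections import Counter

NUM_STEPS = 8  # the WHO alcohol-based hand rub guideline has eight steps


def step_durations(frame_labels, fps):
    """Seconds spent in each step, given one predicted step label (1..8) per frame.

    Labels outside 1..8 (e.g. a hypothetical "no step" class) are ignored.
    """
    counts = Counter(frame_labels)
    return {step: counts.get(step, 0) / fps for step in range(1, NUM_STEPS + 1)}


def is_compliant(frame_labels, fps, min_seconds=2.0):
    """Hypothetical rule: every step must be observed for at least min_seconds."""
    durations = step_durations(frame_labels, fps)
    return all(d >= min_seconds for d in durations.values())


if __name__ == "__main__":
    # Simulated predictions: each step held for 90 frames at 30 fps (3 s per step).
    labels = [s for s in range(1, NUM_STEPS + 1) for _ in range(90)]
    print(step_durations(labels, fps=30))
    print("total seconds:", sum(step_durations(labels, fps=30).values()))
    print("compliant:", is_compliant(labels, fps=30))
```

Per-step durations also make it easy to flag which steps users cut short. That would be one way to surface challenging steps, though the paper's own method for identifying them is not stated in the abstract.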