Mitchell Neilsen

Seed Kernel Counting using Domain Randomization and Object Tracking Neural Networks

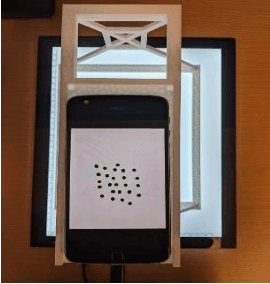

Aug 10, 2023Abstract:High-throughput phenotyping (HTP) of seeds, also known as seed phenotyping, is the comprehensive assessment of complex seed traits such as growth, development, tolerance, resistance, ecology, yield, and the measurement of parameters that form more complex traits. One of the key aspects of seed phenotyping is cereal yield estimation that the seed production industry relies upon to conduct their business. While mechanized seed kernel counters are available in the market currently, they are often priced high and sometimes outside the range of small scale seed production firms' affordability. The development of object tracking neural network models such as You Only Look Once (YOLO) enables computer scientists to design algorithms that can estimate cereal yield inexpensively. The key bottleneck with neural network models is that they require a plethora of labelled training data before they can be put to task. We demonstrate that the use of synthetic imagery serves as a feasible substitute to train neural networks for object tracking that includes the tasks of object classification and detection. Furthermore, we propose a seed kernel counter that uses a low-cost mechanical hopper, trained YOLOv8 neural network model, and object tracking algorithms on StrongSORT and ByteTrack to estimate cereal yield from videos. The experiment yields a seed kernel count with an accuracy of 95.2\% and 93.2\% for Soy and Wheat respectively using the StrongSORT algorithm, and an accuray of 96.8\% and 92.4\% for Soy and Wheat respectively using the ByteTrack algorithm.

Fractional Vegetation Cover Estimation using Hough Lines and Linear Iterative Clustering

Apr 30, 2022

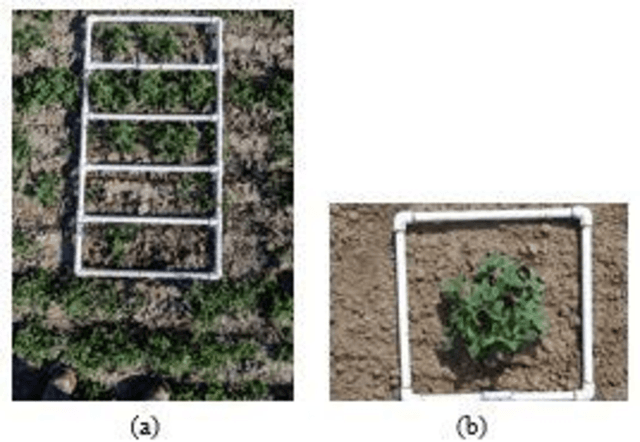

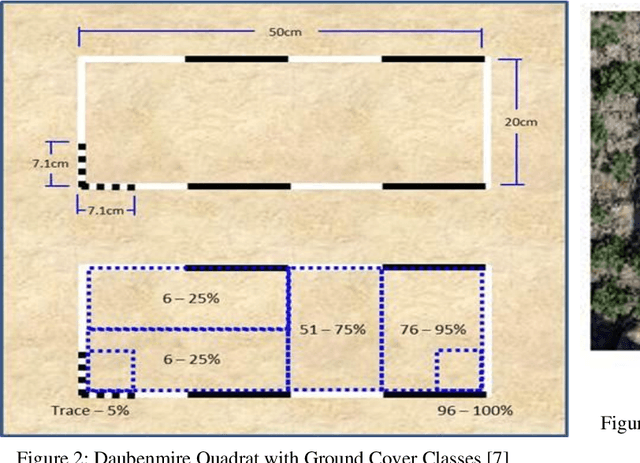

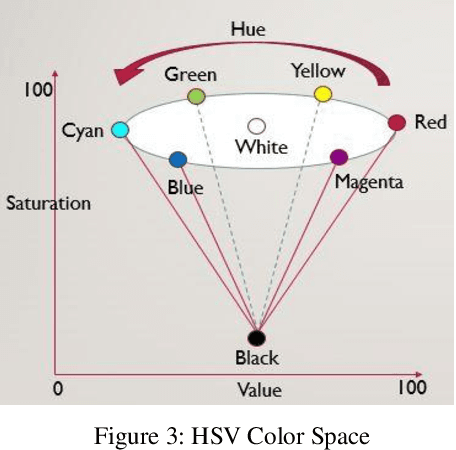

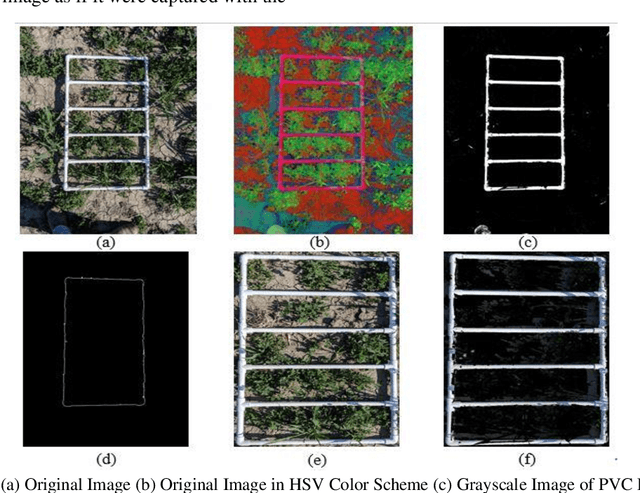

Abstract:A common requirement of plant breeding programs across the country is companion planting -- growing different species of plants in close proximity so they can mutually benefit each other. However, the determination of companion plants requires meticulous monitoring of plant growth. The technique of ocular monitoring is often laborious and error prone. The availability of image processing techniques can be used to address the challenge of plant growth monitoring and provide robust solutions that assist plant scientists to identify companion plants. This paper presents a new image processing algorithm to determine the amount of vegetation cover present in a given area, called fractional vegetation cover. The proposed technique draws inspiration from the trusted Daubenmire method for vegetation cover estimation and expands upon it. Briefly, the idea is to estimate vegetation cover from images containing multiple rows of plant species growing in close proximity separated by a multi-segment PVC frame of known size. The proposed algorithm applies a Hough Transform and Simple Linear Iterative Clustering (SLIC) to estimate the amount of vegetation cover within each segment of the PVC frame. The analysis when repeated over images captured at regular intervals of time provides crucial insights into plant growth. As a means of comparison, the proposed algorithm is compared with SamplePoint and Canopeo, two trusted applications used for vegetation cover estimation. The comparison shows a 99% similarity with both SamplePoint and Canopeo demonstrating the accuracy and feasibility of the algorithm for fractional vegetation cover estimation.

Seed Classification using Synthetic Image Datasets Generated from Low-Altitude UAV Imagery

Oct 06, 2021

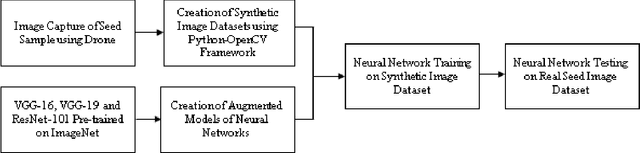

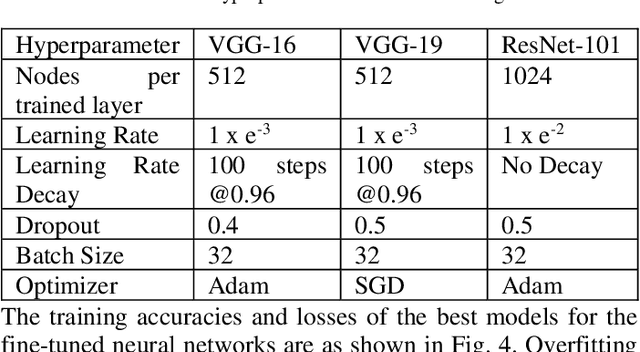

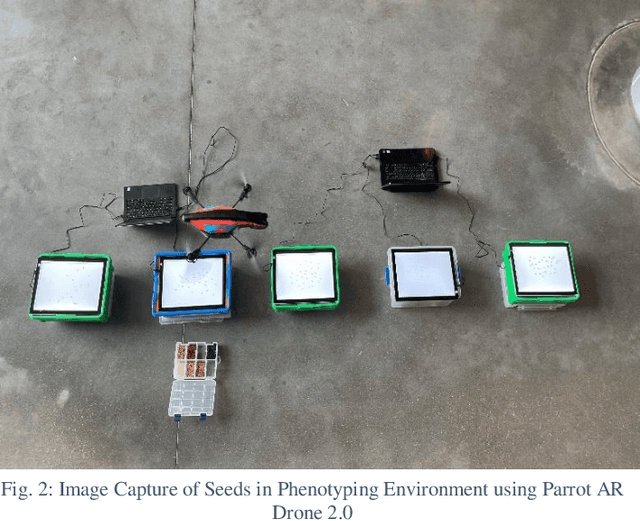

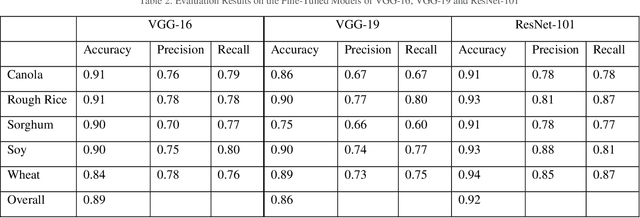

Abstract:Plant breeding programs extensively monitor the evolution of seed kernels for seed certification, wherein lies the need to appropriately label the seed kernels by type and quality. However, the breeding environments are large where the monitoring of seed kernels can be challenging due to the minuscule size of seed kernels. The use of unmanned aerial vehicles aids in seed monitoring and labeling since they can capture images at low altitudes whilst being able to access even the remotest areas in the environment. A key bottleneck in the labeling of seeds using UAV imagery is drone altitude i.e. the classification accuracy decreases as the altitude increases due to lower image detail. Convolutional neural networks are a great tool for multi-class image classification when there is a training dataset that closely represents the different scenarios that the network might encounter during evaluation. The article addresses the challenge of training data creation using Domain Randomization wherein synthetic image datasets are generated from a meager sample of seeds captured by the bottom camera of an autonomously driven Parrot AR Drone 2.0. Besides, the article proposes a seed classification framework as a proof-of-concept using the convolutional neural networks of Microsoft's ResNet-100, Oxford's VGG-16, and VGG-19. To enhance the classification accuracy of the framework, an ensemble model is developed resulting in an overall accuracy of 94.6%.

PiBase: An IoT-based Security System using Raspberry Pi and Google Firebase

Jul 29, 2021

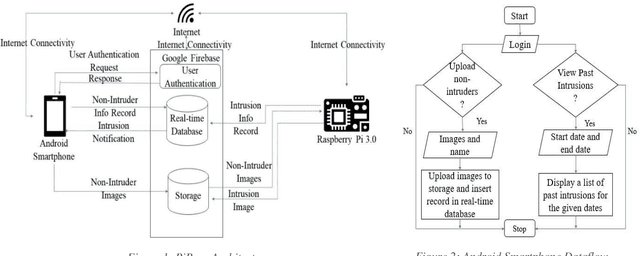

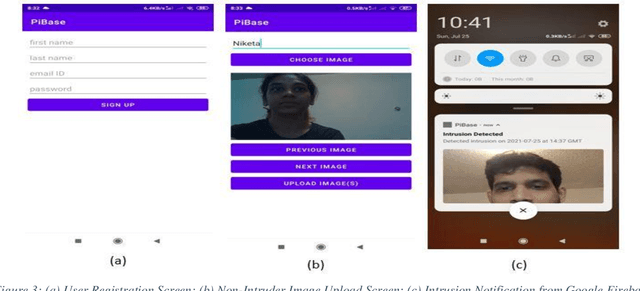

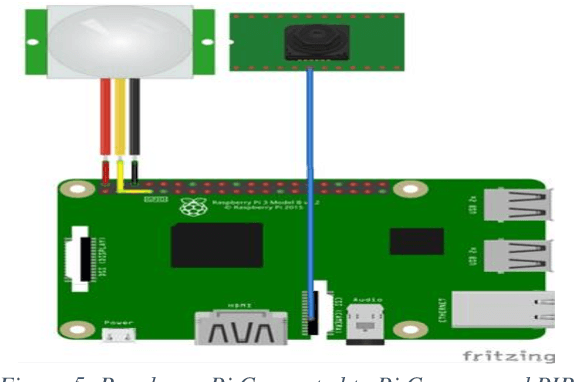

Abstract:Smart environments are environments where digital devices are connected to each other over the Internet and operate in sync. Security is of paramount importance in such environments. This paper addresses aspects of authorized access and intruder detection for smart environments. Proposed is PiBase, an Internet of Things (IoT)-based app that aids in detecting intruders and providing security. The hardware for the application consists of a Raspberry Pi, a PIR motion sensor to detect motion from infrared radiation in the environment, an Android mobile phone and a camera. The software for the application is written in Java, Python and NodeJS. The PIR sensor and Pi camera module connected to the Raspberry Pi aid in detecting human intrusion. Machine learning algorithms, namely Haar-feature based cascade classifiers and Linear Binary Pattern Histograms (LBPH), are used for face detection and face recognition, respectively. The app lets the user create a list of non-intruders and anyone that is not on the list is identified as an intruder. The app alerts the user only in the event of an intrusion by using the Google Firebase Cloud Messaging service to trigger a notification to the app. The user may choose to add the detected intruder to the list of non-intruders through the app to avoid further detections as intruder. Face detection by the Haar Cascade algorithm yields a recall of 94.6%. Thus, the system is both highly effective and relatively low cost.

Classification of Seeds using Domain Randomization on Self-Supervised Learning Frameworks

Mar 29, 2021

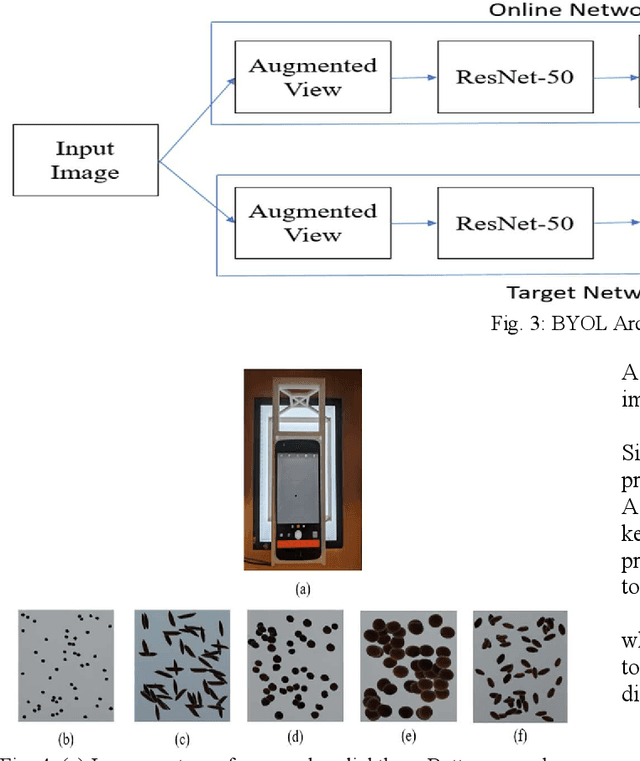

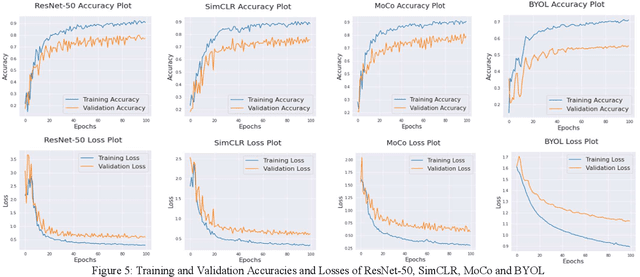

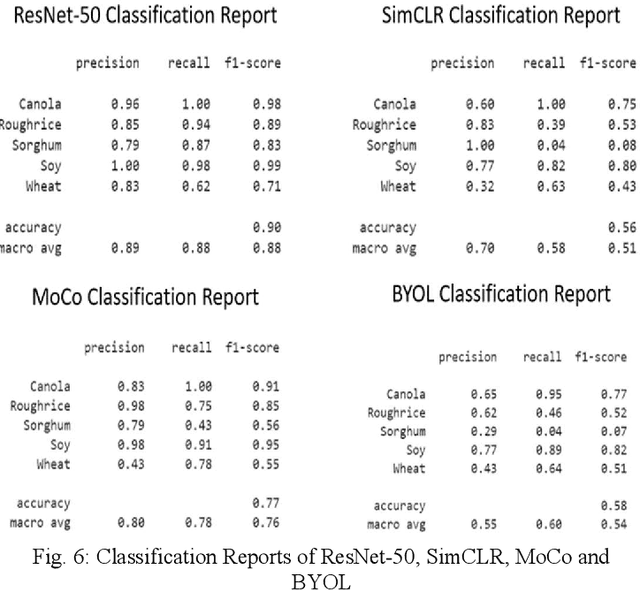

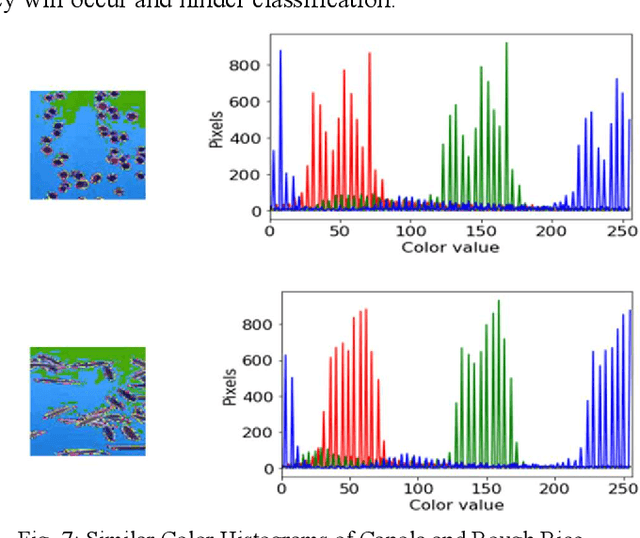

Abstract:The first step toward Seed Phenotyping i.e. the comprehensive assessment of complex seed traits such as growth, development, tolerance, resistance, ecology, yield, and the measurement of pa-rameters that form more complex traits is the identification of seed type. Generally, a plant re-searcher inspects the visual attributes of a seed such as size, shape, area, color and texture to identify the seed type, a process that is tedious and labor-intensive. Advances in the areas of computer vision and deep learning have led to the development of convolutional neural networks (CNN) that aid in classification using images. While they classify efficiently, a key bottleneck is the need for an extensive amount of labelled data to train the CNN before it can be put to the task of classification. The work leverages the concepts of Contrastive Learning and Domain Randomi-zation in order to achieve the same. Briefly, domain randomization is the technique of applying models trained on images containing simulated objects to real-world objects. The use of synthetic images generated from a representational sample crop of real-world images alleviates the need for a large volume of test subjects. As part of the work, synthetic image datasets of five different types of seed images namely, canola, rough rice, sorghum, soy and wheat are applied to three different self-supervised learning frameworks namely, SimCLR, Momentum Contrast (MoCo) and Build Your Own Latent (BYOL) where ResNet-50 is used as the backbone in each of the networks. When the self-supervised models are fine-tuned with only 5% of the labels from the synthetic dataset, results show that MoCo, the model that yields the best performance of the self-supervised learning frameworks in question, achieves an accuracy of 77% on the test dataset which is only ~13% less than the accuracy of 90% achieved by ResNet-50 trained on 100% of the labels.

Seed Phenotyping on Neural Networks using Domain Randomization and Transfer Learning

Dec 24, 2020

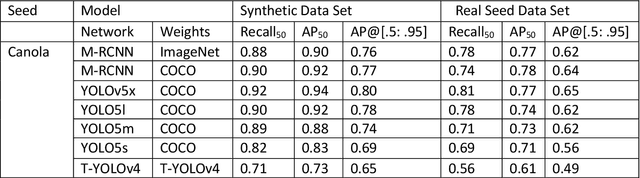

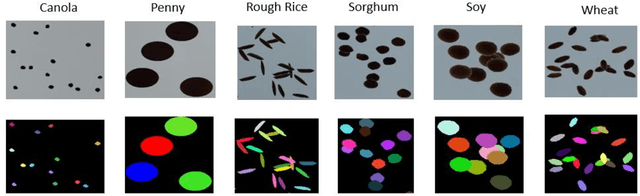

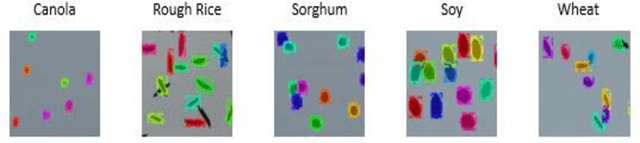

Abstract:Seed phenotyping is the idea of analyzing the morphometric characteristics of a seed to predict the behavior of the seed in terms of development, tolerance and yield in various environmental conditions. The focus of the work is the application and feasibility analysis of the state-of-the-art object detection and localization neural networks, Mask R-CNN and YOLO (You Only Look Once), for seed phenotyping using Tensorflow. One of the major bottlenecks of such an endeavor is the need for large amounts of training data. While the capture of a multitude of seed images is taunting, the images are also required to be annotated to indicate the boundaries of the seeds on the image and converted to data formats that the neural networks are able to consume. Although tools to manually perform the task of annotation are available for free, the amount of time required is enormous. In order to tackle such a scenario, the idea of domain randomization i.e. the technique of applying models trained on images containing simulated objects to real-world objects, is considered. In addition, transfer learning i.e. the idea of applying the knowledge obtained while solving a problem to a different problem, is used. The networks are trained on pre-trained weights from the popular ImageNet and COCO data sets. As part of the work, experiments with different parameters are conducted on five different seed types namely, canola, rough rice, sorghum, soy, and wheat.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge