Mingze Xi

Typing on Any Surface: A Deep Learning-based Method for Real-Time Keystroke Detection in Augmented Reality

Aug 31, 2023

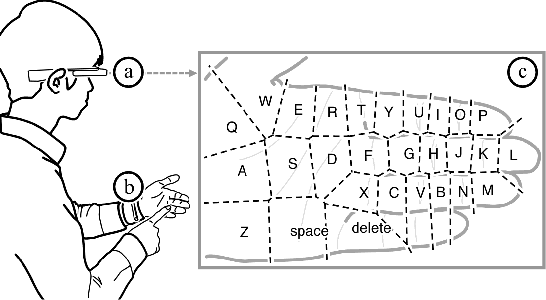

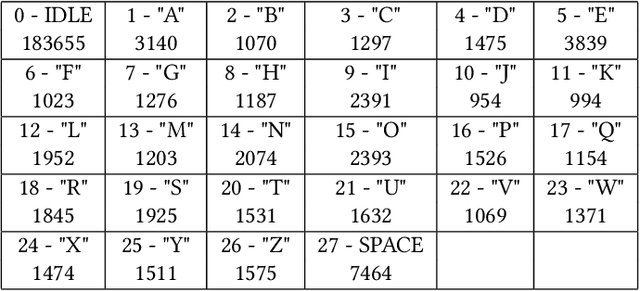

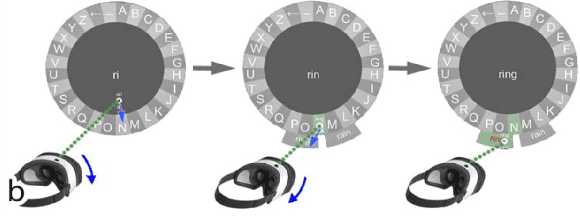

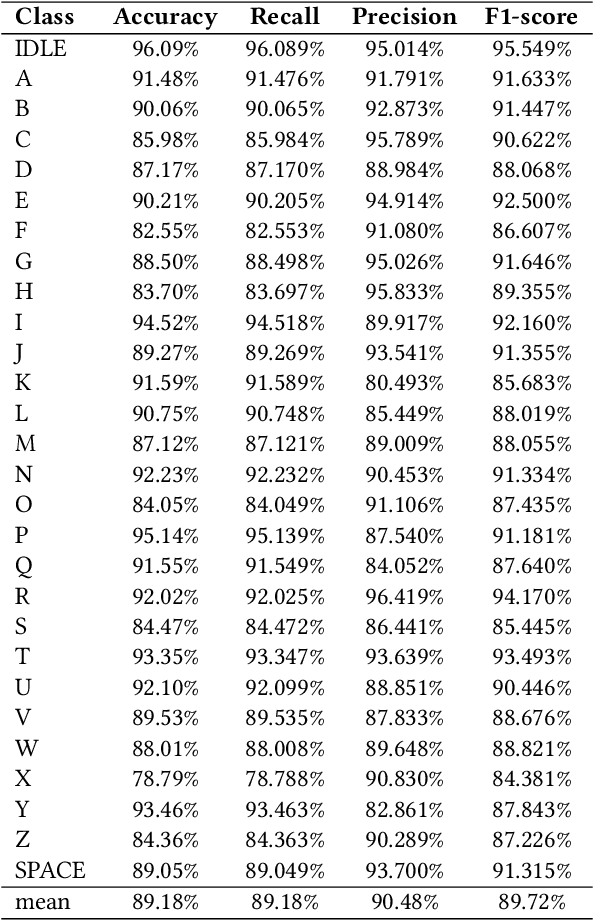

Abstract: Frustrating text entry interfaces have been a major obstacle to participating in social activities in augmented reality (AR). Popular options, such as mid-air keyboard interfaces, wireless keyboards or voice input, suffer from poor ergonomic design or limited accuracy, or are simply embarrassing to use in public. This paper proposes and validates a deep-learning-based approach that enables AR applications to accurately predict keystrokes from the user-perspective RGB video stream that can be captured by any AR headset. This enables a user to type on any flat surface and eliminates the need for a physical or virtual keyboard. A two-stage model, combining an off-the-shelf hand landmark extractor and a novel adaptive Convolutional Recurrent Neural Network (C-RNN), was trained using our newly built dataset. The final model was capable of adaptively processing user-perspective video streams at ~32 FPS. This base model achieved an overall accuracy of $91.05\%$ when typing at 40 words per minute (wpm), which is how fast an average person types with two hands on a physical keyboard. The Normalised Levenshtein Distance further confirmed the real-world applicability of our approach. The promising results highlight the viability of our approach and the potential for our method to be integrated into various applications. We also discuss the limitations and future research required to bring such a technique into a production system.
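For readers unfamiliar with the Normalised Levenshtein Distance mentioned in the abstract, the following is a minimal sketch of one common definition: the character-level edit distance between the predicted and intended text, divided by the length of the longer string. The abstract does not state which normalisation the paper uses, so the division by the maximum length, and the function names `levenshtein` and `normalised_levenshtein`, are assumptions made for illustration only.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and
    substitutions needed to turn string a into string b."""
    # Keep the shorter string in the inner loop to reduce memory use.
    if len(a) < len(b):
        a, b = b, a
    # prev[j] holds the distance between the processed prefix of a and b[:j].
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(
                prev[j] + 1,         # delete ca
                curr[j - 1] + 1,     # insert cb
                prev[j - 1] + cost,  # substitute ca with cb (or match)
            ))
        prev = curr
    return prev[-1]


def normalised_levenshtein(predicted: str, target: str) -> float:
    """Edit distance scaled to [0, 1]; 0.0 means the strings are identical.

    Assumption: normalise by the longer string's length. Other
    normalisations exist and the paper may use a different one.
    """
    longest = max(len(predicted), len(target))
    if longest == 0:
        return 0.0  # two empty strings are identical
    return levenshtein(predicted, target) / longest
```

Under this definition, a predicted string that drops one character from a five-character target scores 1/5, so lower values indicate typed output closer to the intended text.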

Smart Headset, Computer Vision and Machine Learning for Efficient Prawn Farm Management

Oct 14, 2022

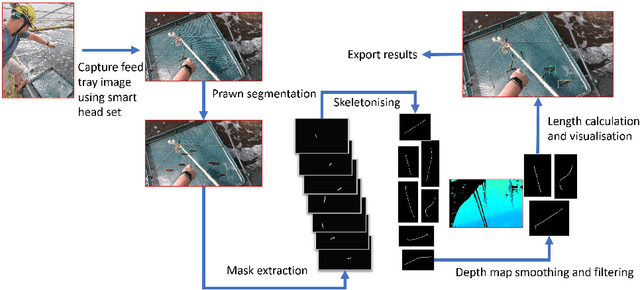

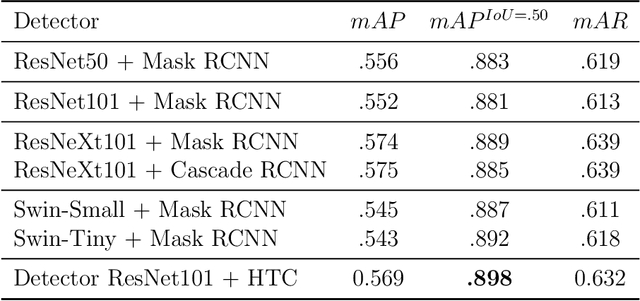

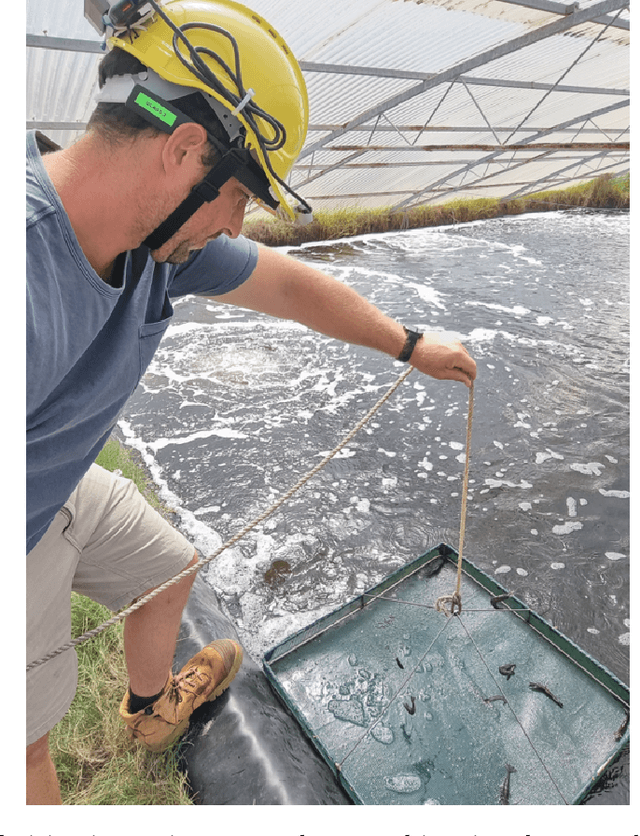

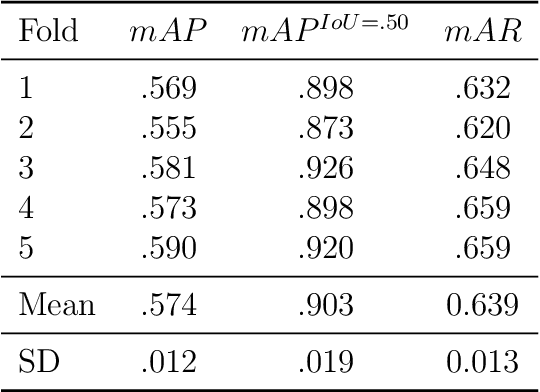

Abstract: Understanding the growth and distribution of prawns is critical for optimising feed and harvest strategies. An inadequate understanding of prawn growth can lead to reduced financial gain, for example when crops are harvested too early. The key to maintaining a good understanding of prawn growth is frequent sampling. However, the most commonly adopted sampling practice, the cast net approach, cannot sample prawns at a high frequency because it is expensive and laborious. An alternative approach is to sample prawns from the feed trays that farm workers inspect each day. This allows growth data to be collected at a high frequency (each day), but measuring prawns manually each day is a laborious task. In this article, we propose a new approach that utilises smart glasses, a depth camera, computer vision and machine learning to detect prawn distribution and growth from feed trays. A smart headset was built to allow farmers to collect prawn data while performing daily feed tray checks. A computer vision and machine learning pipeline was developed and demonstrated to detect the growth trends of prawns in 4 prawn ponds over a growing season.
