Mikaella Keller

To Word Senses and Beyond: Inducing Concepts with Contextualized Language Models

Jun 28, 2024

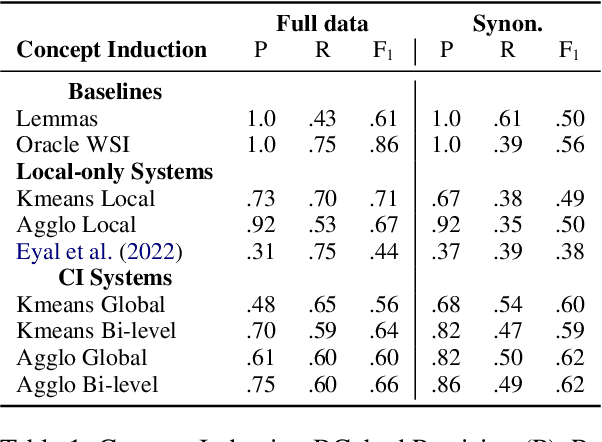

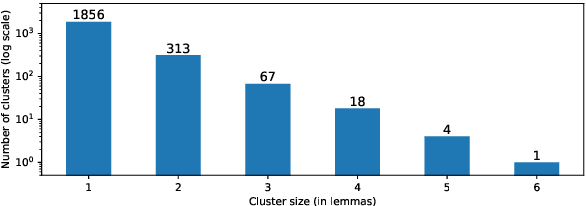

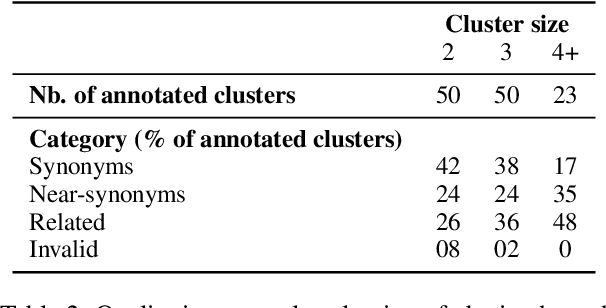

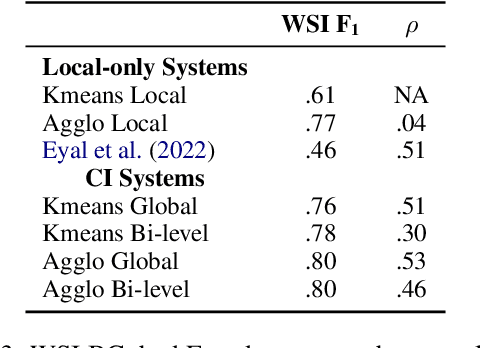

Abstract: Polysemy and synonymy are two crucial, interrelated facets of lexical ambiguity. Although both phenomena have been studied extensively in NLP, leading to dedicated systems, they have often been considered independently. While many tasks dealing with polysemy (e.g. Word Sense Disambiguation or Induction) highlight the role of a word's senses, the study of synonymy is rooted in the study of concepts, i.e. meanings shared across the lexicon. In this paper, we introduce Concept Induction, the unsupervised task of learning a soft clustering over words that defines a set of concepts directly from data. This task generalizes Word Sense Induction. We propose a bi-level approach to Concept Induction that leverages both a local, lemma-centric view and a global, cross-lexicon perspective to induce concepts. We evaluate the resulting clustering on SemCor's annotated data and obtain good performance (BCubed F1 above 0.60). We find that the local and global levels are mutually beneficial for inducing both concepts and senses in our setting. Finally, we create static embeddings representing our induced concepts and use them on the Word-in-Context task, obtaining performance competitive with the state of the art.
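For readers unfamiliar with the BCubed F1 score mentioned in the abstract, the following is a minimal sketch of the standard hard-clustering formulation of BCubed precision, recall, and F1. It is an illustration under stated assumptions, not the authors' evaluation code: the function name `bcubed_f1`, the dict-based input format, and the toy labels are all hypothetical, and the paper's soft-clustering setting may call for an extended variant of the metric.

```python
from collections import Counter


def bcubed_f1(pred, gold):
    """Hard-clustering BCubed scores (illustrative sketch).

    pred: dict mapping each item to its induced cluster label.
    gold: dict mapping each item to its gold class label.
    Both dicts are assumed to be non-empty and to share the same keys.
    Returns (precision, recall, f1).
    """
    items = list(gold)
    cluster_sizes = Counter(pred[i] for i in items)
    class_sizes = Counter(gold[i] for i in items)
    # Number of items sharing both the induced cluster and the gold class.
    overlap = Counter((pred[i], gold[i]) for i in items)

    # Per-item precision: fraction of the item's cluster with its gold class.
    precision = sum(
        overlap[(pred[i], gold[i])] / cluster_sizes[pred[i]] for i in items
    ) / len(items)
    # Per-item recall: fraction of the item's gold class found in its cluster.
    recall = sum(
        overlap[(pred[i], gold[i])] / class_sizes[gold[i]] for i in items
    ) / len(items)

    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Toy data (hypothetical): five word occurrences, two gold concepts.
gold = {"a": "X", "b": "X", "c": "X", "d": "Y", "e": "Y"}
pred = {"a": 1, "b": 1, "c": 2, "d": 2, "e": 2}
precision, recall, f1 = bcubed_f1(pred, gold)
```

Because every item counts itself in its own cluster and class, precision and recall are always positive, so the harmonic mean is well defined for any non-empty input.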
