Michael Hegedus

Generalized Grasping for Mechanical Grippers for Unknown Objects with Partial Point Cloud Representations

Jun 23, 2020

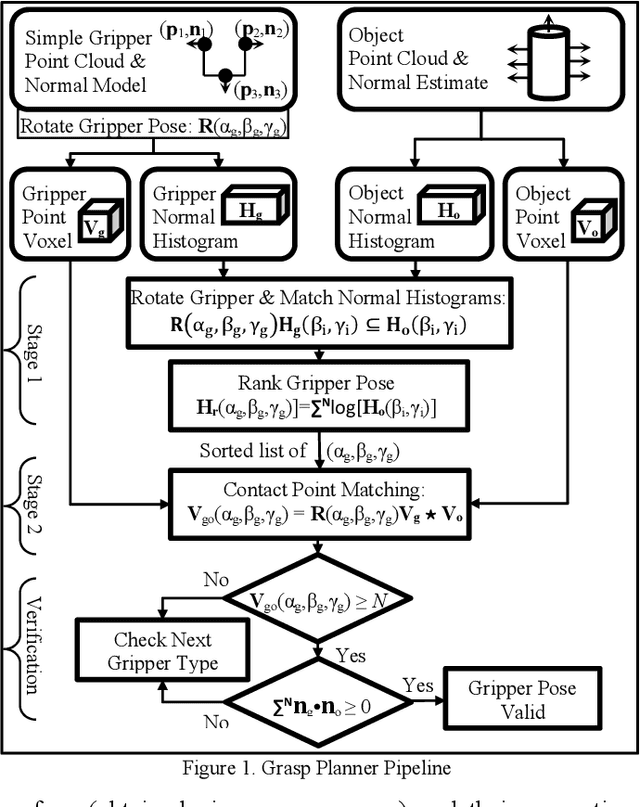

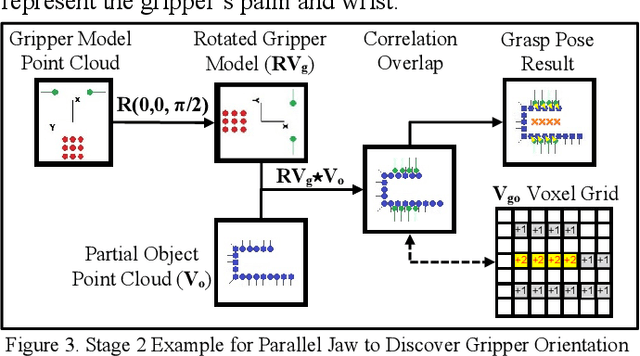

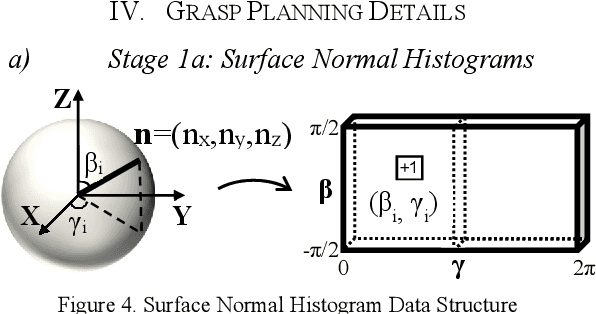

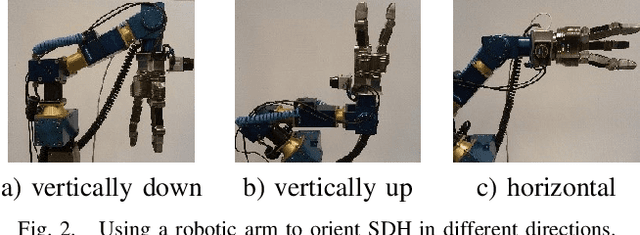

Abstract: We present a generalized grasping algorithm that uses point clouds (i.e., sets of points and their respective surface normals) to find grasp pose solutions for multiple grasp types, executed by a mechanical gripper, in near real-time. The algorithm introduces two ideas: 1) a histogram of finger contact normals represents a grasp 'shape' and guides a search over gripper orientations within a histogram of the surface normals of the object(s), and 2) voxel grid representations of the gripper and object(s) are cross-correlated to match finger contact points, i.e., grasp 'size', and thereby find a grasp pose. Constraints, such as collisions with neighbouring objects, can optionally be incorporated into the cross-correlation computation. We show via simulations and experiments that 1) grasp poses for three grasp types can be found in near real-time, 2) grasp pose solutions are consistent under changes in voxel resolution for both partial and complete point cloud scans, and 3) a planned grasp is executed with a mechanical gripper.
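As a rough illustration of idea 2), the sketch below slides a gripper kernel over an object occupancy grid and scores each translation by how many finger-contact voxels land on occupied object voxels, skipping translations where the gripper body would collide with the object. This is a simplified, hypothetical sketch, not the authors' implementation: the names `cross_correlate` and `best_offset`, the binary grids, and the brute-force loop (a practical version would more likely use FFT-based correlation) are all assumptions. It searches translations only and leaves out the orientation search guided by the normal histograms.

```python
from itertools import product


def dims(grid):
    """Return the (x, y, z) size of a nested-list voxel grid."""
    return len(grid), len(grid[0]), len(grid[0][0])


def cross_correlate(obj, contacts, body=None):
    """Score every translation of the gripper kernels inside the object grid.

    obj      -- object occupancy grid, indexed obj[x][y][z] (1 = occupied)
    contacts -- kernel marking voxels where the fingertips should touch the object
    body     -- optional kernel (same size as contacts, an assumption) marking
                gripper voxels that must stay empty, i.e. a collision constraint
    Returns {(dx, dy, dz): score} for collision-free translations only.
    """
    X, Y, Z = dims(obj)
    kx, ky, kz = dims(contacts)
    kernel_cells = list(product(range(kx), range(ky), range(kz)))
    scores = {}
    for dx, dy, dz in product(range(X - kx + 1), range(Y - ky + 1), range(Z - kz + 1)):
        # Hypothetical constraint: reject offsets where any body voxel overlaps the object.
        if body is not None and any(
            body[i][j][k] and obj[dx + i][dy + j][dz + k] for i, j, k in kernel_cells
        ):
            continue
        # Correlation score: number of contact voxels that coincide with occupied voxels.
        scores[(dx, dy, dz)] = sum(
            contacts[i][j][k] * obj[dx + i][dy + j][dz + k] for i, j, k in kernel_cells
        )
    return scores


def best_offset(scores):
    """Translation with the highest contact score, or None if every offset collided."""
    return max(scores, key=scores.get) if scores else None


# Usage: a 1-D slice through a 2-voxel-thick object, gripped by a kernel with
# two contact voxels flanked by two finger-body voxels.
obj = [[[v]] for v in [0, 0, 1, 1, 0, 0, 0]]
contacts = [[[v]] for v in [0, 1, 1, 0]]
body = [[[v]] for v in [1, 0, 0, 1]]
print(best_offset(cross_correlate(obj, contacts, body)))  # (1, 0, 0)
```

Without the `body` kernel, partial overlaps at other offsets also receive nonzero scores; the collision term is what leaves only the pose where both fingers sit just outside the object.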

Identifying Multiple Interaction Events from Tactile Data during Robot-Human Object Transfer

Sep 15, 2019

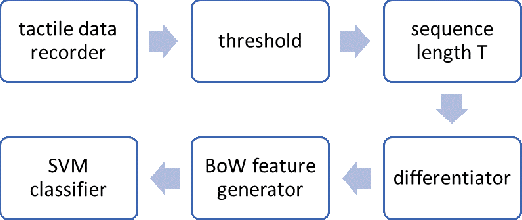

Abstract: During a robot-to-human object handover task, several intended or unintended events may occur: the human receiver may pull, push, bump, or simply hold the object. We show that it is possible to differentiate between these events solely via tactile sensors. Training data were recorded from tactile sensors while human subjects interacted with an object held by a 3-finger robotic hand. A Bag of Words approach was used to automatically extract effective features from the tactile data, and a Support Vector Machine distinguished between the four events with over 95 percent average accuracy.
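A Bag of Words feature for time-series sensor data is commonly built by quantizing each sample to its nearest codeword and counting codeword occurrences. The sketch below shows that general idea under stated assumptions; it is not the authors' pipeline. Each tactile sample is assumed to be a vector of taxel readings, the codebook is learned with plain k-means initialised from the first `k` samples, and the helper names `kmeans` and `bow_histogram` are hypothetical. The resulting fixed-length histograms would then serve as inputs to an SVM classifier (for example scikit-learn's `SVC`), which is omitted here.

```python
import math


def nearest(frame, codebook):
    """Index of the codeword closest (Euclidean distance) to one tactile frame."""
    return min(range(len(codebook)), key=lambda c: math.dist(frame, codebook[c]))


def kmeans(frames, k, iters=20):
    """Learn a k-word codebook with plain k-means.

    Assumption: centroids are initialised with the first k frames.
    """
    centroids = [list(f) for f in frames[:k]]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for f in frames:
            clusters[nearest(f, centroids)].append(f)
        for c, members in enumerate(clusters):
            if members:  # keep the previous centroid if a cluster becomes empty
                centroids[c] = [sum(vals) / len(members) for vals in zip(*members)]
    return centroids


def bow_histogram(sequence, codebook):
    """Normalised codeword histogram: one fixed-length feature per interaction."""
    counts = [0] * len(codebook)
    for frame in sequence:
        counts[nearest(frame, codebook)] += 1
    return [n / len(sequence) for n in counts]


# Usage with toy 2-taxel frames: one "light contact" and one "firm contact" cluster.
training_frames = [[0, 0], [10, 10], [0, 1], [1, 0], [10, 11], [11, 10]]
codebook = kmeans(training_frames, k=2)
print(bow_histogram([[0, 0], [0, 0], [10, 10], [1, 1]], codebook))  # [0.75, 0.25]
```

Because the histogram length equals the codebook size regardless of how long an interaction lasts, recordings of different durations map to vectors an SVM can compare directly.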
