Mengfei Zhao

TensorNEAT: A GPU-accelerated Library for NeuroEvolution of Augmenting Topologies

Apr 11, 2025

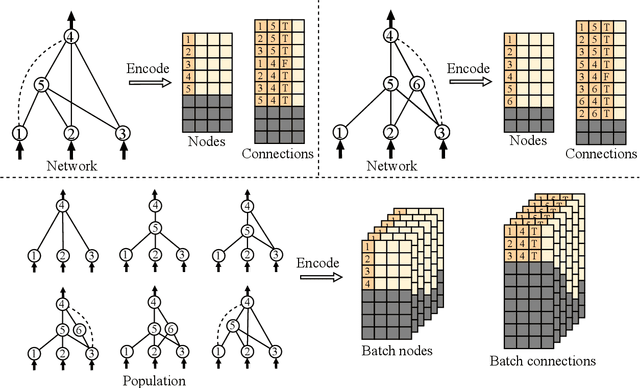

Abstract: The NeuroEvolution of Augmenting Topologies (NEAT) algorithm has received considerable recognition in the field of neuroevolution. Its effectiveness is derived from initiating with simple networks and incrementally evolving both their topologies and weights. Although its capability across various challenges is evident, the algorithm's computational efficiency remains an impediment, limiting its scalability potential. To address these limitations, this paper introduces TensorNEAT, a GPU-accelerated library that applies tensorization to the NEAT algorithm. Tensorization reformulates NEAT's diverse network topologies and operations into uniformly shaped tensors, enabling efficient parallel execution across entire populations. TensorNEAT is built upon JAX, leveraging automatic function vectorization and hardware acceleration to significantly enhance computational efficiency. In addition to NEAT, the library supports variants such as CPPN and HyperNEAT, and integrates with benchmark environments like Gym, Brax, and gymnax. Experimental evaluations across various robotic control environments in Brax demonstrate that TensorNEAT delivers up to 500x speedups compared to existing implementations, such as NEAT-Python. The source code for TensorNEAT is publicly available at: https://github.com/EMI-Group/tensorneat.

Tensorized NeuroEvolution of Augmenting Topologies for GPU Acceleration

Apr 11, 2024

Abstract: The NeuroEvolution of Augmenting Topologies (NEAT) algorithm has received considerable recognition in the field of neuroevolution. Its effectiveness is derived from initiating with simple networks and incrementally evolving both their topologies and weights. Although its capability across various challenges is evident, the algorithm's computational efficiency remains an impediment, limiting its scalability potential. In response, this paper introduces a tensorization method for the NEAT algorithm, enabling the transformation of its diverse network topologies and associated operations into uniformly shaped tensors for computation. This advancement allows the NEAT algorithm to be executed in parallel across the entire population. Furthermore, we develop TensorNEAT, a library that implements the tensorized NEAT algorithm and its variants, such as CPPN and HyperNEAT. Building upon JAX, TensorNEAT promotes efficient parallel computations via automated function vectorization and hardware acceleration. Moreover, the TensorNEAT library supports various benchmark environments including Gym, Brax, and gymnax. Through evaluations across a spectrum of robotics control environments in Brax, TensorNEAT achieves up to 500x speedups compared to existing implementations such as NEAT-Python. The source code is available at: https://github.com/EMI-Group/tensorneat.
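The tensorization idea described in the abstracts can be illustrated with a minimal JAX sketch. It is not TensorNEAT's actual API or encoding: the names `MAX_NODES`, `make_genome`, and `forward`, the dense weight-plus-mask representation, and the convention that nodes are indexed in topological order are all assumptions made for illustration. Two networks with different topologies are padded to the same tensor shape, so a single `jax.vmap` call can evaluate the whole population at once.

```python
import jax
import jax.numpy as jnp

MAX_NODES = 5            # padded node capacity shared by every network (assumption)
NUM_INPUTS = 2           # nodes 0..1 are inputs
OUT = MAX_NODES - 1      # the last node is the single output (assumption)


def make_genome(connections):
    """Encode a list of (src, dst, weight) connections as padded tensors."""
    weights = jnp.zeros((MAX_NODES, MAX_NODES))
    mask = jnp.zeros((MAX_NODES, MAX_NODES), dtype=bool)
    for src, dst, w in connections:
        weights = weights.at[src, dst].set(w)
        mask = mask.at[src, dst].set(True)
    return weights, mask


def forward(weights, mask, inputs):
    """Evaluate one padded feed-forward network.

    weights[i, j] is the weight of connection i -> j and mask[i, j] marks
    whether that connection exists. Nodes are assumed to be indexed in
    topological order, so every enabled connection satisfies i < j.
    """
    w = jnp.where(mask, weights, 0.0)
    values = jnp.zeros(MAX_NODES).at[:NUM_INPUTS].set(inputs)

    def update_node(j, vals):
        # Unused (padding) nodes have no incoming connections and stay at tanh(0) = 0.
        return vals.at[j].set(jnp.tanh(vals @ w[:, j]))

    values = jax.lax.fori_loop(NUM_INPUTS, MAX_NODES, update_node, values)
    return values[OUT]


# Two different topologies that share one tensor shape after padding.
net_a = make_genome([(0, OUT, 0.5), (1, OUT, -0.5)])           # no hidden node
net_b = make_genome([(0, 2, 1.0), (1, 2, 1.0), (2, OUT, 2.0)])  # one hidden node

pop_weights = jnp.stack([net_a[0], net_b[0]])  # shape (2, MAX_NODES, MAX_NODES)
pop_mask = jnp.stack([net_a[1], net_b[1]])

# Vectorize over the population axis; the same input is shared by all networks.
batched_forward = jax.jit(jax.vmap(forward, in_axes=(0, 0, None)))
x = jnp.array([1.0, 0.0])
outputs = batched_forward(pop_weights, pop_mask, x)
print(outputs)
```

The cost of this design is wasted computation on padding entries. The benefit is that every network in the population has the same shape, which is what lets function vectorization and hardware acceleration apply to populations of structurally different networks.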
