Mehran Andalibi

RGB-D Robotic Pose Estimation For a Servicing Robotic Arm

Jul 23, 2022

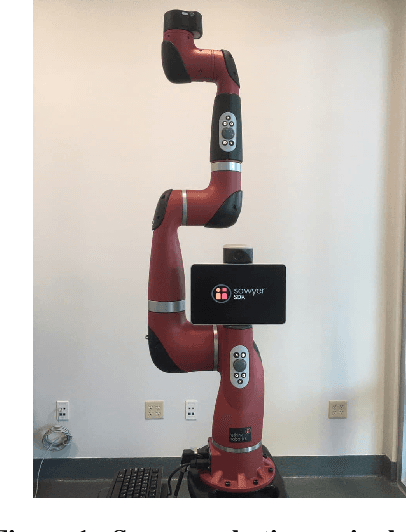

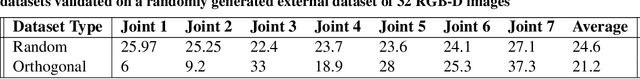

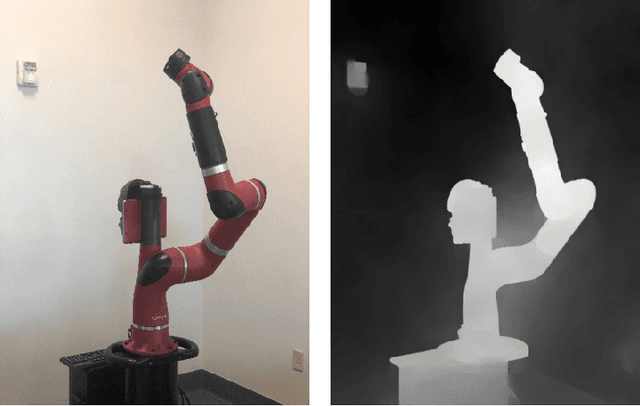

Abstract:A large number of robotic and human-assisted missions to the Moon and Mars are forecast. NASA's efforts to learn about the geology and makeup of these celestial bodies rely heavily on the use of robotic arms. The safety and redundancy aspects will be crucial when humans will be working alongside the robotic explorers. Additionally, robotic arms are crucial to satellite servicing and planned orbit debris mitigation missions. The goal of this work is to create a custom Computer Vision (CV) based Artificial Neural Network (ANN) that would be able to rapidly identify the posture of a 7 Degree of Freedom (DoF) robotic arm from a single (RGB-D) image - just like humans can easily identify if an arm is pointing in some general direction. The Sawyer robotic arm is used for developing and training this intelligent algorithm. Since Sawyer's joint space spans 7 dimensions, it is an insurmountable task to cover the entire joint configuration space. In this work, orthogonal arrays are used, similar to the Taguchi method, to efficiently span the joint space with the minimal number of training images. This ``optimally'' generated database is used to train the custom ANN and its degree of accuracy is on average equal to twice the smallest joint displacement step used for database generation. A pre-trained ANN will be useful for estimating the postures of robotic manipulators used on space stations, spacecraft, and rovers as an auxiliary tool or for contingency plans.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge