May Nathan

PriorPath: Coarse-To-Fine Approach for Controlled De-Novo Pathology Semantic Masks Generation

Nov 25, 2024

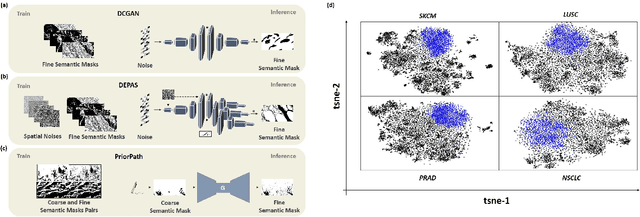

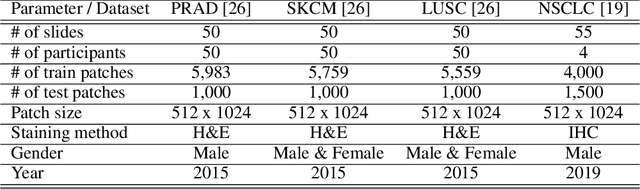

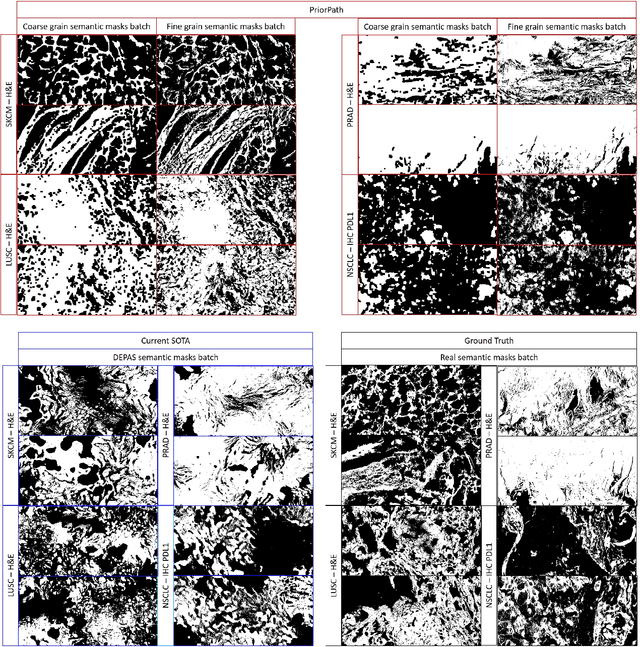

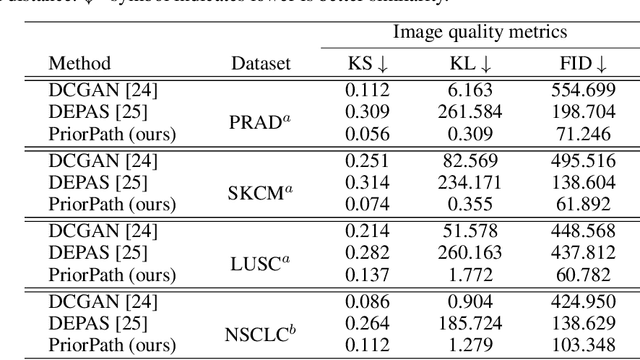

Abstract:Incorporating artificial intelligence (AI) into digital pathology offers promising prospects for automating and enhancing tasks such as image analysis and diagnostic processes. However, the diversity of tissue samples and the necessity for meticulous image labeling often result in biased datasets, constraining the applicability of algorithms trained on them. To harness synthetic histopathological images to cope with this challenge, it is essential not only to produce photorealistic images but also to be able to exert control over the cellular characteristics they depict. Previous studies used methods to generate, from random noise, semantic masks that captured the spatial distribution of the tissue. These masks were then used as a prior for conditional generative approaches to produce photorealistic histopathological images. However, as with many other generative models, this solution exhibits mode collapse as the model fails to capture the full diversity of the underlying data distribution. In this work, we present a pipeline, coined PriorPath, that generates detailed, realistic, semantic masks derived from coarse-grained images delineating tissue regions. This approach enables control over the spatial arrangement of the generated masks and, consequently, the resulting synthetic images. We demonstrated the efficacy of our method across three cancer types, skin, prostate, and lung, showcasing PriorPath's capability to cover the semantic mask space and to provide better similarity to real masks compared to previous methods. Our approach allows for specifying desired tissue distributions and obtaining both photorealistic masks and images within a single platform, thus providing a state-of-the-art, controllable solution for generating histopathological images to facilitate AI for computational pathology.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge