Matteo Pozzi

A Multicentric Dataset for Training and Benchmarking Breast Cancer Segmentation in H&E Slides

Oct 02, 2025

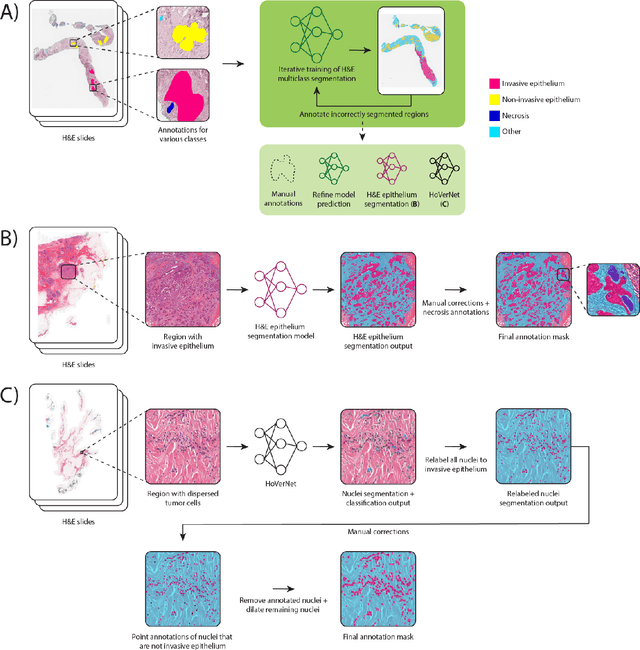

Abstract: Automated semantic segmentation of whole-slide images (WSIs) stained with hematoxylin and eosin (H&E) is essential for large-scale artificial intelligence-based biomarker analysis in breast cancer. However, existing public datasets for breast cancer segmentation lack the morphological diversity needed to support model generalizability and robust biomarker validation across heterogeneous patient cohorts. We introduce BrEast cancEr hisTopathoLogy sEgmentation (BEETLE), a dataset for multiclass semantic segmentation of H&E-stained breast cancer WSIs. It consists of 587 biopsies and resections from three collaborating clinical centers and two public datasets, digitized using seven scanners, and covers all molecular subtypes and histological grades. Using diverse annotation strategies, we collected annotations across four classes (invasive epithelium, non-invasive epithelium, necrosis, and other), with particular focus on morphologies underrepresented in existing datasets, such as ductal carcinoma in situ and dispersed lobular tumor cells. The dataset's diversity and relevance to the rapidly growing field of automated biomarker quantification in breast cancer ensure its high potential for reuse. Finally, we provide a well-curated, multicentric external evaluation set to enable standardized benchmarking of breast cancer segmentation models.

Subsidy design for better social outcomes

Sep 04, 2024

Abstract: Overcoming the impact of selfish behavior of rational players in multiagent systems is a fundamental problem in game theory. Without any intervention from a central agent, strategic users take actions in order to maximize their personal utility, which can lead to extremely inefficient overall system performance, often indicated by a high Price of Anarchy. Recent work (Lin et al. 2021) investigated and formalized yet another undesirable behavior of rational agents, that of avoiding freely available information about the game for selfish reasons, leading to worse social outcomes. A central planner can significantly mitigate these issues by injecting a subsidy to reduce certain costs associated with the system and obtain net gains in the system performance. Crucially, the planner needs to determine how to allocate this subsidy effectively. We formally show that designing subsidies that perfectly optimize the social good, in terms of minimizing the Price of Anarchy or preventing the information avoidance behavior, is computationally hard under standard complexity theoretic assumptions. On the positive side, we show that we can learn provably good values of subsidy in repeated games coming from the same domain. This data-driven subsidy design approach avoids solving computationally hard problems for unseen games by learning over polynomially many games. We also show that optimal subsidy can be learned with no-regret given an online sequence of games, under mild assumptions on the cost matrix. Our study focuses on two distinct games: a Bayesian extension of the well-studied fair cost-sharing game, and a component maintenance game with engineering applications.
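To make the Price of Anarchy and the effect of a subsidy concrete, here is a minimal sketch on a toy, complete-information fair cost-sharing game. It is an illustration under stated assumptions, not the paper's Bayesian model or its algorithms: the two edges `A` and `B`, their costs, the subsidy of 0.1, and all function names are hypothetical. Social cost is taken to be the sum of player payments, and only pure Nash equilibria are enumerated.

```python
from itertools import product

def player_costs(profile, edge_cost):
    """Fair cost sharing: each edge's cost is split equally among its users."""
    users = {edge: profile.count(edge) for edge in set(profile)}
    return [edge_cost[edge] / users[edge] for edge in profile]

def is_pure_nash(profile, edge_cost):
    """True if no player can strictly lower their own cost by switching edge."""
    costs = player_costs(profile, edge_cost)
    for i, current in enumerate(profile):
        for alternative in edge_cost:
            if alternative == current:
                continue
            deviated = profile[:i] + (alternative,) + profile[i + 1:]
            if player_costs(deviated, edge_cost)[i] < costs[i] - 1e-12:
                return False
    return True

def price_of_anarchy(edge_cost, n_players):
    """Worst pure-equilibrium social cost divided by the optimal social cost."""
    profiles = list(product(edge_cost, repeat=n_players))
    social = {p: sum(player_costs(p, edge_cost)) for p in profiles}
    optimum = min(social.values())
    worst_equilibrium = max(social[p] for p in profiles if is_pure_nash(p, edge_cost))
    return worst_equilibrium / optimum

# Hypothetical toy instance: two players, a cheap edge A and an expensive edge B.
base = {"A": 1.0, "B": 2.0}
# Assumed subsidy: the planner pays 0.1 of edge A's cost.
subsidized = {"A": 0.9, "B": 2.0}

poa_before = price_of_anarchy(base, n_players=2)
poa_after = price_of_anarchy(subsidized, n_players=2)
print(poa_before, poa_after)
```

In this toy instance both players sharing the expensive edge `B` is an equilibrium without subsidy, because a lone deviation to `A` costs exactly as much as staying. A small subsidy on `A` makes that deviation strictly profitable, which removes the bad equilibrium. Brute-force enumeration like this grows exponentially with the number of players, so it is only suitable for tiny examples.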

Information Avoidance and Overvaluation in Sequential Decision Making under Epistemic Constraints

Jun 09, 2021

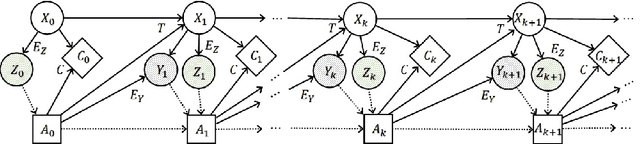

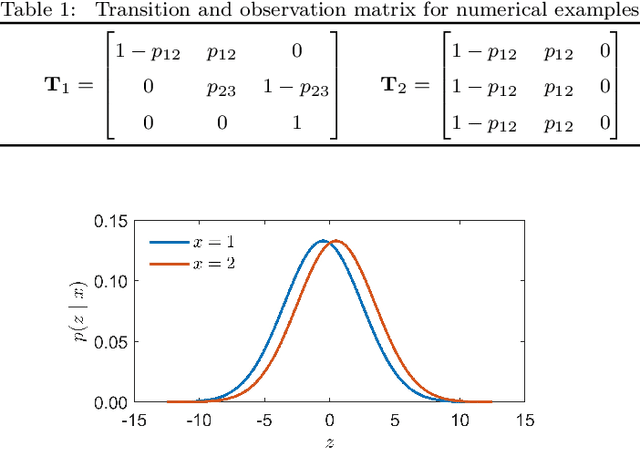

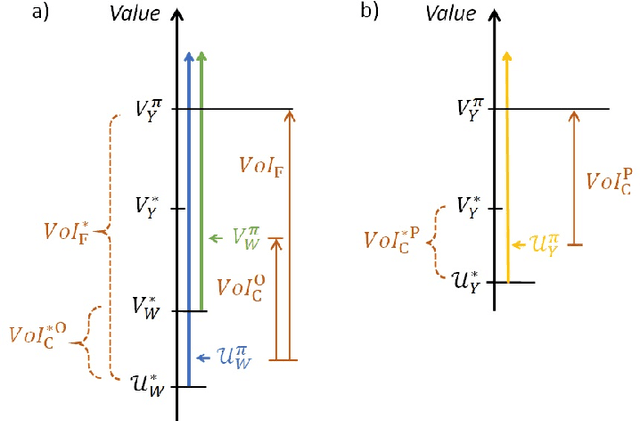

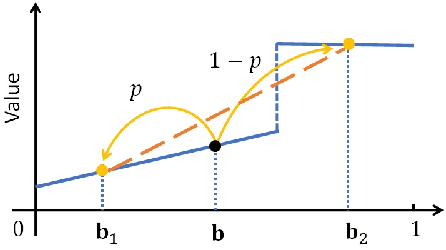

Abstract: Decision makers involved in the management of civil assets and systems usually take actions under constraints imposed by societal regulations. Some of these constraints are related to epistemic quantities, such as the probability of failure events and the corresponding risks. Sensors and inspectors can provide useful information supporting the control process (e.g., the maintenance process of an asset), and decisions about collecting this information should rely on an analysis of its cost and value. When societal regulations encode an economic perspective that is not aligned with that of the decision makers, the Value of Information (VoI) can be negative (i.e., information sometimes hurts), and almost irrelevant information can even have a significant value (either positive or negative), for agents acting under these epistemic constraints. We refer to these phenomena as Information Avoidance (IA) and Information OverValuation (IOV). In this paper, we illustrate how to assess VoI in sequential decision making under epistemic constraints (such as those imposed by societal regulations), by modeling a Partially Observable Markov Decision Process (POMDP) and evaluating non-optimal policies via Finite State Controllers (FSCs). We focus on the value of collecting information at the current time and on that of collecting sequential information; we illustrate how these values are related and discuss how IA and IOV can occur in those settings.
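The possibility of a negative VoI under an epistemic constraint can be illustrated with a single-step sketch. This is not the paper's POMDP or FSC formulation. It assumes a hypothetical asset that is either damaged or intact, a binary sensor, and a regulation that forces repair whenever the probability of damage exceeds `max_allowed_risk`. All names and numbers are assumptions chosen for illustration.

```python
def expected_cost(p_damaged, loss, repair_cost, max_allowed_risk=None):
    """Expected cost of the best permitted action for a given belief."""
    if max_allowed_risk is not None and p_damaged > max_allowed_risk:
        return repair_cost  # the regulation forces repair
    wait_cost = p_damaged * loss  # doing nothing risks the failure loss
    return min(wait_cost, repair_cost)

def value_of_information(prior, p_alarm_damaged, p_alarm_intact,
                         loss, repair_cost, max_allowed_risk=None):
    """VoI = expected cost without the sensor minus expected cost with it."""
    cost_without = expected_cost(prior, loss, repair_cost, max_allowed_risk)
    cost_with = 0.0
    # Two possible observations: alarm and silence.
    outcomes = (
        (p_alarm_damaged, p_alarm_intact),
        (1.0 - p_alarm_damaged, 1.0 - p_alarm_intact),
    )
    for like_damaged, like_intact in outcomes:
        p_obs = prior * like_damaged + (1.0 - prior) * like_intact
        if p_obs == 0.0:
            continue
        posterior = prior * like_damaged / p_obs  # Bayes' rule
        cost_with += p_obs * expected_cost(posterior, loss, repair_cost,
                                           max_allowed_risk)
    return cost_without - cost_with

# Hypothetical numbers: the agent alone would repair only above 50% risk
# (repair_cost / loss), but the regulation forces repair above 10% risk.
params = dict(prior=0.08, p_alarm_damaged=0.8, p_alarm_intact=0.2,
              loss=100.0, repair_cost=50.0)
voi_constrained = value_of_information(**params, max_allowed_risk=0.10)
voi_unconstrained = value_of_information(**params)
print(voi_constrained, voi_unconstrained)
```

With these assumed numbers, an alarm raises the probability of damage to about 0.26. That is above the regulatory threshold but below the level at which the agent would choose to repair. The forced repair makes the constrained VoI about -6, while the unconstrained VoI is 0, so the constrained agent would prefer not to observe the sensor at all.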
