Maryam Ziaei

Self Punishment and Reward Backfill for Deep Q-Learning

Apr 10, 2020

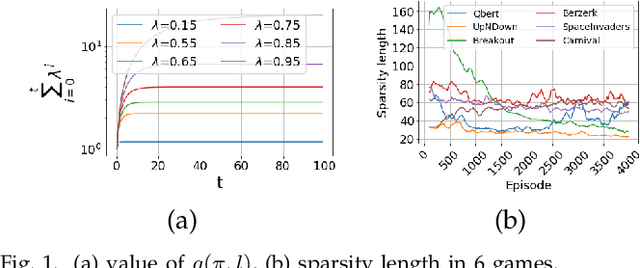

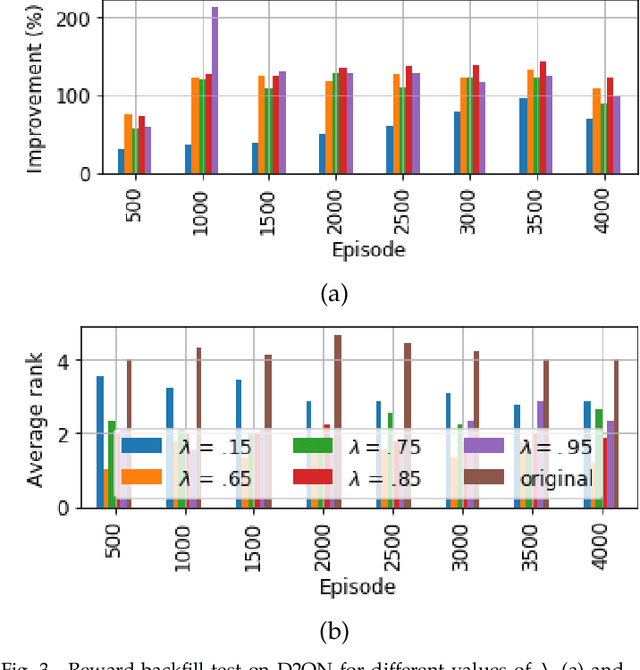

Abstract: Reinforcement learning agents learn by encouraging behaviours that maximize their total reward, which is usually provided by the environment. In many environments, however, the reward arrives after a series of actions rather than after each single action, leaving the agent uncertain about which of those actions were effective, an issue called the credit assignment problem. In this paper, we propose two strategies, inspired by behavioural psychology, to estimate a more informative reward value for actions with no reward. The first strategy, called self-punishment, discourages the agent from making mistakes, i.e., actions which lead to a terminal state. The second strategy, called reward backfill, backpropagates the rewards between two rewarded actions. We prove that, under certain assumptions, these two strategies maintain the order of the policies in the space of all possible policies in terms of their total reward, and, by extension, maintain the optimal policy. We incorporated these two strategies into three popular deep reinforcement learning approaches and evaluated the results on thirty Atari games. After parameter tuning, our results indicate that the proposed strategies improve the tested methods in over 65 percent of the tested games, with performance gains of more than 25 times in the best case.
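To make the two strategies concrete, the following is a minimal Python sketch of how they might act on the reward sequence of a single episode. The abstract does not give the exact formulas, so everything specific here is an assumption made for illustration: the function names `self_punish` and `backfill`, the fixed `penalty` value, the geometric `decay` factor, and the convention that a reward of zero means "no reward". It should not be read as the paper's actual method.

```python
from typing import List


def self_punish(rewards: List[float], penalty: float = -1.0) -> List[float]:
    """Assumed form of self-punishment: if the action that led to the
    terminal state received no reward, give it a negative reward."""
    shaped = list(rewards)
    if shaped and shaped[-1] == 0.0:
        shaped[-1] = penalty  # hypothetical fixed penalty
    return shaped


def backfill(rewards: List[float], decay: float = 0.9) -> List[float]:
    """Assumed form of reward backfill: for every unrewarded step lying
    between two rewarded steps, assign a decayed copy of the later reward."""
    shaped = list(rewards)
    rewarded = [t for t, r in enumerate(rewards) if r != 0.0]
    # Walk over consecutive pairs of rewarded steps (start, end).
    for start, end in zip(rewarded, rewarded[1:]):
        for t in range(start + 1, end):
            # Steps closer to the later reward receive a larger share.
            shaped[t] = rewards[end] * decay ** (end - t)
    return shaped


if __name__ == "__main__":
    episode = [1.0, 0.0, 0.0, 2.0, 0.0]  # last action ended the episode
    print(backfill(episode, decay=0.5))
    print(self_punish(episode, penalty=-1.0))
```

In this sketch the two transformations are kept separate so that each can be applied on its own; whether and how the original work combines them, and whether the penalty itself is backfilled, is not stated in the abstract.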
