Martin Claverie

From Rows to Yields: How Foundation Models for Tabular Data Simplify Crop Yield Prediction

Jun 23, 2025Abstract:We present an application of a foundation model for small- to medium-sized tabular data (TabPFN), to sub-national yield forecasting task in South Africa. TabPFN has recently demonstrated superior performance compared to traditional machine learning (ML) models in various regression and classification tasks. We used the dekadal (10-days) time series of Earth Observation (EO; FAPAR and soil moisture) and gridded weather data (air temperature, precipitation and radiation) to forecast the yield of summer crops at the sub-national level. The crop yield data was available for 23 years and for up to 8 provinces. Covariate variables for TabPFN (i.e., EO and weather) were extracted by region and aggregated at a monthly scale. We benchmarked the results of the TabPFN against six ML models and three baseline models. Leave-one-year-out cross-validation experiment setting was used in order to ensure the assessment of the models capacity to forecast an unseen year. Results showed that TabPFN and ML models exhibit comparable accuracy, outperforming the baselines. Nonetheless, TabPFN demonstrated superior practical utility due to its significantly faster tuning time and reduced requirement for feature engineering. This renders TabPFN a more viable option for real-world operation yield forecasting applications, where efficiency and ease of implementation are paramount.

Ai4Fapar: How artificial intelligence can help to forecast the seasonal earth observation signal

Feb 08, 2024

Abstract:This paper investigated the potential of a multivariate Transformer model to forecast the temporal trajectory of the Fraction of Absorbed Photosynthetically Active Radiation (FAPAR) for short (1 month) and long horizon (more than 1 month) periods at the regional level in Europe and North Africa. The input data covers the period from 2002 to 2022 and includes remote sensing and weather data for modelling FAPAR predictions. The model was evaluated using a leave one year out cross-validation and compared with the climatological benchmark. Results show that the transformer model outperforms the benchmark model for one month forecasting horizon, after which the climatological benchmark is better. The RMSE values of the transformer model ranged from 0.02 to 0.04 FAPAR units for the first 2 months of predictions. Overall, the tested Transformer model is a valid method for FAPAR forecasting, especially when combined with weather data and used for short-term predictions.

Boosting Crop Classification by Hierarchically Fusing Satellite, Rotational, and Contextual Data

May 25, 2023Abstract:Accurate in-season crop type classification is crucial for the crop production estimation and monitoring of agricultural parcels. However, the complexity of the plant growth patterns and their spatio-temporal variability present significant challenges. While current deep learning-based methods show promise in crop type classification from single- and multi-modal time series, most existing methods rely on a single modality, such as satellite optical remote sensing data or crop rotation patterns. We propose a novel approach to fuse multimodal information into a model for improved accuracy and robustness across multiple years and countries. The approach relies on three modalities used: remote sensing time series from Sentinel-2 and Landsat 8 observations, parcel crop rotation and local crop distribution. To evaluate our approach, we release a new annotated dataset of 7.4 million agricultural parcels in France and Netherlands. We associate each parcel with time-series of surface reflectance (Red and NIR) and biophysical variables (LAI, FAPAR). Additionally, we propose a new approach to automatically aggregate crop types into a hierarchical class structure for meaningful model evaluation and a novel data-augmentation technique for early-season classification. Performance of the multimodal approach was assessed at different aggregation level in the semantic domain spanning from 151 to 8 crop types or groups. It resulted in accuracy ranging from 91\% to 95\% for NL dataset and from 85\% to 89\% for FR dataset. Pre-training on a dataset improves domain adaptation between countries, allowing for cross-domain zero-shot learning, and robustness of the performances in a few-shot setting from France to Netherlands. Our proposed approach outperforms comparable methods by enabling learning methods to use the often overlooked spatio-temporal context of parcels, resulting in increased preci...

Multimodal Crop Type Classification Fusing Multi-Spectral Satellite Time Series with Farmers Crop Rotations and Local Crop Distribution

Aug 23, 2022

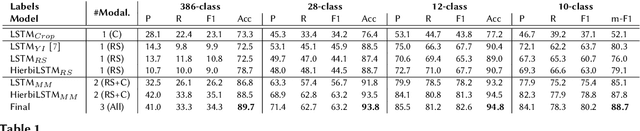

Abstract:Accurate, detailed, and timely crop type mapping is a very valuable information for the institutions in order to create more accurate policies according to the needs of the citizens. In the last decade, the amount of available data dramatically increased, whether it can come from Remote Sensing (using Copernicus Sentinel-2 data) or directly from the farmers (providing in-situ crop information throughout the years and information on crop rotation). Nevertheless, the majority of the studies are restricted to the use of one modality (Remote Sensing data or crop rotation) and never fuse the Earth Observation data with domain knowledge like crop rotations. Moreover, when they use Earth Observation data they are mainly restrained to one year of data, not taking into account the past years. In this context, we propose to tackle a land use and crop type classification task using three data types, by using a Hierarchical Deep Learning algorithm modeling the crop rotations like a language model, the satellite signals like a speech signal and using the crop distribution as additional context vector. We obtained very promising results compared to classical approaches with significant performances, increasing the Accuracy by 5.1 points in a 28-class setting (.948), and the micro-F1 by 9.6 points in a 10-class setting (.887) using only a set of crop of interests selected by an expert. We finally proposed a data-augmentation technique to allow the model to classify the crop before the end of the season, which works surprisingly well in a multimodal setting.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge