Marsette Vona

Curved patch mapping and tracking for irregular terrain modeling: Application to bipedal robot foot placement

Apr 05, 2020

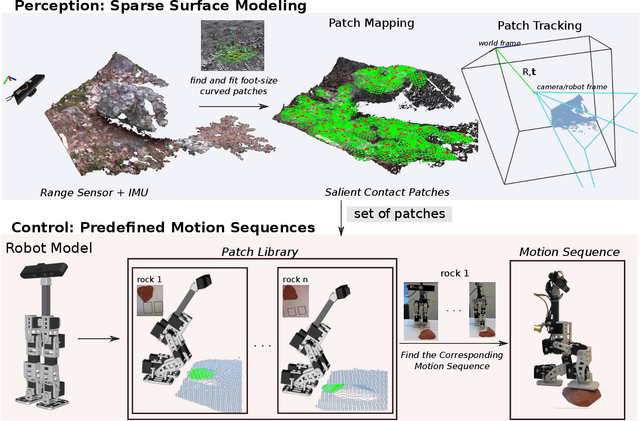

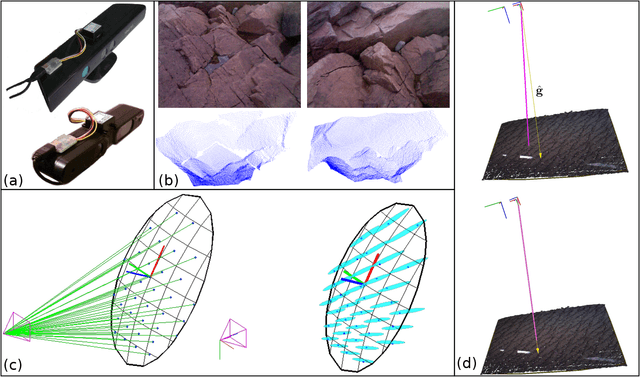

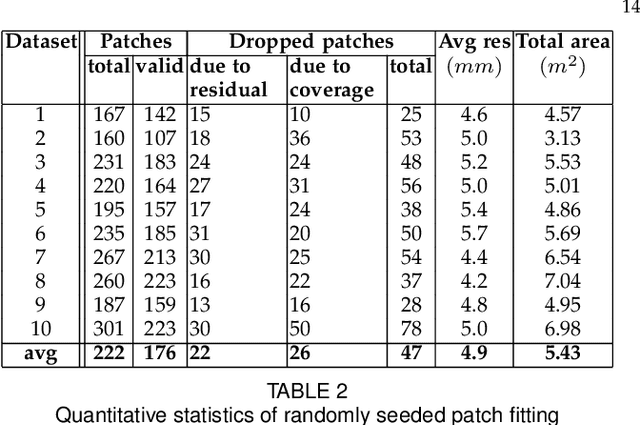

Abstract: Legged robots must make contact with irregular surfaces when operating in unstructured natural terrain. Representing and perceiving these surfaces in order to reason about potential contact between a robot and its surrounding environment is still largely an open problem. This paper introduces a new framework to model and map local rough terrain surfaces for tasks such as bipedal robot foot placement. The system operates in real time on data from an RGB-D sensor and an IMU. We introduce a set of parametrized patch models and an algorithm to fit them to the environment. Potential contacts are identified as bounded curved patches of approximately the same size as the robot's foot sole. Identifying them involves sparse seed point sampling, point cloud neighborhood search, and patch fitting and validation. We also present a mapping and tracking system in which patches are maintained in a local spatial map around the robot as it moves. A bio-inspired sampling algorithm is introduced for finding salient contacts. We include a dense volumetric fusion layer for spatiotemporal tracking, which uses multiple depth measurements to reconstruct a local point cloud. We present experimental results on a mini-biped robot that performs foot placements on rocks, implementing a 3D foothold perception system that uses the developed patch mapping and tracking framework.

* arXiv admin note: text overlap with arXiv:1612.06164
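
The abstract names three steps for finding candidate contacts: sparse seed point sampling, point cloud neighborhood search, and patch fitting and validation. The sketch below illustrates that sequence on a plain NumPy point cloud. It is not the paper's implementation: uniform random seed sampling, a brute-force radius search, a least-squares quadratic height fit in a PCA-aligned frame, and an RMS residual threshold are all assumptions chosen for illustration, as are the function names and default parameter values.

```python
import numpy as np

def sample_seeds(points, n_seeds, rng):
    """Pick seed points uniformly at random (assumed sampling strategy)."""
    idx = rng.choice(len(points), size=min(n_seeds, len(points)), replace=False)
    return points[idx]

def neighborhood(points, seed, radius):
    """Brute-force radius search: all points within `radius` of the seed."""
    dist = np.linalg.norm(points - seed, axis=1)
    return points[dist <= radius]

def fit_patch(neighbors):
    """Fit a quadratic height function in a PCA-aligned local frame.

    Returns the six polynomial coefficients and the RMS residual.
    """
    centroid = neighbors.mean(axis=0)
    centered = neighbors - centroid
    # Rows of vt are principal directions; the last one (least variance)
    # serves as the approximate surface normal.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    local = centered @ vt.T
    x, y, z = local[:, 0], local[:, 1], local[:, 2]
    design = np.column_stack([x * x, x * y, y * y, x, y, np.ones_like(x)])
    coeffs, *_ = np.linalg.lstsq(design, z, rcond=None)
    rms = float(np.sqrt(np.mean((design @ coeffs - z) ** 2)))
    return coeffs, rms

def find_patches(points, n_seeds=20, radius=0.05, min_points=10,
                 max_rms=0.005, seed=0):
    """Sample seeds, gather neighborhoods, fit, and keep patches that validate."""
    rng = np.random.default_rng(seed)
    patches = []
    for s in sample_seeds(points, n_seeds, rng):
        nb = neighborhood(points, s, radius)
        if len(nb) < min_points:        # validation: enough support
            continue
        coeffs, rms = fit_patch(nb)
        if rms <= max_rms:              # validation: fit is tight enough
            patches.append({"seed": s, "coeffs": coeffs, "rms": rms})
    return patches
```

In this sketch the search radius plays the role of the foot-sole size mentioned in the abstract, so `radius` would be set to roughly half the sole length in meters. A real-time system would likely replace the brute-force search with a spatial index or an organized depth image lookup.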
