Manuel Gomes

Canonical Space Representation for 4D Panoptic Segmentation of Articulated Objects

Nov 07, 2025

Abstract:Articulated object perception presents significant challenges in computer vision, particularly because most existing methods ignore temporal dynamics despite the inherently dynamic nature of such objects. The use of 4D temporal data has not been thoroughly explored in articulated object perception and remains unexamined for panoptic segmentation. The lack of a benchmark dataset further hinders progress in this field. To this end, we introduce Artic4D, a new dataset derived from PartNet Mobility and augmented with synthetic sensor data, featuring 4D panoptic annotations and articulation parameters. Building on this dataset, we propose CanonSeg4D, a novel 4D panoptic segmentation framework. This approach explicitly estimates per-frame offsets that map observed object parts to a learned canonical space, thereby enhancing part-level segmentation. The framework uses this canonical representation to align object parts consistently across sequential frames. Comprehensive experiments on Artic4D demonstrate that CanonSeg4D outperforms state-of-the-art approaches in panoptic segmentation accuracy in more complex scenarios. These findings highlight the effectiveness of temporal modeling and canonical alignment in dynamic object understanding, and pave the way for future advances in 4D articulated object perception.

Volumetric Occupancy Detection: A Comparative Analysis of Mapping Algorithms

Jul 06, 2023

Abstract:Despite the growing interest in innovative functionalities for collaborative robotics, volumetric detection remains indispensable for ensuring basic security. However, there is a lack of widely used volumetric detection frameworks specifically tailored to this domain, and existing evaluation metrics primarily focus on time and memory efficiency. To bridge this gap, the authors present a detailed comparison built on a simulation environment, ground truth extraction, and automated calculation of evaluation metrics. This enables the evaluation of state-of-the-art volumetric mapping algorithms, including OctoMap, SkiMap, and Voxblox, and provides insights through both qualitative and quantitative analyses. The study not only compares the frameworks with one another but also explores various parameters within each framework, offering additional insights into their performance.
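As a rough illustration of what an automated metric against extracted ground truth could look like, the sketch below voxelizes two point sets and reports voxel-level precision and recall. This is not the authors' metric and does not use the OctoMap, SkiMap, or Voxblox APIs; the function names `voxelize` and `occupancy_scores` and the 0.1 m voxel size are assumptions made purely for illustration.

```python
import numpy as np


def voxelize(points, voxel_size):
    """Return the set of integer voxel indices occupied by an (N, 3) point array."""
    idx = np.floor(np.asarray(points, dtype=float) / voxel_size).astype(int)
    return {tuple(int(c) for c in v) for v in idx}


def occupancy_scores(mapped_points, truth_points, voxel_size=0.1):
    """Voxel-level precision and recall of a map against ground truth.

    Hypothetical metric for illustration only: a voxel counts as a true
    positive when it is occupied in both the map and the ground truth.
    """
    mapped = voxelize(mapped_points, voxel_size)
    truth = voxelize(truth_points, voxel_size)
    true_positives = len(mapped & truth)
    precision = true_positives / len(mapped) if mapped else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    return precision, recall


if __name__ == "__main__":
    truth = [[0.05, 0.05, 0.05], [0.15, 0.05, 0.05]]
    mapped = [[0.05, 0.05, 0.05], [0.95, 0.95, 0.95]]
    print(occupancy_scores(mapped, truth))
```

Sweeping `voxel_size` in a sketch like this loosely mirrors the idea of exploring parameters within a framework, since coarser voxels tend to raise both scores while hiding small mapping errors.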

A sensor-to-pattern calibration framework for multi-modal industrial collaborative cells

Oct 19, 2022

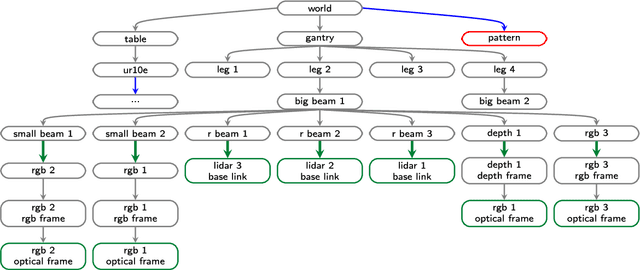

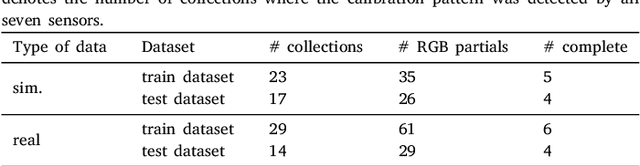

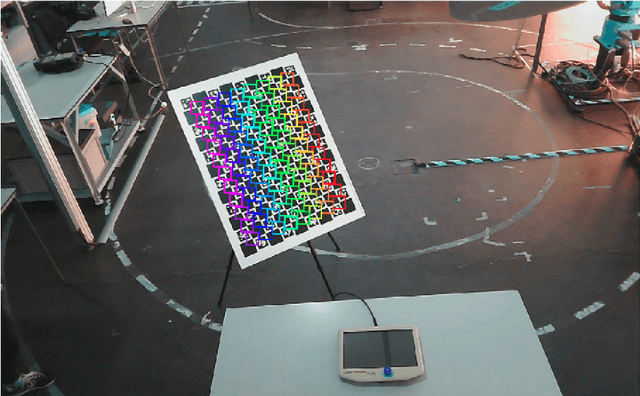

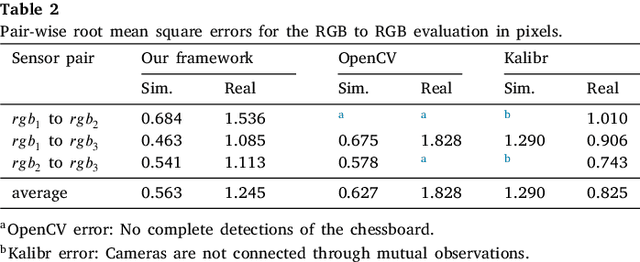

Abstract:Collaborative robotic industrial cells are workspaces where robots collaborate with human operators. In this context, safety is paramount, and it requires complete perception of the space in which the collaborative robot operates. To ensure this, collaborative cells are equipped with a large set of sensors of multiple modalities, covering the entire work volume. However, fusing the information from all these sensors requires an accurate extrinsic calibration. Calibrating such complex systems is challenging because of the number of sensors and modalities, and because the sensors, which are positioned to capture different viewpoints of the cell, have only small overlapping fields of view. This paper proposes a sensor-to-pattern methodology that can calibrate a complex system such as a collaborative cell in a single optimization procedure. Our methodology can handle RGB and depth cameras, as well as LiDARs. Results show that it accurately calibrates a collaborative cell containing three RGB cameras, a depth camera, and three 3D LiDARs.

* Journal of Manufacturing Systems
