Manoj Gulati

PixelGen: Rethinking Embedded Camera Systems

Feb 04, 2024

Abstract: Embedded camera systems are ubiquitous, representing the most widely deployed example of a wireless embedded system. They capture a representation of the world - the surroundings illuminated by visible or infrared light. Despite their widespread usage, the architecture of embedded camera systems has remained unchanged, which leads to limitations. They visualize only a tiny portion of the world. Additionally, they are energy-intensive, leading to limited battery lifespan. We present PixelGen, which re-imagines embedded camera systems. Specifically, PixelGen combines sensors, transceivers, and low-resolution image and infrared vision sensors to capture a broader world representation. They are deliberately chosen for their simplicity, low bitrate, and power consumption, culminating in an energy-efficient platform. We show that despite the simplicity, the captured data can be processed using transformer-based image and language models to generate novel representations of the environment. For example, we demonstrate that it can allow the generation of high-definition images, while the camera utilises low-power, low-resolution monochrome cameras. Furthermore, the capabilities of PixelGen extend beyond traditional photography, enabling visualization of phenomena invisible to conventional cameras, such as sound waves. PixelGen can enable numerous novel applications, and we demonstrate that it enables unique visualization of the surroundings that are then projected on extended reality headsets. We believe PixelGen goes beyond conventional cameras and opens new avenues for research and photography.

VoCopilot: Voice-Activated Tracking of Everyday Interactions

Dec 15, 2023

Abstract: Voice plays an important role in our lives by facilitating communication, conveying emotions, and indicating health. Therefore, tracking vocal interactions can provide valuable insight into many aspects of our lives. This paper presents our ongoing efforts to design a new vocal tracking system we call VoCopilot. VoCopilot is an end-to-end system centered around energy-efficient acoustic hardware and firmware combined with advanced machine learning models. As a result, VoCopilot is able to continuously track conversations, record them, transcribe them, and then extract useful insights from them. By utilizing large language models, VoCopilot ensures the user can extract useful insights from recorded interactions without having to learn complex machine learning techniques. In order to protect the privacy of end users, VoCopilot uses a novel wake-up mechanism that only records conversations of end users. Additionally, the rest of the pipeline can be run on a commodity computer (Mac Mini M2). In this work, we show the effectiveness of VoCopilot in a real-world environment for two use cases.

LEAD1.0: A Large-scale Annotated Dataset for Energy Anomaly Detection in Commercial Buildings

Mar 30, 2022

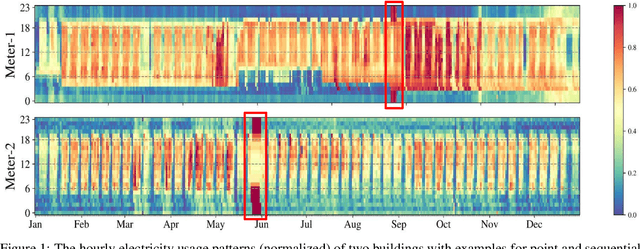

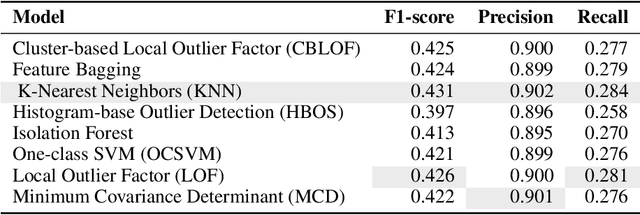

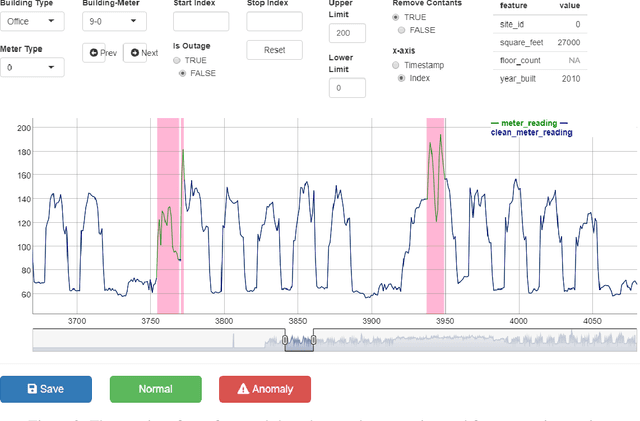

Abstract: Modern buildings are densely equipped with smart energy meters, which periodically generate a massive amount of time-series data yielding a few million data points every day. This data can be leveraged to discover the underlying loads, infer their energy consumption patterns, inter-dependencies on environmental factors, and the building's operational properties. Furthermore, it allows us to simultaneously identify anomalies present in the electricity consumption profiles, which is a big step towards saving energy and achieving global sustainability. However, to date, the lack of large-scale annotated energy consumption datasets hinders the ongoing research in anomaly detection. We contribute to this effort by releasing a well-annotated version of the publicly available ASHRAE Great Energy Predictor III dataset containing 1,413 smart electricity meter time series spanning over one year. In addition, we benchmark the performance of eight state-of-the-art anomaly detection methods on our dataset and compare their performance.
