Madi Babaiasl

WheelArm-Sim: A Manipulation and Navigation Combined Multimodal Synthetic Data Generation Simulator for Unified Control in Assistive Robotics

Jan 29, 2026

Abstract: Wheelchairs and robotic arms enhance independent living by assisting individuals with upper-body and mobility limitations in their activities of daily living (ADLs). Although recent advances in assistive robotics have addressed Wheelchair-Mounted Robotic Arms (WMRAs) and wheelchairs separately, integrated and unified control of the two using machine learning models remains largely underexplored. To fill this gap, we introduce the concept of WheelArm, an integrated cyber-physical system (CPS) that combines wheelchair and robotic arm control. Data collection is the first step toward developing WheelArm models. In this paper, we present WheelArm-Sim, a simulation framework developed in Isaac Sim for synthetic data collection. We evaluate its capability by collecting a multimodal dataset that combines manipulation and navigation, comprising 13 tasks, 232 trajectories, and 67,783 samples. To demonstrate the potential of the WheelArm dataset, we implement a baseline model for action prediction in the mustard-picking task. The results indicate that data collected with WheelArm-Sim is suitable for training data-driven machine learning models for integrated control.
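To make "integrated and unified control" concrete, here is a minimal sketch, assuming (hypothetically) that a single model predicts one action vector concatenating a wheelchair base command with arm joint-velocity and gripper commands. The class name, the two-value base command (linear and angular velocity), and the 7-joint arm are illustrative assumptions, not the WheelArm dataset's actual schema.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class WheelArmAction:
    """Hypothetical unified action: one command covering both base and arm."""
    base_linear: float          # wheelchair forward velocity (m/s), assumed
    base_angular: float         # wheelchair yaw rate (rad/s), assumed
    arm_joint_vel: List[float]  # arm joint velocities (rad/s), assumed
    gripper: float              # 0.0 = open, 1.0 = closed, assumed

    def to_vector(self) -> List[float]:
        """Flatten into the single vector a unified policy would predict."""
        return [self.base_linear, self.base_angular, *self.arm_joint_vel, self.gripper]

    @classmethod
    def from_vector(cls, vec: Sequence[float], n_joints: int) -> "WheelArmAction":
        """Split a predicted vector back into base, arm, and gripper commands."""
        expected = 2 + n_joints + 1
        if len(vec) != expected:
            raise ValueError(f"expected {expected} values, got {len(vec)}")
        return cls(
            base_linear=float(vec[0]),
            base_angular=float(vec[1]),
            arm_joint_vel=[float(v) for v in vec[2:2 + n_joints]],
            gripper=float(vec[-1]),
        )


# Usage: with a 7-joint arm, the unified action has 2 + 7 + 1 = 10 dimensions.
action = WheelArmAction(0.2, 0.0, [0.0, 0.1, 0.0, -0.1, 0.0, 0.0, 0.0], 1.0)
vec = action.to_vector()
print(len(vec))  # 10
assert WheelArmAction.from_vector(vec, n_joints=7) == action
```

Predicting base and arm commands as one vector lets a single model coordinate navigation and manipulation, instead of arbitrating between two separately trained controllers.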

A Multimodal Data Collection Framework for Dialogue-Driven Assistive Robotics to Clarify Ambiguities: A Wizard-of-Oz Pilot Study

Jan 23, 2026

Abstract: Integrated control of wheelchairs and wheelchair-mounted robotic arms (WMRAs) has strong potential to increase independence for users with severe motor limitations, yet existing interfaces often lack the flexibility needed for intuitive assistive interaction. Although data-driven AI methods show promise, progress is limited by the lack of multimodal datasets that capture natural Human-Robot Interaction (HRI), particularly conversational ambiguity in dialogue-driven control. To address this gap, we propose a multimodal data collection framework that employs a dialogue-based interaction protocol and a two-room Wizard-of-Oz (WoZ) setup to simulate robot autonomy while eliciting natural user behavior. The framework records five synchronized modalities: RGB-D video, conversational audio, inertial measurement unit (IMU) signals, end-effector Cartesian pose, and whole-body joint states across five assistive tasks. Using this framework, we collected a pilot dataset of 53 trials from five participants and validated its quality through motion smoothness analysis and user feedback. The results show that the framework effectively captures diverse ambiguity types and supports natural dialogue-driven interaction, demonstrating its suitability for scaling to a larger dataset for learning, benchmarking, and evaluation of ambiguity-aware assistive control.
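The abstract does not say which smoothness metric was used. As one common option, the sketch below computes the log dimensionless jerk (LDLJ) of a sampled end-effector trajectory, LDLJ = -ln((T^3 / v_peak^2) * integral of |jerk|^2 dt), where T is the movement duration and v_peak the peak speed. The choice of metric, the forward-difference derivatives, and the function names are assumptions for illustration. Higher (closer to zero) values indicate smoother motion.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def _diff(seq: Sequence[Point], dt: float) -> List[Point]:
    """Forward difference of a 3-D sequence, divided by the sample period."""
    return [tuple((b[k] - a[k]) / dt for k in range(3)) for a, b in zip(seq, seq[1:])]


def log_dimensionless_jerk(positions: Sequence[Point], dt: float) -> float:
    """LDLJ of a sampled trajectory; higher (closer to zero) means smoother."""
    if len(positions) < 4:
        raise ValueError("need at least 4 samples to estimate jerk")
    vel = _diff(positions, dt)
    acc = _diff(vel, dt)
    jerk = _diff(acc, dt)
    duration = (len(positions) - 1) * dt
    peak_speed = max(math.sqrt(sum(c * c for c in v)) for v in vel)
    if peak_speed == 0.0:
        raise ValueError("trajectory does not move")
    # Rectangle-rule approximation of the integral of squared jerk magnitude.
    jerk_sq_integral = sum(sum(c * c for c in j) for j in jerk) * dt
    dimensionless_jerk = duration ** 3 / peak_speed ** 2 * jerk_sq_integral
    return -math.log(dimensionless_jerk)


def min_jerk(tau: float) -> float:
    """Unit-amplitude minimum-jerk profile over normalized time tau in [0, 1]."""
    return 10 * tau ** 3 - 15 * tau ** 4 + 6 * tau ** 5


# Usage: a smooth reach versus the same reach with 1 mm sample-to-sample jitter.
dt = 0.01
smooth = [(min_jerk(i * dt), 0.0, 0.0) for i in range(101)]
jittery = [(x + 0.001 * (-1) ** i, y, z) for i, (x, y, z) in enumerate(smooth)]
print(log_dimensionless_jerk(smooth, dt) > log_dimensionless_jerk(jittery, dt))  # True
```

Because the measure is dimensionless, trials of different durations and amplitudes can be compared directly, which is convenient when pooling data across participants and tasks.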

Enhanced Robot Arm at the Edge with NLP and Vision Systems

May 27, 2024

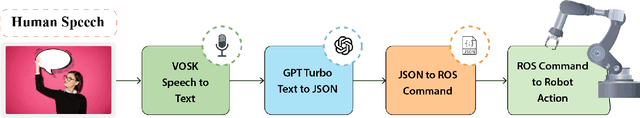

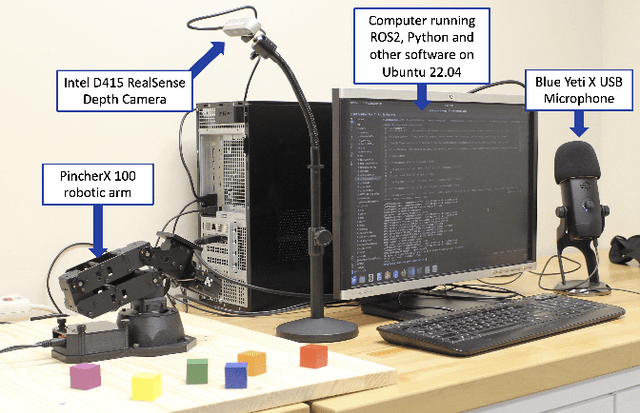

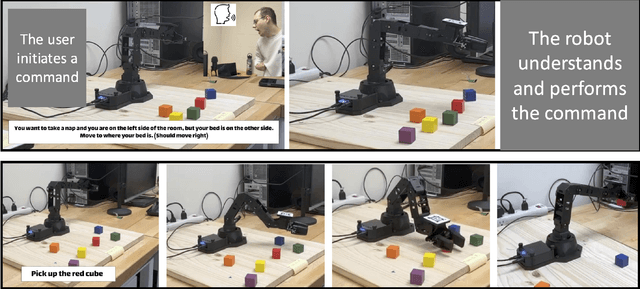

Abstract: This paper introduces a "proof of concept" for a new approach to assistive robotics, integrating edge computing with Natural Language Processing (NLP) and computer vision to enhance the interaction between humans and robotic systems. Our "proof of concept" demonstrates the feasibility of using large language models (LLMs) and vision systems in tandem to interpret and execute complex commands conveyed through natural language. This integration aims to improve the intuitiveness and accessibility of assistive robotic systems, making them more adaptable to the nuanced needs of users with disabilities. By leveraging edge computing, our system has the potential to minimize latency and support offline operation, enhancing the autonomy and responsiveness of assistive robots. Experimental results from our implementation on a robotic arm show promising outcomes in terms of accurate intent interpretation and object manipulation based on verbal commands. This research lays the groundwork for future developments in assistive robotics, focusing on highly responsive, user-centric systems that can significantly improve the quality of life for individuals with disabilities.
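The pipeline described above (spoken command, LLM interpretation, vision-based object grounding, arm execution) can be sketched as follows. This is a minimal illustration, assuming the LLM is prompted to reply with a small JSON object carrying hypothetical `action` and `object` fields, and that the vision system returns labeled detections with 3-D positions. Neither the schema nor the function names come from the paper.

```python
import json
from dataclasses import dataclass
from typing import List, Optional, Tuple

SUPPORTED_ACTIONS = {"pick", "place", "push"}  # hypothetical action vocabulary


@dataclass
class Detection:
    label: str
    position: Tuple[float, float, float]  # object position in the robot base frame (m), assumed
    score: float                          # detector confidence in [0, 1]


@dataclass
class Intent:
    action: str
    target: str


def parse_intent(llm_output: str) -> Optional[Intent]:
    """Parse the LLM's JSON reply; return None if malformed or unsupported."""
    try:
        data = json.loads(llm_output)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    action = str(data.get("action", "")).lower()
    target = str(data.get("object", "")).lower()
    if action not in SUPPORTED_ACTIONS or not target:
        return None
    return Intent(action, target)


def ground_intent(intent: Intent, detections: List[Detection],
                  min_score: float = 0.5) -> Optional[Detection]:
    """Return the most confident detection whose label matches the requested object."""
    matches = [d for d in detections
               if d.label.lower() == intent.target and d.score >= min_score]
    return max(matches, key=lambda d: d.score, default=None)


# Usage with a stubbed LLM reply and stubbed detections.
reply = '{"action": "pick", "object": "cup"}'
scene = [
    Detection("bottle", (0.40, 0.10, 0.05), 0.91),
    Detection("cup", (0.35, -0.12, 0.04), 0.88),
]
intent = parse_intent(reply)
target = ground_intent(intent, scene) if intent else None
print(intent, target.position if target else None)
```

Returning `None` instead of guessing gives the system a natural point to ask the user to rephrase, rather than acting on a misinterpreted command.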

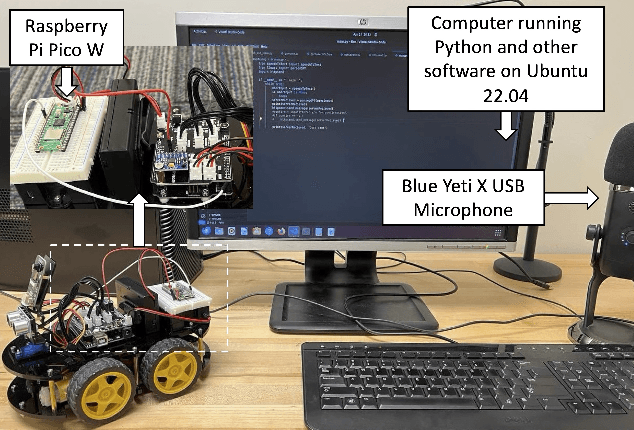

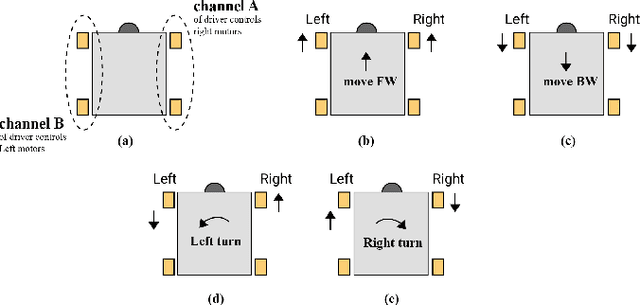

Deployment of NLP and LLM Techniques to Control Mobile Robots at the Edge: A Case Study Using GPT-4-Turbo and LLaMA 2

May 27, 2024

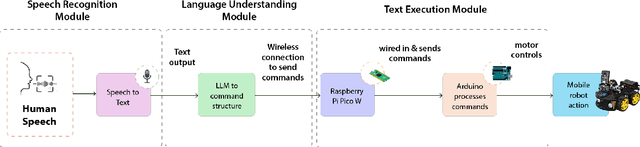

Abstract: This paper investigates the possibility of intuitive human-robot interaction through the application of Natural Language Processing (NLP) and Large Language Models (LLMs) in mobile robotics. We aim to explore the feasibility of using these technologies for edge-based deployment, where traditional cloud dependencies are eliminated. The study specifically contrasts the performance of GPT-4-Turbo, which requires cloud connectivity, with an offline-capable, quantized version of LLaMA 2 (LLaMA 2-7B.Q5_K_M). Our results show that GPT-4-Turbo delivers superior performance in interpreting and executing complex commands accurately, whereas LLaMA 2 exhibits significant limitations in the consistency and reliability of command execution. Communication between the control computer and the mobile robot is established via a Raspberry Pi Pico W, which wirelessly receives commands from the computer without requiring an internet connection and transmits them over a wired connection to the robot's Arduino controller. This study highlights the potential and challenges of implementing LLMs and NLP at the edge, providing groundwork for future research into fully autonomous and network-independent robotic systems. For video demonstrations and source code, please refer to: https://tinyurl.com/RobocupSym2024.
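The relay described above (computer to Pico W over a wireless link, then Pico W to Arduino over a wired link) needs an agreed command format. The sketch below assumes, hypothetically, a fixed vocabulary of drive commands sent as newline-terminated ASCII lines with an optional duration in milliseconds; the vocabulary, framing, and function names are illustrative and are not taken from the paper's source code. Checking the LLM's reply against a whitelist before transmission is one way to keep inconsistent model output from reaching the robot.

```python
import re
from typing import List, Optional

# Hypothetical command vocabulary understood by the robot's Arduino firmware.
ALLOWED_COMMANDS = {"FORWARD", "BACKWARD", "LEFT", "RIGHT", "STOP"}
# One command per token, optionally followed by a duration in ms, e.g. "FORWARD:1500".
TOKEN_RE = re.compile(r"^([A-Z]+)(?::(\d{1,5}))?$")


def validate_plan(llm_text: str) -> Optional[List[str]]:
    """Turn an LLM reply like 'forward:1500, left:400, stop' into checked tokens.

    Returns None if the reply is empty or any token is outside the whitelist,
    so that nothing is sent to the robot.
    """
    tokens = [t.strip().upper() for t in llm_text.split(",") if t.strip()]
    if not tokens:
        return None
    plan = []
    for token in tokens:
        match = TOKEN_RE.match(token)
        if match is None or match.group(1) not in ALLOWED_COMMANDS:
            return None
        plan.append(token)
    return plan


def encode_frames(plan: List[str]) -> bytes:
    """Encode commands as newline-terminated ASCII lines for the wireless link."""
    return "".join(f"{cmd}\n" for cmd in plan).encode("ascii")


# Usage: only a fully valid plan is encoded for transmission.
plan = validate_plan("forward:1500, left:400, stop")
print(encode_frames(plan) if plan else "rejected")
print(validate_plan("forward, jump"))  # None
```

Line-based framing keeps the receiving side simple: the relay can read one line at a time and forward it unchanged over the wired link. The Pico W and Arduino firmware are not shown here.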
