Madhavun Candadai Vasu

Information Bottleneck in Control Tasks with Recurrent Spiking Neural Networks

Jun 06, 2017

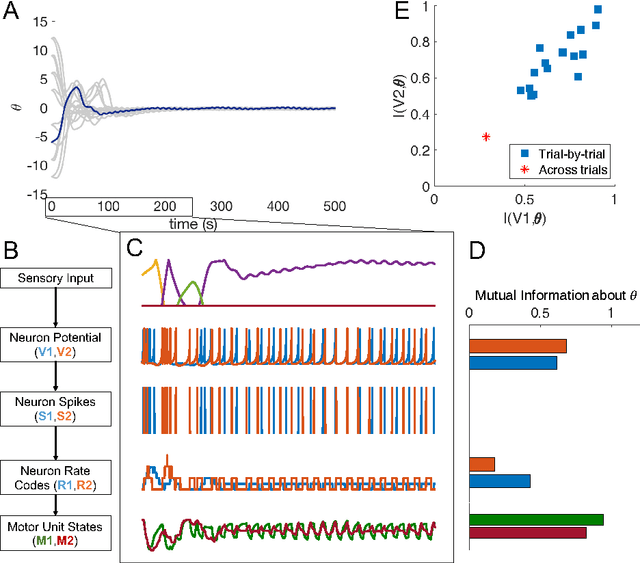

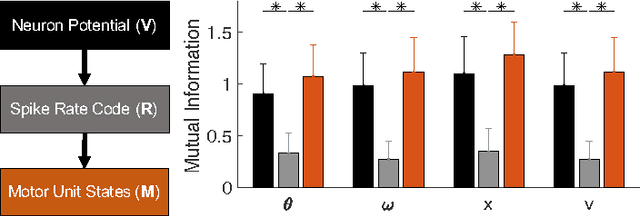

Abstract: The nervous system encodes continuous information from the environment in the form of discrete spikes, and then decodes these to produce smooth motor actions. Understanding how spikes integrate, represent, and process information to produce behavior is one of the greatest challenges in neuroscience. Information theory has the potential to help us address this challenge. Informational analyses of deep and feed-forward artificial neural networks solving static input-output tasks have led to the proposal of the Information Bottleneck principle, which states that deeper layers encode more relevant yet minimal information about the inputs. Such analyses of networks that are recurrent, spiking, and perform control tasks remain relatively unexplored. Here, we present results from a Mutual Information analysis of a recurrent spiking neural network that was evolved to perform the classic pole-balancing task. Our results show that these networks deviate from the Information Bottleneck principle prescribed for feed-forward networks.

* Accepted at ICANN'17; to appear in Springer Lecture Notes in Computer Science
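
The Mutual Information analysis named in the abstract above can be illustrated with a simple plug-in estimator over discrete samples. This is only a minimal sketch: the function name `mutual_information`, the assumption that signals have already been binned into discrete symbols, and the toy data are illustrative choices, not the estimator or data used in the paper.

```python
import math
from collections import Counter


def mutual_information(xs, ys):
    """Plug-in estimate of I(X;Y) in bits from paired discrete samples.

    Assumes xs and ys are equal-length sequences of hashable symbols
    (e.g. binned sensor values and binned neuron outputs).
    """
    if len(xs) != len(ys):
        raise ValueError("xs and ys must have the same length")
    n = len(xs)
    joint = Counter(zip(xs, ys))  # counts of (x, y) pairs
    px = Counter(xs)              # marginal counts of x
    py = Counter(ys)              # marginal counts of y
    mi = 0.0
    for (x, y), c in joint.items():
        # p(x,y) * log2( p(x,y) / (p(x) * p(y)) ), written with raw counts
        mi += (c / n) * math.log2(c * n / (px[x] * py[y]))
    return mi


# Toy data (hypothetical): a perfectly informative pairing and an independent one.
identical = mutual_information([0, 1, 0, 1], [0, 1, 0, 1])
independent = mutual_information([0, 0, 1, 1], [0, 1, 0, 1])
```

Plug-in estimates of this kind are biased for small sample sizes, so in practice the bin count and the amount of data need to be chosen with care.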

Evolution and Analysis of Embodied Spiking Neural Networks Reveals Task-Specific Clusters of Effective Networks

Apr 19, 2017

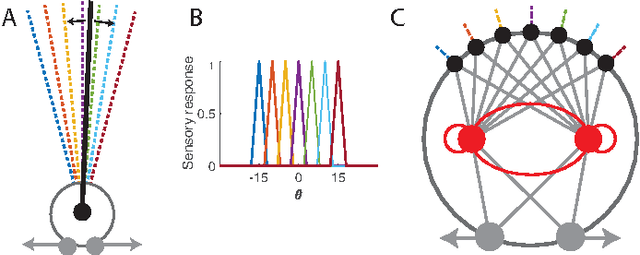

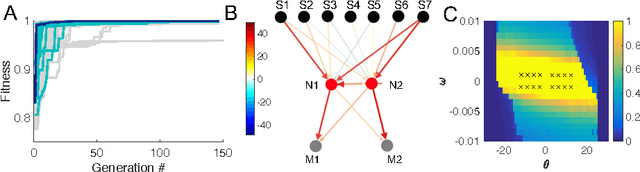

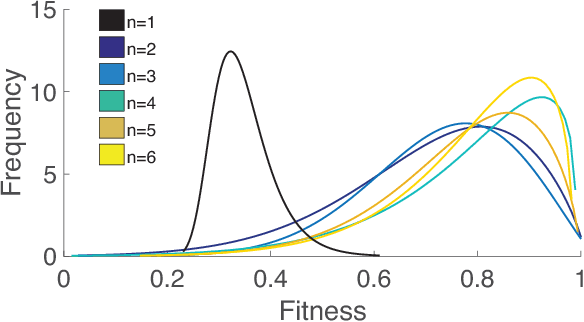

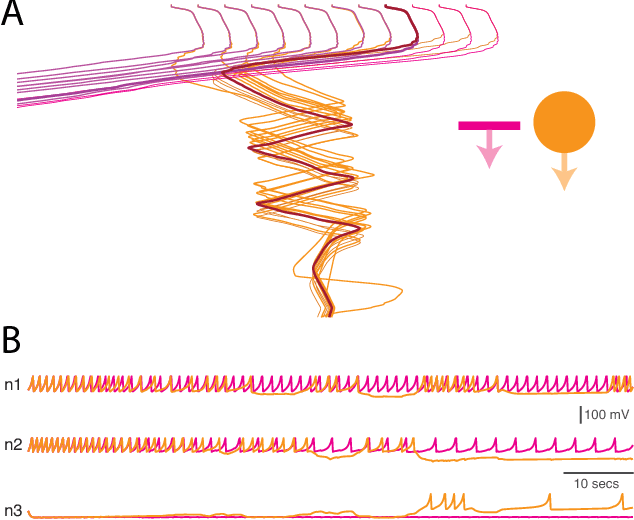

Abstract: Elucidating the principles that underlie computation in neural networks is currently a major research topic in neuroscience. Transfer Entropy (TE) is increasingly used as a tool to bridge the gap between network structure, function, and behavior in fMRI studies. Computational models allow us to bridge the gap even further by directly associating individual neuron activity with behavior. However, most computational models that have analyzed embodied behaviors have employed non-spiking neurons. On the other hand, computational models that employ spiking neural networks tend to be restricted to disembodied tasks. We show for the first time the artificial evolution and TE analysis of embodied spiking neural networks that perform a cognitively interesting behavior. Specifically, we evolved an agent controlled by an Izhikevich neural network to perform a visual categorization task. The smallest networks capable of performing the task were found by repeating evolutionary runs with different network sizes. Informational analysis of the best solution revealed task-specific TE-network clusters, suggesting that within-task homogeneity and across-task heterogeneity were key to behavioral success. Moreover, analysis of the ensemble of solutions revealed that task-specificity of TE-network clusters correlated with fitness. This provides an empirically testable hypothesis that links network structure to behavior.
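
As a rough illustration of the Transfer Entropy measure named in the abstract above, the sketch below estimates TE from a source series to a target series using a history length of one step and plain frequency counts. The function name `transfer_entropy`, the history length, and the toy binary series are assumptions made for illustration; the abstract does not specify the estimator or the parameters used in the paper.

```python
import math
from collections import Counter


def transfer_entropy(source, target):
    """Plug-in estimate of TE(source -> target) in bits, history length 1.

    TE = sum over (x1, x0, y0) of
         p(x1, x0, y0) * log2( p(x1 | x0, y0) / p(x1 | x0) )
    where x1 is the next target symbol, x0 the current target symbol,
    and y0 the current source symbol.
    """
    if len(source) != len(target):
        raise ValueError("source and target must have the same length")
    n = len(target) - 1  # number of observed transitions
    if n < 1:
        raise ValueError("need at least two time steps")
    x1, x0, y0 = target[1:], target[:-1], source[:-1]
    triples = Counter(zip(x1, x0, y0))
    pairs_xy = Counter(zip(x0, y0))
    pairs_xx = Counter(zip(x1, x0))
    singles = Counter(x0)
    te = 0.0
    for (a, b, c), count in triples.items():
        p_full = count / pairs_xy[(b, c)]        # p(x1 | x0, y0)
        p_self = pairs_xx[(a, b)] / singles[b]   # p(x1 | x0)
        te += (count / n) * math.log2(p_full / p_self)
    return te


# Toy data (hypothetical): the target copies the source with a one-step lag.
src = [0, 1, 1, 0, 1, 0, 0, 1]
tgt = [0] + src[:-1]
te_lagged_copy = transfer_entropy(src, tgt)
te_constant = transfer_entropy(src, [0] * len(src))
```

Here the lagged copy yields a positive estimate because the source fully determines the next target symbol, while a constant target yields zero since there is nothing left to predict.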
