
Lorenz Braun

A Simple Model for Portable and Fast Prediction of Execution Time and Power Consumption of GPU Kernels

Jan 20, 2020

Figures and Tables:

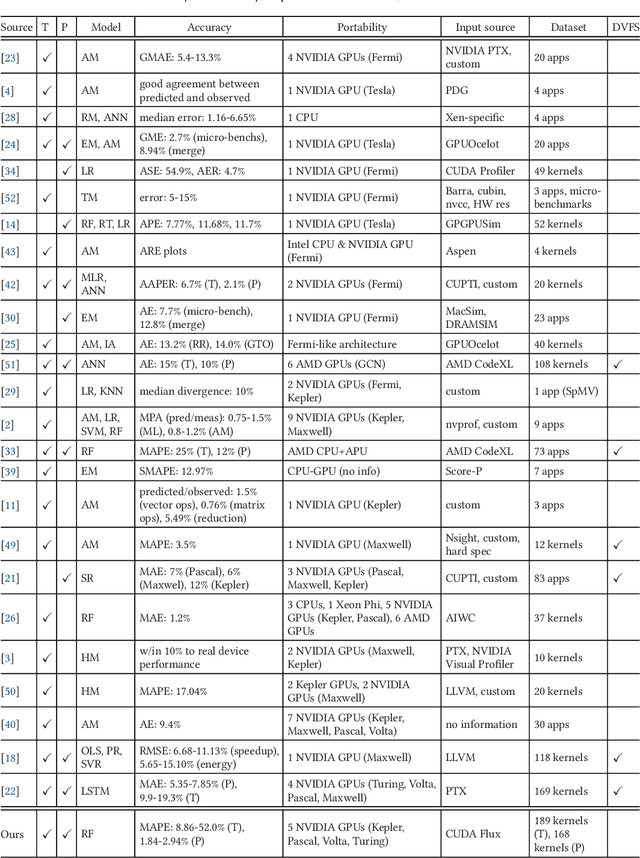

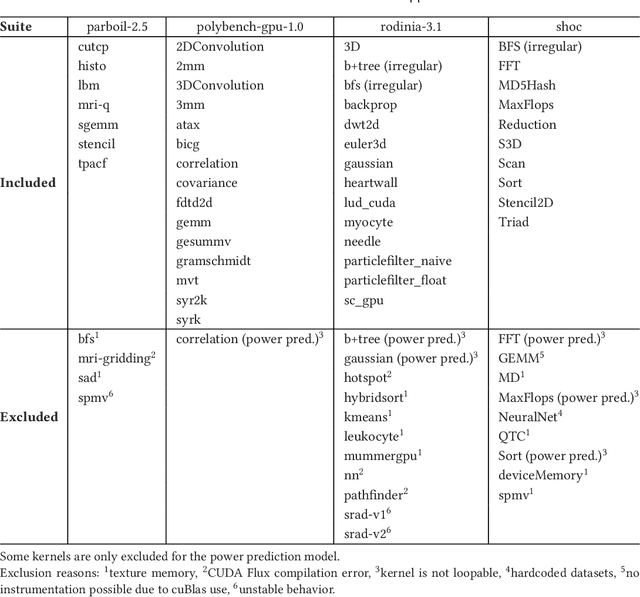

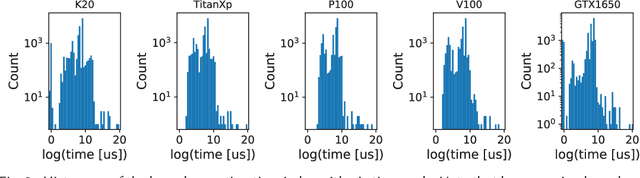

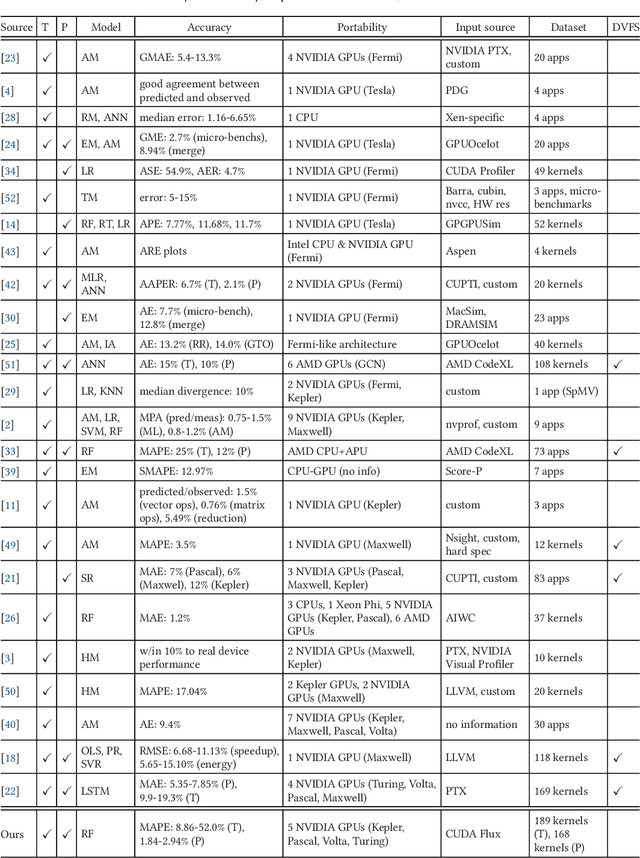

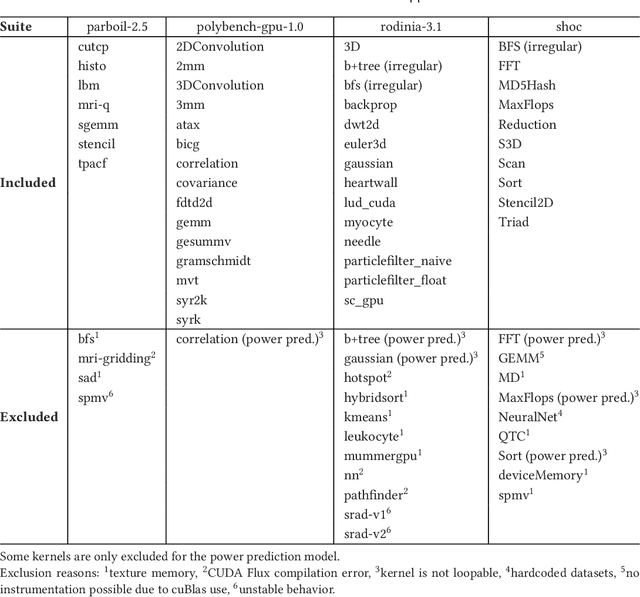

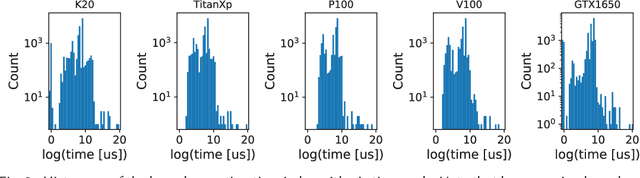

Abstract: Characterizing the execution behavior of compute kernels on GPUs for efficient task scheduling is a nontrivial task. We address this with a simple model that enables portable and fast predictions across different GPUs using only hardware-independent features. The model is built on random forests and trained on 189 individual compute kernels from benchmarks such as Parboil, Rodinia, Polybench-GPU and SHOC. Evaluating the model with cross-validation on five different GPUs yields a median Mean Average Percentage Error (MAPE) in the range [13.45%, 44.56%] for time prediction and [1.81%, 2.91%] for power prediction, while the latency of a single prediction varies between 0.1 and 0.2 seconds.
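To make the reported metric concrete, the following is a minimal sketch of how a median MAPE over cross-validation folds could be computed. It assumes MAPE follows the usual mean-absolute-percentage-error definition (the abstract does not spell out the formula), and the names `mape` and `median_mape` as well as the fold layout are hypothetical, not taken from the paper.

```python
from statistics import median


def mape(actual, predicted):
    """Mean of |actual - predicted| / |actual|, in percent.

    Assumption: the standard mean-absolute-percentage-error definition.
    """
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)


def median_mape(folds):
    """Median of the per-fold MAPE values.

    `folds` is a hypothetical layout: an iterable of (actual, predicted)
    pairs, one pair of lists per cross-validation fold.
    """
    return median(mape(actual, predicted) for actual, predicted in folds)
```

Taking the median across folds rather than the mean keeps a single badly predicted fold from dominating the summary figure.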
