Lizy Abraham

Building Trust in AI-Driven Decision Making for Cyber-Physical Systems : A Comprehensive Review

May 10, 2024Abstract:Recent advancements in technology have led to the emergence of Cyber-Physical Systems (CPS), which seamlessly integrate the cyber and physical domains in various sectors such as agriculture, autonomous systems, and healthcare. This integration presents opportunities for enhanced efficiency and automation through the utilization of artificial intelligence (AI) and machine learning (ML). However, the complexity of CPS brings forth challenges related to transparency, bias, and trust in AI-enabled decision-making processes. This research explores the significance of AI and ML in enabling CPS in these domains and addresses the challenges associated with interpreting and trusting AI systems within CPS. Specifically, the role of explainable AI (XAI) in enhancing trustworthiness and reliability in AI-enabled decision-making processes is discussed. Key challenges such as transparency, security, and privacy are identified, along with the necessity of building trust through transparency, accountability, and ethical considerations.

Energy-Efficient Uncertainty-Aware Biomass Composition Prediction at the Edge

Apr 17, 2024

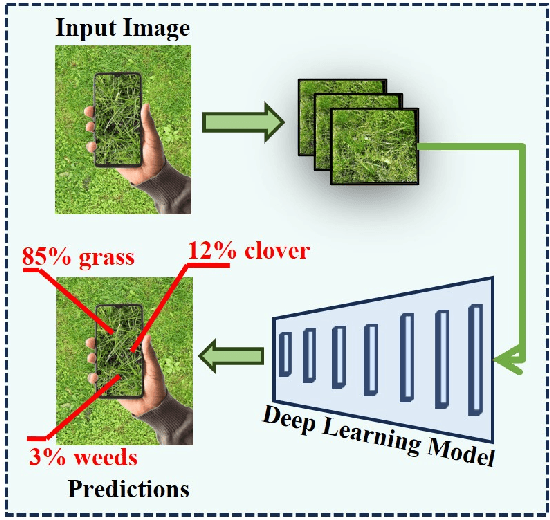

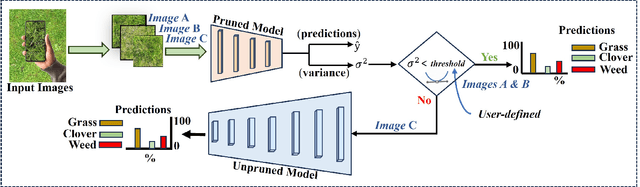

Abstract:Clover fixates nitrogen from the atmosphere to the ground, making grass-clover mixtures highly desirable to reduce external nitrogen fertilization. Herbage containing clover additionally promotes higher food intake, resulting in higher milk production. Herbage probing however remains largely unused as it requires a time-intensive manual laboratory analysis. Without this information, farmers are unable to perform localized clover sowing or take targeted fertilization decisions. Deep learning algorithms have been proposed with the goal to estimate the dry biomass composition from images of the grass directly in the fields. The energy-intensive nature of deep learning however limits deployment to practical edge devices such as smartphones. This paper proposes to fill this gap by applying filter pruning to reduce the energy requirement of existing deep learning solutions. We report that although pruned networks are accurate on controlled, high-quality images of the grass, they struggle to generalize to real-world smartphone images that are blurry or taken from challenging angles. We address this challenge by training filter-pruned models using a variance attenuation loss so they can predict the uncertainty of their predictions. When the uncertainty exceeds a threshold, we re-infer using a more accurate unpruned model. This hybrid approach allows us to reduce energy consumption while retaining a high accuracy. We evaluate our algorithm on two datasets: the GrassClover and the Irish clover using an NVIDIA Jetson Nano edge device. We find that we reduce energy reduction with respect to state-of-the-art solutions by 50% on average with only 4% accuracy loss.

Complexity-Driven CNN Compression for Resource-constrained Edge AI

Aug 26, 2022

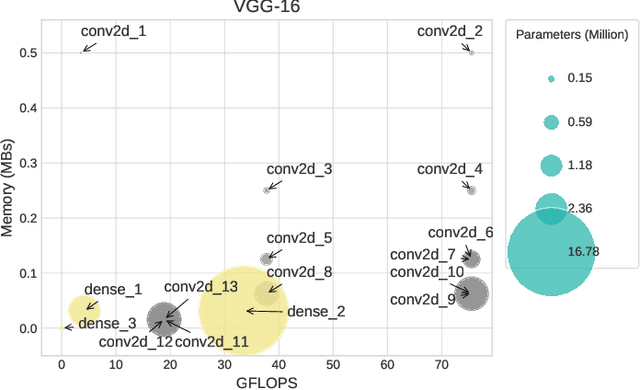

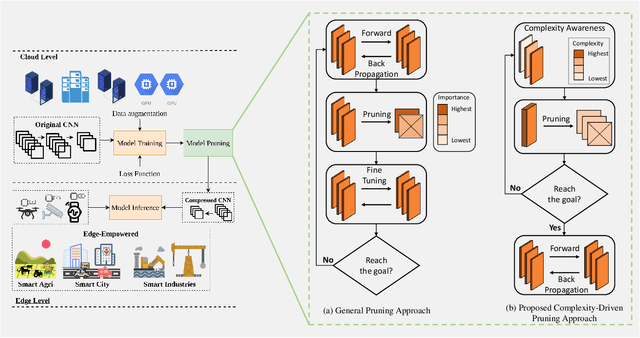

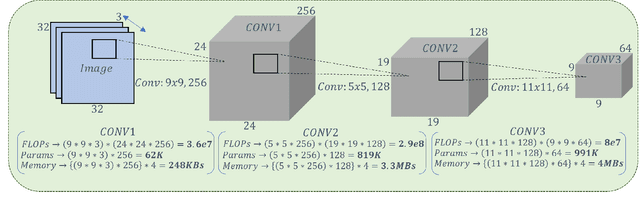

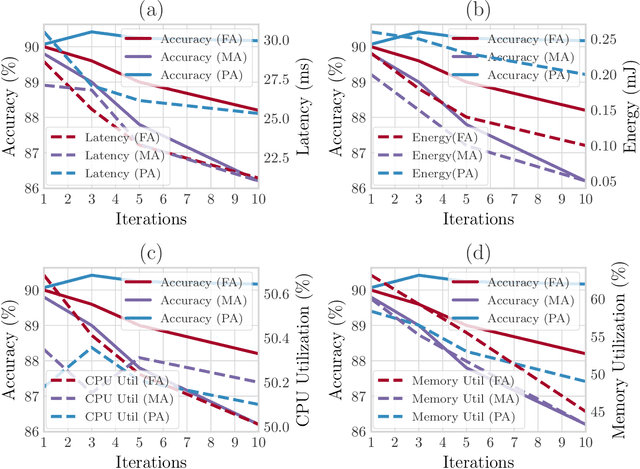

Abstract:Recent advances in Artificial Intelligence (AI) on the Internet of Things (IoT)-enabled network edge has realized edge intelligence in several applications such as smart agriculture, smart hospitals, and smart factories by enabling low-latency and computational efficiency. However, deploying state-of-the-art Convolutional Neural Networks (CNNs) such as VGG-16 and ResNets on resource-constrained edge devices is practically infeasible due to their large number of parameters and floating-point operations (FLOPs). Thus, the concept of network pruning as a type of model compression is gaining attention for accelerating CNNs on low-power devices. State-of-the-art pruning approaches, either structured or unstructured do not consider the different underlying nature of complexities being exhibited by convolutional layers and follow a training-pruning-retraining pipeline, which results in additional computational overhead. In this work, we propose a novel and computationally efficient pruning pipeline by exploiting the inherent layer-level complexities of CNNs. Unlike typical methods, our proposed complexity-driven algorithm selects a particular layer for filter-pruning based on its contribution to overall network complexity. We follow a procedure that directly trains the pruned model and avoids the computationally complex ranking and fine-tuning steps. Moreover, we define three modes of pruning, namely parameter-aware (PA), FLOPs-aware (FA), and memory-aware (MA), to introduce versatile compression of CNNs. Our results show the competitive performance of our approach in terms of accuracy and acceleration. Lastly, we present a trade-off between different resources and accuracy which can be helpful for developers in making the right decisions in resource-constrained IoT environments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge