Liying Xiao

Deep Data Consistency: a Fast and Robust Diffusion Model-based Solver for Inverse Problems

May 17, 2024

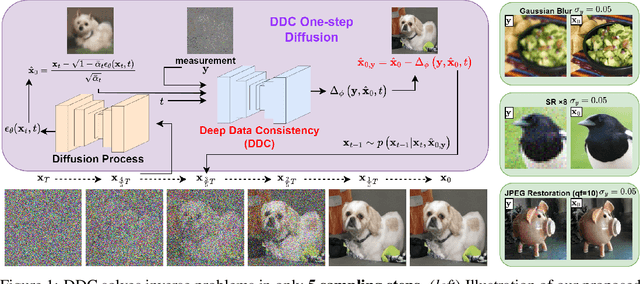

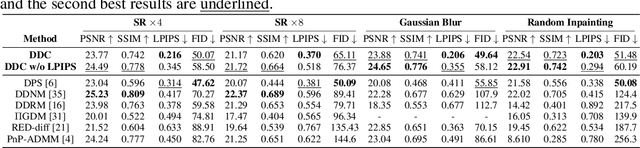

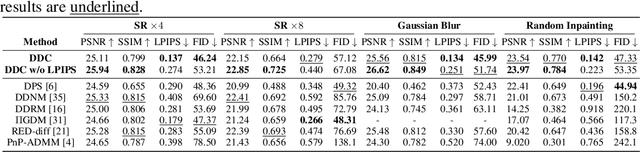

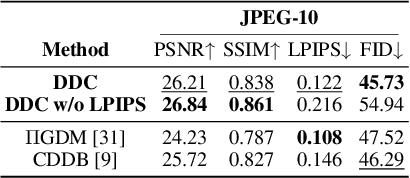

Abstract:Diffusion models have become a successful approach for solving various image inverse problems by providing a powerful diffusion prior. Many studies tried to combine the measurement into diffusion by score function replacement, matrix decomposition, or optimization algorithms, but it is hard to balance the data consistency and realness. The slow sampling speed is also a main obstacle to its wide application. To address the challenges, we propose Deep Data Consistency (DDC) to update the data consistency step with a deep learning model when solving inverse problems with diffusion models. By analyzing existing methods, the variational bound training objective is used to maximize the conditional posterior and reduce its impact on the diffusion process. In comparison with state-of-the-art methods in linear and non-linear tasks, DDC demonstrates its outstanding performance of both similarity and realness metrics in generating high-quality solutions with only 5 inference steps in 0.77 seconds on average. In addition, the robustness of DDC is well illustrated in the experiments across datasets, with large noise and the capacity to solve multiple tasks in only one pre-trained model.

Mitigating Data Consistency Induced Discrepancy in Cascaded Diffusion Models for Sparse-view CT Reconstruction

Mar 14, 2024

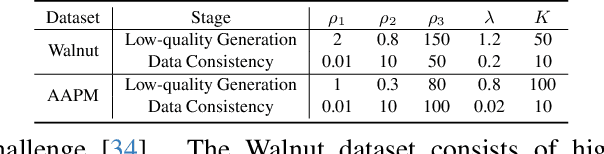

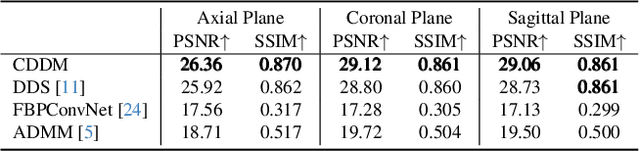

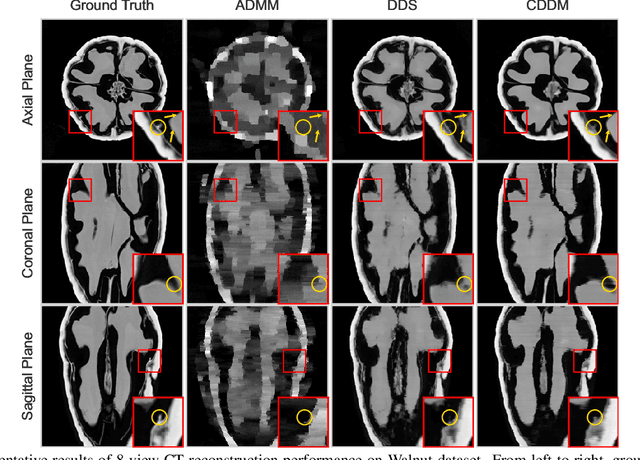

Abstract:Sparse-view Computed Tomography (CT) image reconstruction is a promising approach to reduce radiation exposure, but it inevitably leads to image degradation. Although diffusion model-based approaches are computationally expensive and suffer from the training-sampling discrepancy, they provide a potential solution to the problem. This study introduces a novel Cascaded Diffusion with Discrepancy Mitigation (CDDM) framework, including the low-quality image generation in latent space and the high-quality image generation in pixel space which contains data consistency and discrepancy mitigation in a one-step reconstruction process. The cascaded framework minimizes computational costs by moving some inference steps from pixel space to latent space. The discrepancy mitigation technique addresses the training-sampling gap induced by data consistency, ensuring the data distribution is close to the original manifold. A specialized Alternating Direction Method of Multipliers (ADMM) is employed to process image gradients in separate directions, offering a more targeted approach to regularization. Experimental results across two datasets demonstrate CDDM's superior performance in high-quality image generation with clearer boundaries compared to existing methods, highlighting the framework's computational efficiency.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge