Liben Chen

Automatic Detection of Alzheimer's Disease with Multi-Modal Fusion of Clinical MRI Scans

Nov 30, 2023

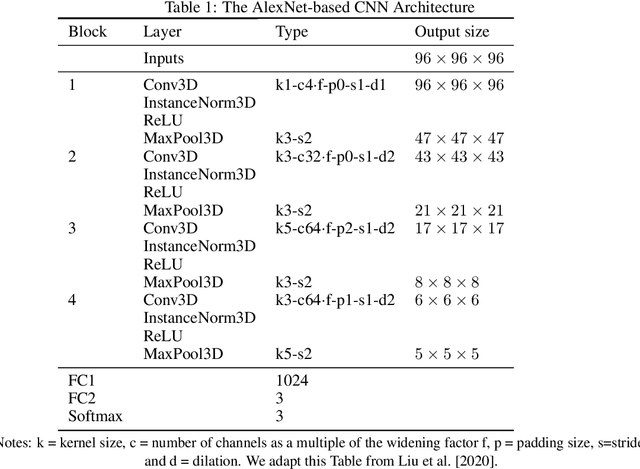

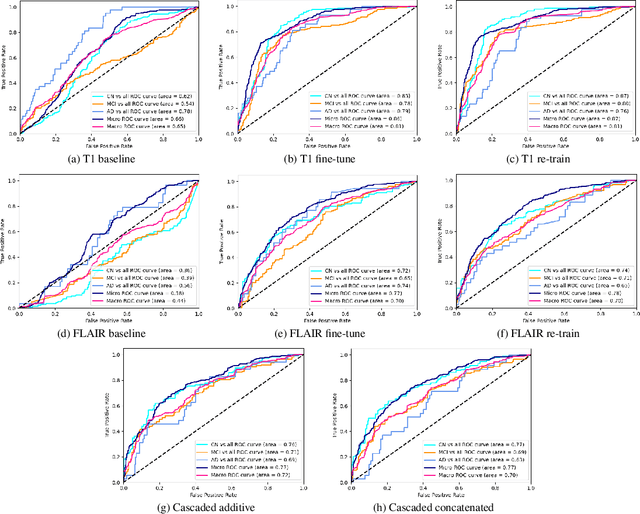

Abstract:The aging population of the U.S. drives the prevalence of Alzheimer's disease. Brookmeyer et al. forecasts approximately 15 million Americans will have either clinical AD or mild cognitive impairment by 2060. In response to this urgent call, methods for early detection of Alzheimer's disease have been developed for prevention and pre-treatment. Notably, literature on the application of deep learning in the automatic detection of the disease has been proliferating. This study builds upon previous literature and maintains a focus on leveraging multi-modal information to enhance automatic detection. We aim to predict the stage of the disease - Cognitively Normal (CN), Mildly Cognitive Impairment (MCI), and Alzheimer's Disease (AD), based on two different types of brain MRI scans. We design an AlexNet-based deep learning model that learns the synergy of complementary information from both T1 and FLAIR MRI scans.

On the Cognition of Visual Question Answering Models and Human Intelligence: A Comparative Study

Oct 04, 2023

Abstract:Visual Question Answering (VQA) is a challenging task that requires cross-modal understanding and reasoning of visual image and natural language question. To inspect the association of VQA models to human cognition, we designed a survey to record human thinking process and analyzed VQA models by comparing the outputs and attention maps with those of humans. We found that although the VQA models resemble human cognition in architecture and performs similarly with human on the recognition-level, they still struggle with cognitive inferences. The analysis of human thinking procedure serves to direct future research and introduce more cognitive capacity into modeling features and architectures.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge