Langqing Shi

Unsupervised Learning of High-resolution Light Field Imaging via Beam Splitter-based Hybrid Lenses

Feb 29, 2024

Abstract: In this paper, we design a beam splitter-based hybrid light field imaging prototype that records a 4D light field image and a high-resolution 2D image simultaneously, and we use it to build a hybrid light field dataset. The 2D image can be regarded as the high-resolution ground truth corresponding to the low-resolution central sub-aperture image of the 4D light field image. Subsequently, we propose an unsupervised learning-based super-resolution framework trained on the hybrid light field dataset, which adaptively solves the light field spatial super-resolution problem under a complex degradation model. Specifically, we design two loss functions based on pre-trained models that enable the super-resolution network to learn detailed features and the light field parallax structure with only one ground truth image. Extensive experiments demonstrate that our approach performs on par with supervised learning-based state-of-the-art methods. To our knowledge, it is the first end-to-end unsupervised learning-based spatial super-resolution approach in light field imaging research, and its input is available from our beam splitter-based hybrid light field system. Together, the hardware and software may greatly help promote the application of light field super-resolution.

Learning based Deep Disentangling Light Field Reconstruction and Disparity Estimation Application

Nov 14, 2023

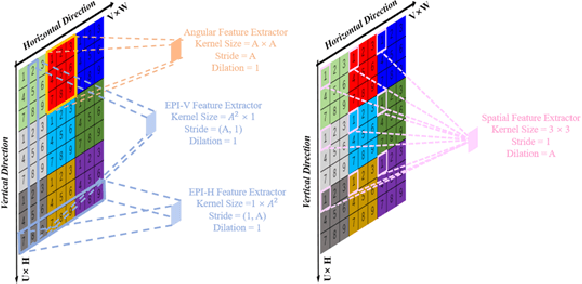

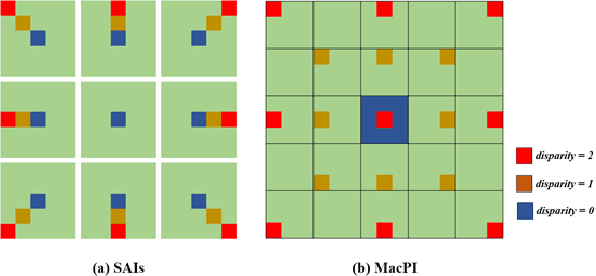

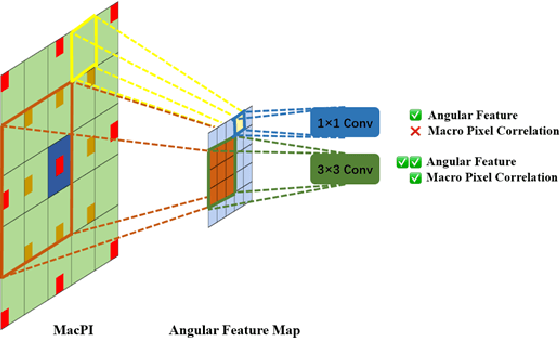

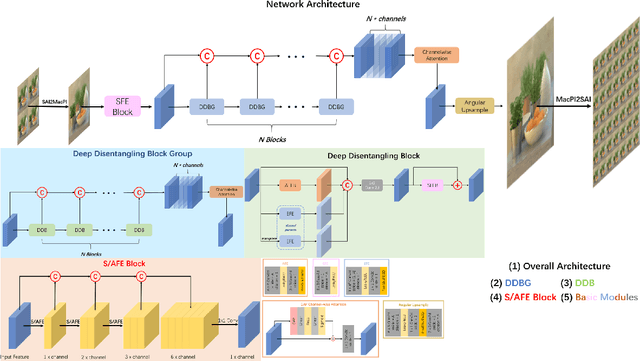

Abstract: Light field cameras have a wide range of uses due to their ability to simultaneously record light intensity and direction. The angular resolution of light fields is important for downstream tasks such as depth estimation, yet it is often difficult to improve due to hardware limitations. Conventional methods tend to perform poorly on sparse light fields with large disparity, while general CNNs have difficulty extracting the spatial and angular features that are coupled together in 4D light fields. The light field disentangling mechanism transforms the 4D light field into a 2D image format, which is more favorable for CNN feature extraction. In this paper, we propose a Deep Disentangling Mechanism, which inherits the principle of the light field disentangling mechanism, further develops the design of the feature extractor, and adds an advanced network structure. We design a light field reconstruction network (i.e., DDASR) on the basis of the Deep Disentangling Mechanism and achieve SOTA performance in the experiments. In addition, we design a Block Traversal Angular Super-Resolution Strategy for the practical application of depth estimation enhancement, where the number of input views is often larger than the 2x2 used in the experiments, resulting in high memory usage. The strategy reduces memory usage while achieving better reconstruction performance.
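
The abstract does not spell out which 2D layout the disentangling mechanism uses. As an illustration only, the sketch below assumes a macro-pixel arrangement, in which all angular samples of one spatial location are grouped into a U x V block of the 2D image. The function name `to_macro_pixel_image` and the index order `lf[u][v][h][w]` are hypothetical choices made for this example, not taken from the paper.

```python
def to_macro_pixel_image(lf):
    """Rearrange a 4D light field lf[u][v][h][w] into a 2D macro-pixel image.

    Assumed layout (illustrative, not from the paper): output pixel
    (h * U + u, w * V + v) holds lf[u][v][h][w], so each U x V block of
    the output collects every angular sample of one spatial location.
    """
    U, V = len(lf), len(lf[0])
    H, W = len(lf[0][0]), len(lf[0][0][0])
    out = [[0] * (W * V) for _ in range(H * U)]
    for u in range(U):
        for v in range(V):
            for h in range(H):
                for w in range(W):
                    out[h * U + u][w * V + v] = lf[u][v][h][w]
    return out


# Toy light field: 2x2 views of 2x3 pixels; each value encodes (u, v, h, w).
lf = [[[[1000 * u + 100 * v + 10 * h + w for w in range(3)]
        for h in range(2)]
       for v in range(2)]
      for u in range(2)]
macpi = to_macro_pixel_image(lf)
```

In this layout, a 2D convolution with stride equal to the angular size sees one whole macro-pixel per step, which is one way a standard CNN can address angular and spatial information separately.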
