Kyungryun Lee

Reference-free Axial Super-resolution of 3D Microscopy Images using Implicit Neural Representation with a 2D Diffusion Prior

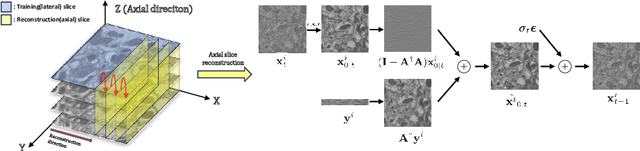

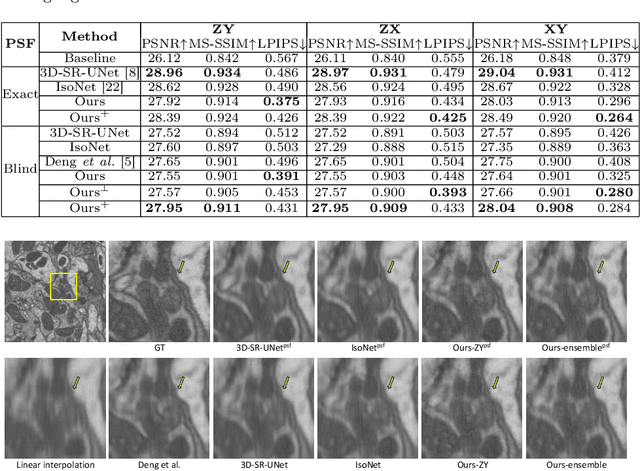

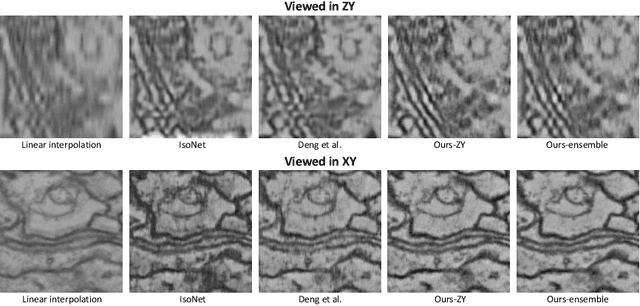

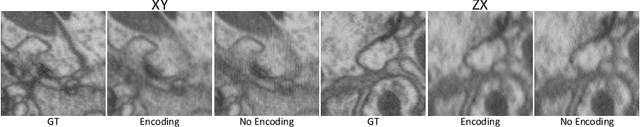

Aug 16, 2024

Abstract: Analysis and visualization of 3D microscopy images pose challenges due to anisotropic axial resolution, demanding volumetric super-resolution along the axial direction. While training a learning-based 3D super-resolution model seems to be a straightforward solution, it requires ground truth isotropic volumes and suffers from the curse of dimensionality. Therefore, existing methods utilize 2D neural networks to reconstruct each axial slice, eventually piecing together the entire volume. However, reconstructing each slice in the pixel domain fails to give consistent reconstruction in all directions, leading to misalignment artifacts. In this work, we present a reconstruction framework based on implicit neural representation (INR), which allows 3D coherency even when optimized by independent axial slices in a batch-wise manner. Our method optimizes a continuous volumetric representation from low-resolution axial slices, using a 2D diffusion prior trained on high-resolution lateral slices without requiring isotropic volumes. Through experiments on real and synthetic anisotropic microscopy images, we demonstrate that our method surpasses other state-of-the-art reconstruction methods. The source code is available on GitHub: https://github.com/hvcl/INR-diffusion.
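To make the distinction between lateral and axial slices concrete, here is a minimal NumPy sketch. It is not taken from the paper's code: the `(z, y, x)` axis order, the subsampling factor, and the use of plain slice-dropping as the degradation are all assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical isotropic volume, axes ordered (z, y, x). Random values stand in for real data.
rng = np.random.default_rng(0)
iso = rng.random((32, 32, 32))

# Assumed degradation: keep every 4th slice along z, so axial sampling is 4x coarser.
factor = 4
aniso = iso[::factor]            # shape (8, 32, 32)

# A lateral (xy) slice keeps full resolution in both of its dimensions.
lateral = aniso[0]               # shape (32, 32)

# An axial (xz) slice is low-resolution along z; this is the kind of slice
# an axial super-resolution method has to restore.
axial = aniso[:, 0, :]           # shape (8, 32)
```

Under these assumptions the lateral slices are the only high-resolution 2D examples available, which is why a 2D prior can be learned from them without an isotropic reference volume.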

Reference-Free Isotropic 3D EM Reconstruction using Diffusion Models

Aug 03, 2023

Abstract: Electron microscopy (EM) images exhibit anisotropic axial resolution due to the characteristics inherent to the imaging modality, presenting challenges in analysis and downstream tasks. In this paper, we propose a diffusion-model-based framework that overcomes the limitations of requiring reference data or prior knowledge about the degradation process. Our approach utilizes 2D diffusion models to consistently reconstruct 3D volumes and is well-suited for highly downsampled data. Extensive experiments conducted on two public datasets demonstrate the robustness and superiority of leveraging the generative prior compared to supervised learning methods. Additionally, we demonstrate our method's feasibility for self-supervised reconstruction, which can restore a single anisotropic volume without any training data.

I2V: Towards Texture-Aware Self-Supervised Blind Denoising using Self-Residual Learning for Real-World Images

Feb 21, 2023

Abstract: Although self-supervised blind denoising, which needs no clean supervision, has become significantly superior to conventional approaches in synthetic noise scenarios, it shows poor quality in real-world images due to spatially correlated noise corruption. Recently, pixel-shuffle downsampling (PD) has been proposed to eliminate the spatial correlation of noise. A study combining a blind spot network (BSN) and asymmetric PD (AP) successfully demonstrated that self-supervised blind denoising is applicable to real-world noisy images. However, PD-based inference may degrade texture details in the testing phase because high-frequency details (e.g., edges) are destroyed in the downsampled images. To avoid this issue, we propose self-residual learning without the PD process to maintain texture information. We also propose an order-variant PD constraint, a noise prior loss, and an efficient inference scheme (progressive random-replacing refinement ($\text{PR}^3$)) to boost overall performance. The results of extensive experiments show that the proposed method outperforms state-of-the-art self-supervised blind denoising approaches, as well as several supervised learning methods, in terms of PSNR, SSIM, LPIPS, and DISTS in real-world sRGB images.
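As a rough illustration of the pixel-shuffle downsampling (PD) operation mentioned in the abstract, the sketch below splits a single-channel image into strided sub-images and reassembles them. The function names and the NumPy implementation are assumptions made here for illustration; they are not taken from the paper's code.

```python
import numpy as np

def pixel_shuffle_downsample(img, stride):
    """Split an (H, W) image into stride*stride sub-images of shape (H//stride, W//stride)."""
    h, w = img.shape
    assert h % stride == 0 and w % stride == 0, "image size must be divisible by stride"
    # Sub-image (i, j) keeps rows i, i+stride, ... and columns j, j+stride, ...
    return np.stack([img[i::stride, j::stride]
                     for i in range(stride) for j in range(stride)])

def pixel_shuffle_upsample(subs, stride):
    """Inverse of pixel_shuffle_downsample: interleave the sub-images back into one image."""
    _, h, w = subs.shape
    out = np.empty((h * stride, w * stride), dtype=subs.dtype)
    k = 0
    for i in range(stride):
        for j in range(stride):
            out[i::stride, j::stride] = subs[k]
            k += 1
    return out

img = np.arange(16).reshape(4, 4)
subs = pixel_shuffle_downsample(img, 2)      # 4 sub-images of shape (2, 2)
restored = pixel_shuffle_upsample(subs, 2)   # identical to img
```

Neighbouring pixels of the original image land in different sub-images, so noise that is correlated between adjacent pixels becomes less correlated within each sub-image. The same subsampling also spreads edges and fine textures across sub-images, which is consistent with the texture degradation the abstract describes.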
