Kyeongsu Kang

Bayesian NeRF: Quantifying Uncertainty with Volume Density in Neural Radiance Fields

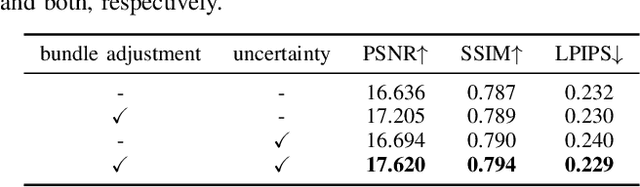

Apr 10, 2024

Abstract: We present the Bayesian Neural Radiance Field (NeRF), which explicitly quantifies uncertainty in geometric volume structures without the need for additional networks, making it well suited to challenging observations and uncontrolled images. NeRF diverges from traditional geometric methods by offering an enriched scene representation, rendering color and density in 3D space from various viewpoints. However, NeRF encounters limitations in relaxing uncertainties by using geometric structure information, leading to inaccuracies in interpretation under insufficient real-world observations. Recent research efforts aimed at addressing this issue have primarily relied on empirical methods or auxiliary networks. To fundamentally address this issue, we propose a series of formulational extensions to NeRF. By introducing generalized approximations and defining density-related uncertainty, our method seamlessly extends to manage uncertainty not only for RGB but also for depth, without the need for additional networks or empirical assumptions. In experiments, we show that our method significantly enhances performance on RGB and depth images on a comprehensive dataset, demonstrating the reliability of the Bayesian NeRF approach to quantifying uncertainty based on the geometric structure.

Necessity Feature Correspondence Estimation for Large-scale Global Place Recognition and Relocalization

Mar 11, 2023

Abstract: Global place recognition and 3D relocalization are among the most important components of loop closure detection in 3D LiDAR Simultaneous Localization and Mapping (SLAM). To find an accurate global 6-DoF transform through feature matching, various end-to-end architectures have been proposed. However, existing methods do not consider false feature correspondences, so unnecessary features are also involved in global place recognition and relocalization. In this paper, we introduce a robust correspondence estimation method that removes unnecessary features and highlights necessary features simultaneously. To focus on the necessary features and ignore the unnecessary ones, we use the geometric correlation between two scenes represented as 3D LiDAR point clouds. We introduce a correspondence auxiliary loss that finds key correlations based on the point align algorithm and enables end-to-end training of the proposed networks with robust correspondence estimation. Since the ground, with its many plane patches, acts as an outlier during correspondence estimation, we also propose a preprocessing step that accounts for negative correspondences by removing dominant plane patches. Evaluation results on a dynamic urban driving dataset show that our proposed method can improve the performance of both global place recognition and relocalization tasks. We show that robust feature correspondence estimation is an important factor in place recognition and relocalization.

Just Flip: Flipped Observation Generation and Optimization for Neural Radiance Fields to Cover Unobserved View

Mar 11, 2023

Abstract: With the advent of Neural Radiance Field (NeRF), representing 3D scenes through multiple observations has shown remarkable improvements in performance. Since this cutting-edge technique is able to obtain high-resolution renderings by interpolating dense 3D environments, various approaches have been proposed to apply NeRF to spatial understanding in robot perception. However, previous works struggle to represent unobserved scenes or views along an unexplored robot trajectory, as they do not take into account 3D reconstruction without observation information. To overcome this problem, we propose a method that generates flipped observations to cover the missing observations along an unexplored robot trajectory. To achieve this, we propose a data augmentation method for 3D reconstruction using NeRF that flips observed images and estimates the flipped camera 6DOF poses. Our technique exploits the geometric symmetry of objects, making it simple, fast, and powerful, and therefore suitable for robotic applications where real-time performance is important. We demonstrate that our method significantly improves three representative perceptual quality measures on the NeRF synthetic dataset.
