Krishnananda Prabhu Sivananda

Augmented Environment Representations with Complete Object Models

Mar 12, 2021

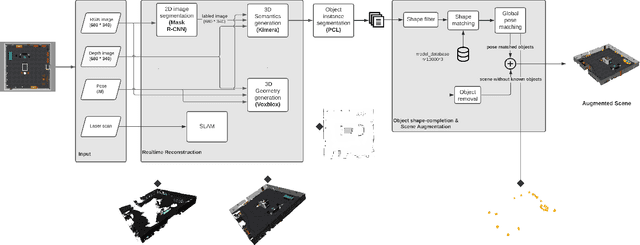

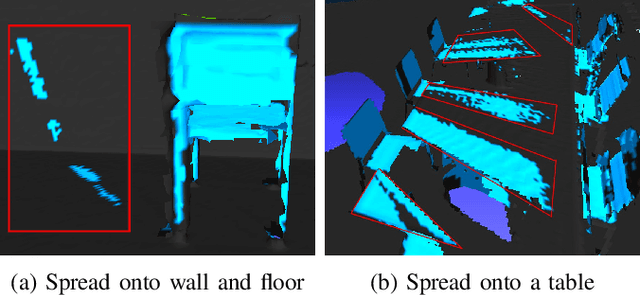

Abstract: While the 2D occupancy maps commonly used in mobile robotics enable safe navigation in indoor environments, robots need a representation of 3D geometry and semantic information to understand their environment well enough to perform more advanced tasks. We propose a pipeline that generates a multi-layer representation of indoor environments for robotic applications. The proposed representation includes 3D metric-semantic layers, a 2D occupancy layer, and an object instance layer in which known objects are replaced with approximate models obtained through a novel model-matching approach. The metric-semantic layer and the object instance layer are combined to form an augmented representation of the environment. Experiments show that the proposed shape-matching method outperforms a state-of-the-art deep learning method when tasked with completing unseen parts of objects in the scene. F1-score analysis shows that the pipeline's performance transfers well from simulation to the real world, with the semantic segmentation accuracy of Mask R-CNN acting as the major bottleneck. Finally, we demonstrate on a real robotic platform how the multi-layer map can be used to improve navigation safety.
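The abstract does not describe how the layers feed into one another, so what follows is a minimal, hypothetical Python sketch of one common way a 3D layer can drive a 2D occupancy layer: points inside an assumed robot height band are projected onto a grid. The class `MultiLayerMap`, its fields, the `occupancy_2d` method, the helper `count_occupied` and the height thresholds `z_min`/`z_max` are all illustrative assumptions, not the authors' implementation. The sketch shows how points contributed by completed object models (for example, an unseen part of a table) can mark grid cells that the observed geometry alone would leave free.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical point format: (x, y, z, semantic label), metres in the map frame
Point = Tuple[float, float, float, str]


@dataclass
class MultiLayerMap:
    """Hypothetical container for the layers named in the abstract (not the authors' code)."""
    resolution: float  # edge length of one 2D grid cell, in metres
    width: int         # number of cells along x
    height: int        # number of cells along y
    semantic_points: List[Point] = field(default_factory=list)  # observed metric-semantic layer
    object_points: List[Point] = field(default_factory=list)    # points sampled from matched object models

    def occupancy_2d(self, z_min: float = 0.05, z_max: float = 1.5,
                     include_objects: bool = True) -> List[List[int]]:
        """Project points inside the assumed height band [z_min, z_max] onto a 2D grid.

        Returns a row-major grid (rows index y, columns index x) with 1 for occupied cells.
        """
        grid = [[0] * self.width for _ in range(self.height)]
        points = list(self.semantic_points)
        if include_objects:
            points += self.object_points  # augmented map: add completed object geometry
        for x, y, z, _label in points:
            if not z_min <= z <= z_max:
                continue  # points on the floor or above the robot do not block it
            row = math.floor(y / self.resolution)
            col = math.floor(x / self.resolution)
            if 0 <= row < self.height and 0 <= col < self.width:
                grid[row][col] = 1
        return grid


def count_occupied(grid: List[List[int]]) -> int:
    """Number of occupied cells in a grid."""
    return sum(sum(row) for row in grid)


if __name__ == "__main__":
    m = MultiLayerMap(resolution=0.1, width=10, height=10)
    # Observed: one table leg and a floor point (the floor point is filtered out by z_min)
    m.semantic_points = [(0.33, 0.33, 0.40, "table"), (0.50, 0.50, 0.0, "floor")]
    # Hypothetical completion from a matched table model: part of the tabletop
    m.object_points = [(0.67, 0.33, 0.75, "table")]
    print(count_occupied(m.occupancy_2d(include_objects=False)))  # 1
    print(count_occupied(m.occupancy_2d()))                       # 2
```

In this toy example, the cell under the model-completed part of the table is marked occupied only when the object layer is included, which is the kind of effect a navigation stack could exploit to avoid obstacles that a 2D laser scan or partial 3D observation would miss.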
