Koshi Watanabe

StarMAP: Global Neighbor Embedding for Faithful Data Visualization

Feb 06, 2025

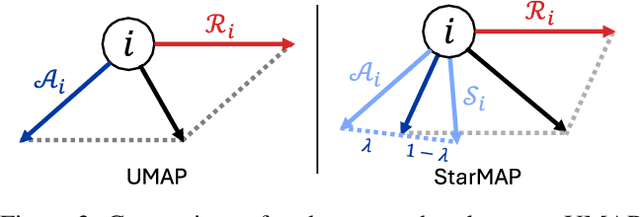

Abstract: Neighbor embedding is widely employed to visualize high-dimensional data; however, it frequently overlooks the global structure, e.g., intercluster similarities, thereby impeding accurate visualization. To address this problem, this paper presents Star-attracted Manifold Approximation and Projection (StarMAP), which incorporates the advantage of principal component analysis (PCA) in neighbor embedding. Inspired by the property of PCA embedding, which can be viewed as the largest shadow of the data, StarMAP introduces the concept of *star attraction* by leveraging the PCA embedding. This approach yields faithful global structure preservation while maintaining the interpretability and computational efficiency of neighbor embedding. StarMAP was compared with existing methods in the visualization tasks of toy datasets, single-cell RNA sequencing data, and deep representation. The experimental results show that StarMAP is simple but effective in realizing faithful visualizations.

Hyperboloid GPLVM for Discovering Continuous Hierarchies via Nonparametric Estimation

Oct 22, 2024

Abstract: Dimensionality reduction (DR) offers a useful representation of complex high-dimensional data. Recent DR methods focus on hyperbolic geometry to derive a faithful low-dimensional representation of hierarchical data. However, existing methods are based on neighbor embedding, frequently ruining the continual relation of the hierarchies. This paper presents hyperboloid Gaussian process (GP) latent variable models (hGP-LVMs) to embed high-dimensional hierarchical data with implicit continuity via nonparametric estimation. We adopt generative modeling using the GP, which brings effective hierarchical embedding and executes ill-posed hyperparameter tuning. This paper presents three variants that employ original point, sparse point, and Bayesian estimations. We establish their learning algorithms by incorporating the Riemannian optimization and active approximation scheme of GP-LVM. For Bayesian inference, we further introduce the reparameterization trick to realize Bayesian latent variable learning. In the last part of this paper, we apply hGP-LVMs to several datasets and show their ability to represent high-dimensional hierarchies in low-dimensional spaces.