Konstantin Ziegler

Global and Local Feature Learning for Ego-Network Analysis

Feb 16, 2020

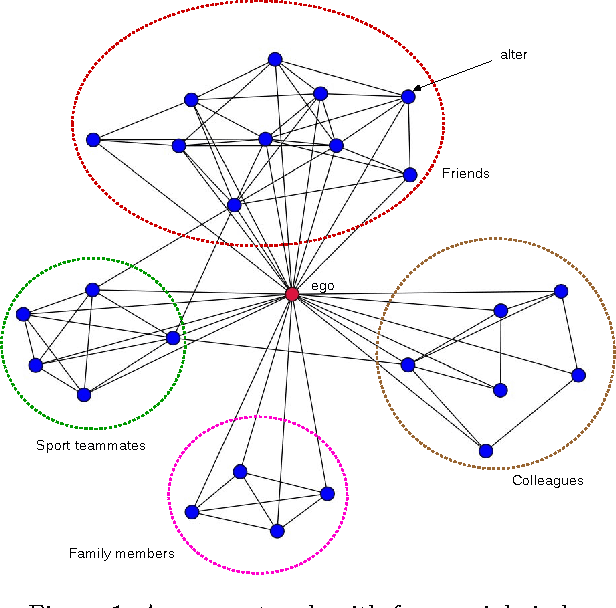

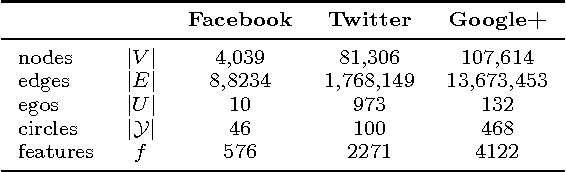

Abstract: In an ego-network, an individual (ego) organizes its friends (alters) into different groups (social circles). This social network can be analyzed efficiently after learning representations of the ego and its alters in a low-dimensional, real vector space. Statistical models can then readily exploit these representations for tasks such as social circle detection and prediction. Recent advances in language modeling via deep learning have inspired new methods for learning network representations. These methods can capture the global structure of networks. In this paper, we extend these techniques to also encode the local structure of neighborhoods. As a result, our local representations capture network features that are hidden in the global representation of large networks. We show that the task of social circle prediction benefits from a combination of global and local features generated by our technique.

Analysing Neural Network Topologies: a Game Theoretic Approach

Apr 17, 2019

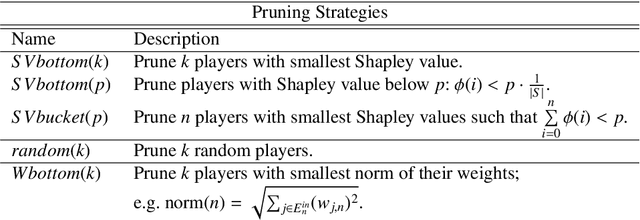

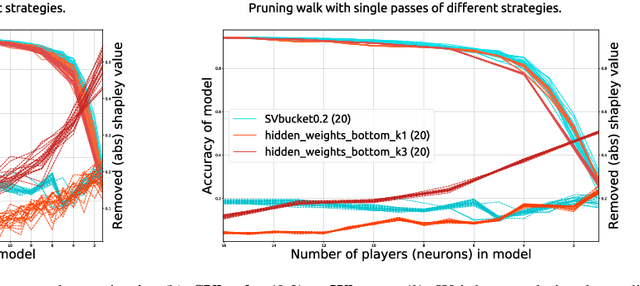

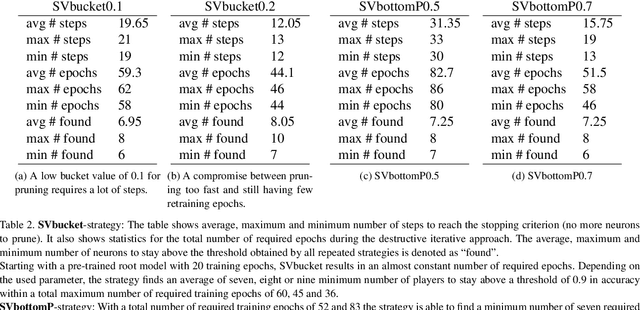

Abstract: Artificial neural networks have shown impressive success in very different application cases. Choosing a proper network architecture is a critical decision for a network's success and is usually made manually. As a straightforward strategy, large, mostly fully connected architectures are selected, thereby relying on a good optimization strategy to find proper weights while at the same time avoiding overfitting. However, large parts of the final network are redundant. In the best case, large parts of the network simply become irrelevant for later inference. In the worst case, highly parameterized architectures hinder proper optimization and allow the easy creation of adversarial examples that fool the network. A first step in removing irrelevant architectural parts lies in identifying those parts, which requires measuring the contribution of individual components such as neurons. In previous work, heuristics that use the weight distribution of a neuron as a contribution measure have shown some success, but they do not provide a proper theoretical understanding. Therefore, in our work we investigate game-theoretic measures, namely the Shapley value (SV), in order to separate relevant from irrelevant parts of an artificial neural network. We begin by designing a coalitional game for an artificial neural network, where neurons form coalitions and the average contributions of neurons to coalitions yield the Shapley value. In order to assess how well the Shapley value captures the contribution of individual neurons, we remove low-contributing neurons and measure the impact of their removal on the network performance. In our experiments we show that the Shapley value outperforms other heuristics for measuring the contribution of neurons.
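To make the coalitional-game idea concrete, the following is a minimal sketch of an exact Shapley value computation for a small set of players. It is not the paper's implementation: the player names (`n1`, `n2`, `n3`), the function `shapley_values`, and the characteristic function `toy_value` are all hypothetical. In the paper's setting the value of a coalition would presumably come from evaluating the network with only those neurons active; here a hand-written toy function stands in for that.

```python
from itertools import combinations
from math import factorial


def shapley_values(players, value):
    """Exact Shapley values for a coalitional game.

    players: list of hashable player identifiers (here: hypothetical neuron names).
    value:   function mapping a frozenset of players to a real number.
    Cost grows exponentially with len(players), so this is only for tiny games.
    """
    n = len(players)
    phi = {p: 0.0 for p in players}
    for p in players:
        others = [q for q in players if q != p]
        for k in range(n):
            # Weight of a coalition of size k that does not contain p.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                s = frozenset(coalition)
                # Marginal contribution of p when joining coalition s.
                phi[p] += weight * (value(s | {p}) - value(s))
    return phi


def toy_value(coalition):
    """Hypothetical stand-in for 'network performance with these neurons active'."""
    score = 0.0
    if "n1" in coalition:
        score += 0.6
    if "n2" in coalition:
        score += 0.3
    # "n3" never changes the score, so it is an irrelevant (null) player.
    return score


players = ["n1", "n2", "n3"]
phi = shapley_values(players, toy_value)

# Rank players from lowest to highest contribution; the lowest-ranked ones
# would be the first candidates for removal.
removal_order = sorted(players, key=lambda p: phi[p])
```

In this toy game the null player `n3` receives a Shapley value of zero and is ranked first for removal. For realistic networks the exact sum over all coalitions is intractable, so some form of sampling-based approximation would be needed; that choice is outside this sketch.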
