Kirthi Shankar Sivamani

Non-Intrusive Detection of Adversarial Deep Learning Attacks via Observer Networks

Feb 22, 2020

Abstract:Recent studies have shown that deep learning models are vulnerable to specifically crafted adversarial inputs that are quasi-imperceptible to humans. In this letter, we propose a novel method to detect adversarial inputs, by augmenting the main classification network with multiple binary detectors (observer networks) which take inputs from the hidden layers of the original network (convolutional kernel outputs) and classify the input as clean or adversarial. During inference, the detectors are treated as a part of an ensemble network and the input is deemed adversarial if at least half of the detectors classify it as so. The proposed method addresses the trade-off between accuracy of classification on clean and adversarial samples, as the original classification network is not modified during the detection process. The use of multiple observer networks makes attacking the detection mechanism non-trivial even when the attacker is aware of the victim classifier. We achieve a 99.5% detection accuracy on the MNIST dataset and 97.5% on the CIFAR-10 dataset using the Fast Gradient Sign Attack in a semi-white box setup. The number of false positive detections is a mere 0.12% in the worst case scenario.

Feature Losses for Adversarial Robustness

Dec 10, 2019

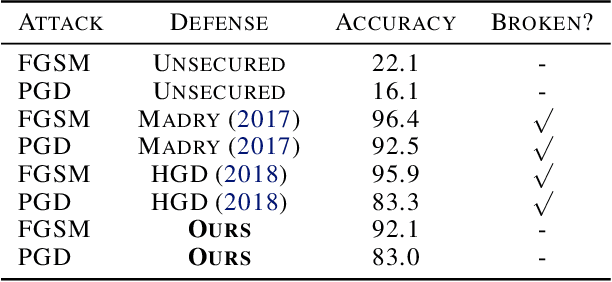

Abstract:Deep learning has made tremendous advances in computer vision tasks such as image classification. However, recent studies have shown that deep learning models are vulnerable to specifically crafted adversarial inputs that are quasi-imperceptible to humans. In this work, we propose a novel approach to defending adversarial attacks. We employ an input processing technique based on denoising autoencoders as a defense. It has been shown that the input perturbations grow and accumulate as noise in feature maps while propagating through a convolutional neural network (CNN). We exploit the noisy feature maps by using an additional subnetwork to extract image feature maps and train an auto-encoder on perceptual losses of these feature maps. This technique achieves close to state-of-the-art results on defending MNIST and CIFAR10 datasets, but more importantly, shows a new way of employing a defense that cannot be trivially trained end-to-end by the attacker. Empirical results demonstrate the effectiveness of this approach on the MNIST and CIFAR10 datasets on simple as well as iterative LP attacks. Our method can be applied as a preprocessing technique to any off the shelf CNN.

Unsupervised Domain Alignment to Mitigate Low Level Dataset Biases

Jul 12, 2019

Abstract:Dataset bias is a well-known problem in the field of computer vision. The presence of implicit bias in any image collection hinders a model trained and validated on a particular dataset to yield similar accuracies when tested on other datasets. In this paper, we propose a novel debiasing technique to reduce the effects of a biased training dataset. Our goal is to augment the training data using a generative network by learning a non-linear mapping from the source domain (training set) to the target domain (testing set) while retaining training set labels. The cycle consistency loss and adversarial loss for generative adversarial networks are used to learn the mapping. A structured similarity index (SSIM) loss is used to enforce label retention while augmenting the training set. Our methods and hypotheses are supported by quantitative comparisons with prior debiasing techniques. These comparisons showcase the superiority of our method and its potential to mitigate the effects of dataset bias during the inference stage.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge