Kinshuk Dua

FedUKD: Federated UNet Model with Knowledge Distillation for Land Use Classification from Satellite and Street Views

Dec 05, 2022

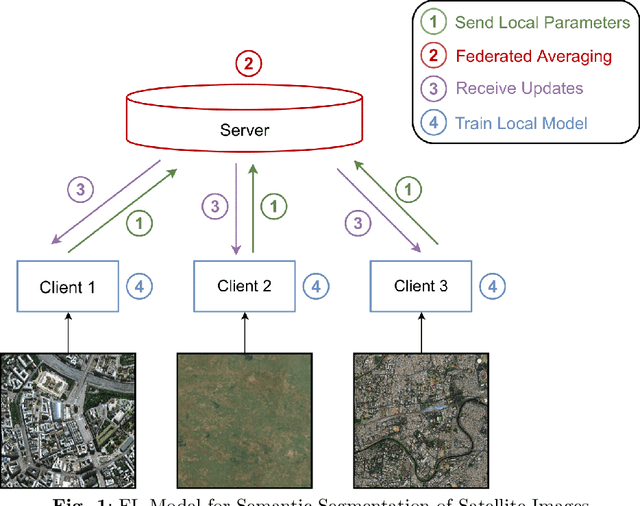

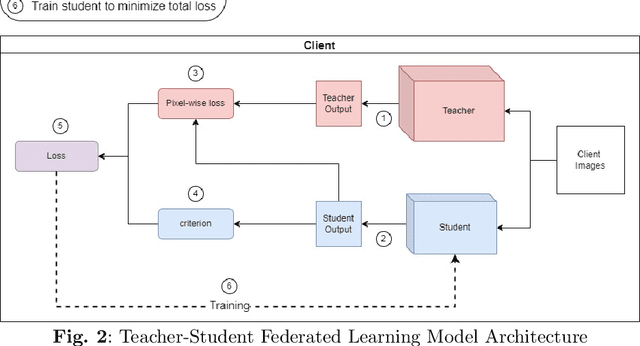

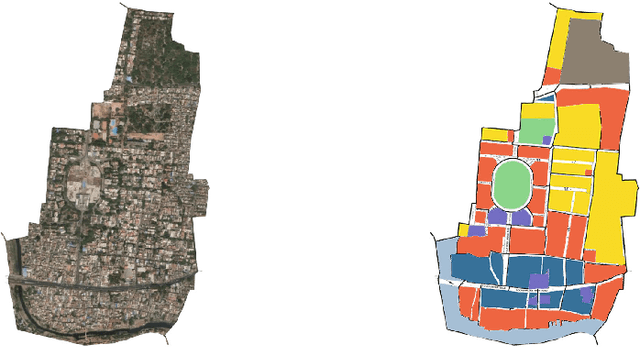

Abstract:Federated Deep Learning frameworks can be used strategically to monitor Land Use locally and infer environmental impacts globally. Distributed data from across the world would be needed to build a global model for Land Use classification. The need for a Federated approach in this application domain would be to avoid transfer of data from distributed locations and save network bandwidth to reduce communication cost. We use a Federated UNet model for Semantic Segmentation of satellite and street view images. The novelty of the proposed architecture is the integration of Knowledge Distillation to reduce communication cost and response time. The accuracy obtained was above 95% and we also brought in a significant model compression to over 17 times and 62 times for street View and satellite images respectively. Our proposed framework has the potential to be a game-changer in real-time tracking of climate change across the planet.

HyBNN and FedHyBNN: (Federated) Hybrid Binary Neural Networks

May 19, 2022

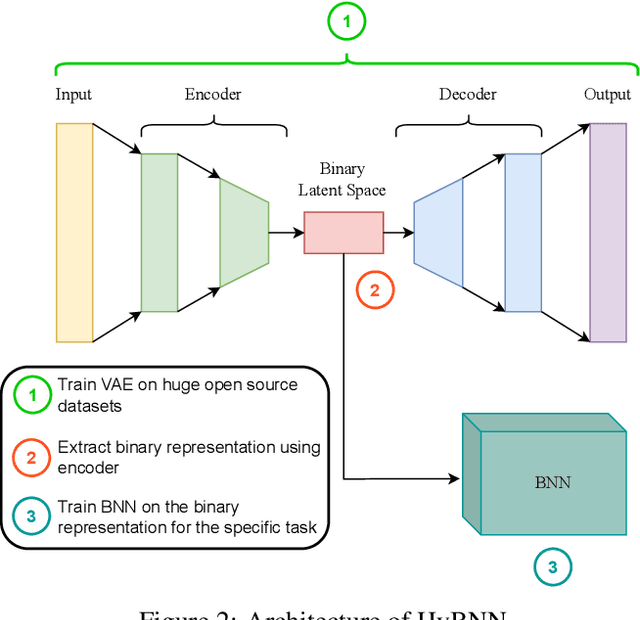

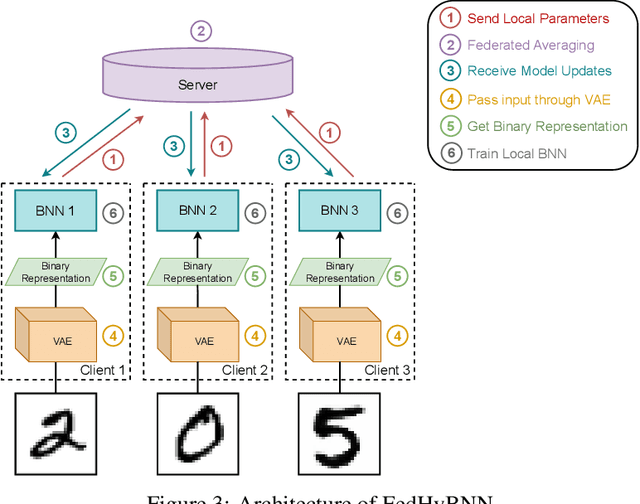

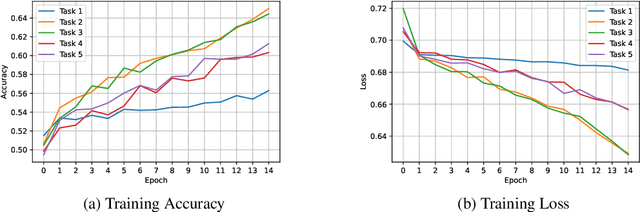

Abstract:Binary Neural Networks (BNNs), neural networks with weights and activations constrained to -1(0) and +1, are an alternative to deep neural networks which offer faster training, lower memory consumption and lightweight models, ideal for use in resource constrained devices while being able to utilize the architecture of their deep neural network counterpart. However, the input binarization step used in BNNs causes a severe accuracy loss. In this paper, we introduce a novel hybrid neural network architecture, Hybrid Binary Neural Network (HyBNN), consisting of a task-independent, general, full-precision variational autoencoder with a binary latent space and a task specific binary neural network that is able to greatly limit the accuracy loss due to input binarization by using the full precision variational autoencoder as a feature extractor. We use it to combine the state-of-the-art accuracy of deep neural networks with the much faster training time, quicker test-time inference and power efficiency of binary neural networks. We show that our proposed system is able to very significantly outperform a vanilla binary neural network with input binarization. We also introduce FedHyBNN, a highly communication efficient federated counterpart to HyBNN and demonstrate that it is able to reach the same accuracy as its non-federated equivalent. We make our source code, experimental parameters and models available at: https://anonymous.4open.science/r/HyBNN.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge