Kevin N. Webster

Rewiring Networks for Graph Neural Network Training Using Discrete Geometry

Jul 16, 2022

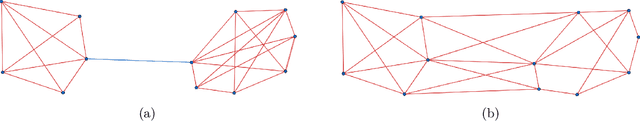

Abstract: Information over-squashing is the inefficient propagation of information between distant nodes on a network. It is known to significantly impair the training of graph neural networks (GNNs), because the receptive field of a node grows exponentially with the number of message-passing layers. To mitigate this problem, a preprocessing procedure known as rewiring is often applied to the input network. In this paper, we investigate the use of discrete analogues of classical geometric notions of curvature to model information flow on networks and to rewire them. We show that these classical notions achieve state-of-the-art GNN training accuracy on a variety of real-world network datasets. Moreover, they are several orders of magnitude faster to compute than the current state-of-the-art rewiring methods.
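To make the idea of a discrete curvature concrete, here is a minimal sketch. The abstract does not name the curvature notions the paper uses, so this is an assumption for illustration only: it uses the simplest combinatorial Forman curvature of an edge in an unweighted, undirected graph (ignoring triangles), F(u, v) = 4 - deg(u) - deg(v). Strongly negative edges connect high-degree nodes and are plausible bottleneck candidates that a rewiring step might target. The function name `forman_curvature` and the toy graph are hypothetical.

```python
# Illustrative only: combinatorial Forman curvature of each edge in an
# unweighted, undirected graph, ignoring triangles:
#     F(u, v) = 4 - deg(u) - deg(v)
# This is an assumed stand-in, not necessarily the curvature used in the paper.

def forman_curvature(adj):
    """Return {(u, v): curvature} for each undirected edge, keyed with u < v."""
    curv = {}
    for u, nbrs in adj.items():
        for v in nbrs:
            if u < v:  # visit each undirected edge once
                curv[(u, v)] = 4 - len(adj[u]) - len(adj[v])
    return curv

# Hypothetical toy graph: two triangles joined by a single bridge edge (2, 3).
adj = {
    0: {1, 2},
    1: {0, 2},
    2: {0, 1, 3},
    3: {2, 4, 5},
    4: {3, 5},
    5: {3, 4},
}

curvature = forman_curvature(adj)
# The edge with the lowest curvature is the bridge between the two clusters.
most_negative_edge = min(curvature, key=curvature.get)
```

In this toy graph the bridge edge has the lowest curvature, which matches the intuition that negatively curved edges mark places where information from many nodes is squeezed through a narrow connection. Each edge needs only the degrees of its two endpoints, which hints at why such classical notions can be cheap to compute.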
