Kevin Hyekang Joo

Multimodal Fusion with LLMs for Engagement Prediction in Natural Conversation

Sep 13, 2024

Abstract: Over the past decade, wearable computing devices ("smart glasses") have undergone remarkable advancements in sensor technology, design, and processing power, ushering in a new era of opportunity for high-density human behavior data. Equipped with wearable cameras, these glasses offer a unique opportunity to analyze non-verbal behavior in natural settings as individuals interact. Our focus lies in predicting engagement in dyadic interactions by scrutinizing verbal and non-verbal cues, aiming to detect signs of disinterest or confusion. Leveraging such analyses may revolutionize our understanding of human communication, foster more effective collaboration in professional environments, provide better mental health support through empathetic virtual interactions, and enhance accessibility for those with communication barriers. In this work, we collect a dataset featuring 34 participants engaged in casual dyadic conversations, each providing self-reported engagement ratings at the end of each conversation. We introduce a novel fusion strategy using Large Language Models (LLMs) to integrate multiple behavior modalities into a "multimodal transcript" that can be processed by an LLM for behavioral reasoning tasks. Remarkably, this method achieves performance comparable to established fusion techniques even in its preliminary implementation, indicating strong potential for further research and optimization. This fusion method is one of the first to approach "reasoning" about real-world human behavior through a language model. Smart glasses provide us the ability to unobtrusively gather high-density multimodal data on human behavior, paving the way for new approaches to understanding and improving human communication with the potential for important societal benefits. The features and data collected during the studies will be made publicly available to promote further research.

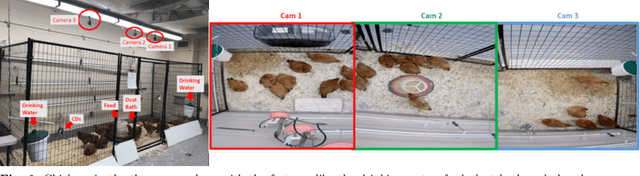

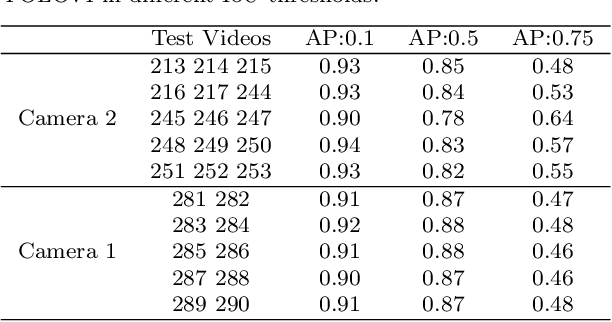

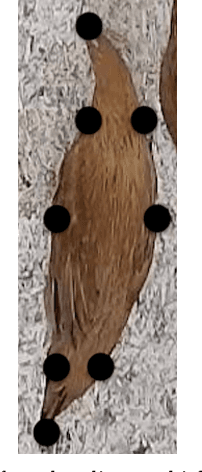

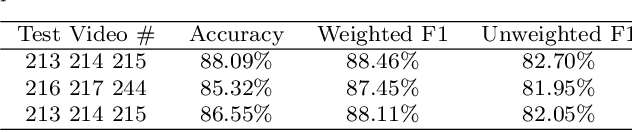

Birds' Eye View: Measuring Behavior and Posture of Chickens as a Metric for Their Well-Being

Apr 29, 2022

Abstract: Chicken well-being is important for ensuring food security and better nutrition for a growing global human population. In this research, we represent behavior and posture as a metric to measure chicken well-being. With the objective of detecting chicken posture and behavior in a pen, we employ two algorithms: Mask R-CNN for instance segmentation and YOLOv4 in combination with ResNet50 for classification. Our results indicate a weighted F1 score of 88.46% for posture and behavior detection using Mask R-CNN and an average of 91% accuracy in behavior detection and 86.5% average accuracy in posture detection using YOLOv4. These experiments are conducted under uncontrolled scenarios for both posture and behavior measurements. These metrics establish a strong foundation to obtain a decent indication of individual and group behaviors and postures. Such outcomes would help improve the overall well-being of the chickens. The dataset used in this research is collected in-house and will be made public after the publication as it would serve as a very useful resource for future research. To the best of our knowledge, no other research work has been conducted in this specific setup used for this work involving multiple behaviors and postures simultaneously.
