Keerthika Rajvel

A Survey of Voice Translation Methodologies - Acoustic Dialect Decoder

Oct 13, 2016

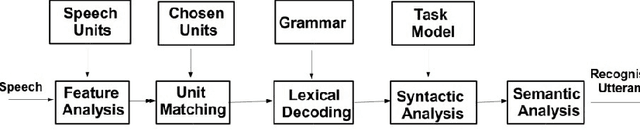

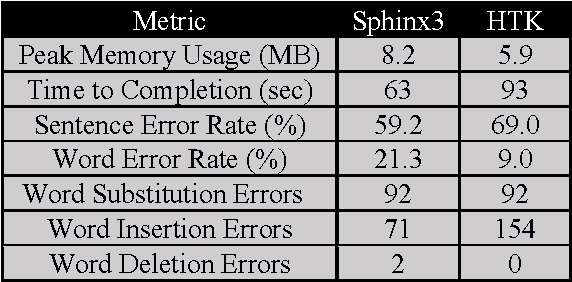

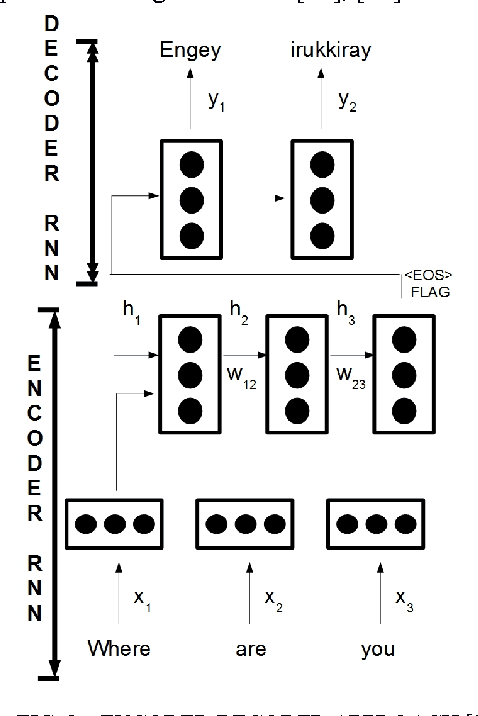

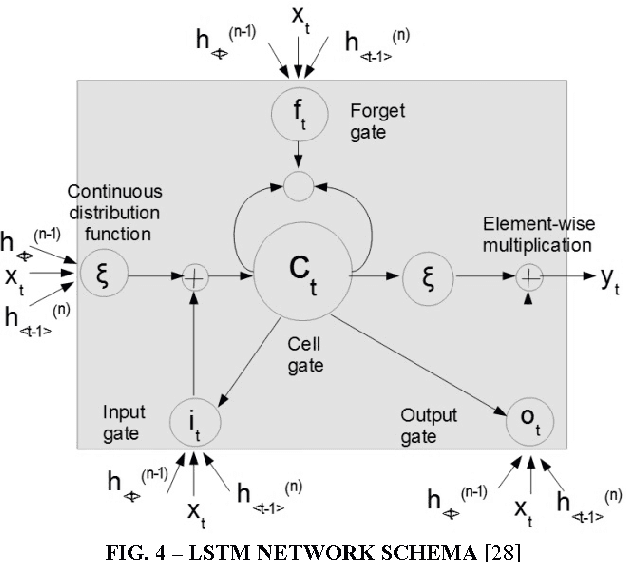

Abstract:Speech Translation has always been about giving source text or audio input and waiting for system to give translated output in desired form. In this paper, we present the Acoustic Dialect Decoder (ADD) - a voice to voice ear-piece translation device. We introduce and survey the recent advances made in the field of Speech Engineering, to employ in the ADD, particularly focusing on the three major processing steps of Recognition, Translation and Synthesis. We tackle the problem of machine understanding of natural language by designing a recognition unit for source audio to text, a translation unit for source language text to target language text, and a synthesis unit for target language text to target language speech. Speech from the surroundings will be recorded by the recognition unit present on the ear-piece and translation will start as soon as one sentence is successfully read. This way, we hope to give translated output as and when input is being read. The recognition unit will use Hidden Markov Models (HMMs) Based Tool-Kit (HTK), hybrid RNN systems with gated memory cells, and the synthesis unit, HMM based speech synthesis system HTS. This system will initially be built as an English to Tamil translation device.

* (8 pages, 7 figures, IEEE Digital Xplore paper)

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge