Katja Seeliger

Generalization of an Upper Bound on the Number of Nodes Needed to Achieve Linear Separability

Feb 10, 2018

Abstract: An important issue in neural network research is how to choose the number of nodes and layers needed to solve a classification problem. We provide new intuitions based on earlier results by An et al. (2015) by deriving an upper bound on the number of nodes in networks with two hidden layers such that linear separability can be achieved. Concretely, we show that if the data can be described in terms of N finite sets and the activation function f is non-constant, increasing, and has a left asymptote, we can derive how many nodes are needed to linearly separate these sets. This upper bound depends on the structure of the data, which can be analyzed using an algorithm. For the leaky rectified linear activation function, we prove separately that, under some conditions on the slope, the same number of layers and nodes as for the aforementioned activation functions suffices. We empirically validate our claims.
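As a toy illustration of the underlying idea (hidden nodes with a suitable activation mapping sets that are not linearly separable onto sets that are), the sketch below uses the classic XOR problem. The four XOR points cannot be separated by a line in input space, but two sigmoid units map them to a representation that a single linear threshold separates. The sigmoid satisfies the conditions named above (non-constant, increasing, left asymptote at 0). This is not the construction or bound from the paper; the steepness `K`, the hidden weights, and the output weights `w`, `b` are hand-picked assumptions.

```python
import math

def sigmoid(z):
    # Non-constant, increasing, with a left asymptote at 0.
    return 1.0 / (1.0 + math.exp(-z))

K = 10.0  # hypothetical steepness of the hidden units

def hidden(x1, x2):
    # Two hidden units thresholding the sum x1 + x2 at 0.5 and 1.5.
    s = x1 + x2
    return (sigmoid(K * (s - 0.5)), sigmoid(K * (s - 1.5)))

points = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [0, 1, 1, 0]  # XOR: not linearly separable in input space

# Hand-picked linear separator in the hidden representation.
w = (1.0, -1.0)
b = -0.5

def classify(h):
    return 1 if w[0] * h[0] + w[1] * h[1] + b > 0 else 0

predictions = [classify(hidden(*p)) for p in points]
print(predictions)  # [0, 1, 1, 0]
```

The hidden value pairs are roughly (0.007, 0.000) for (0,0), (0.993, 0.007) for the two mixed points, and (1.000, 0.993) for (1,1), so the line h1 - h2 = 0.5 separates the two classes.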

Deep adversarial neural decoding

Jun 15, 2017

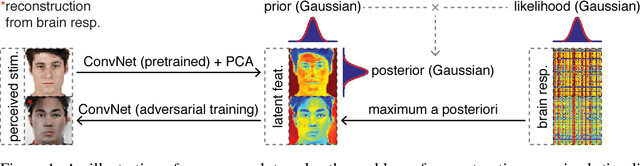

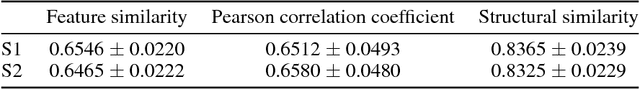

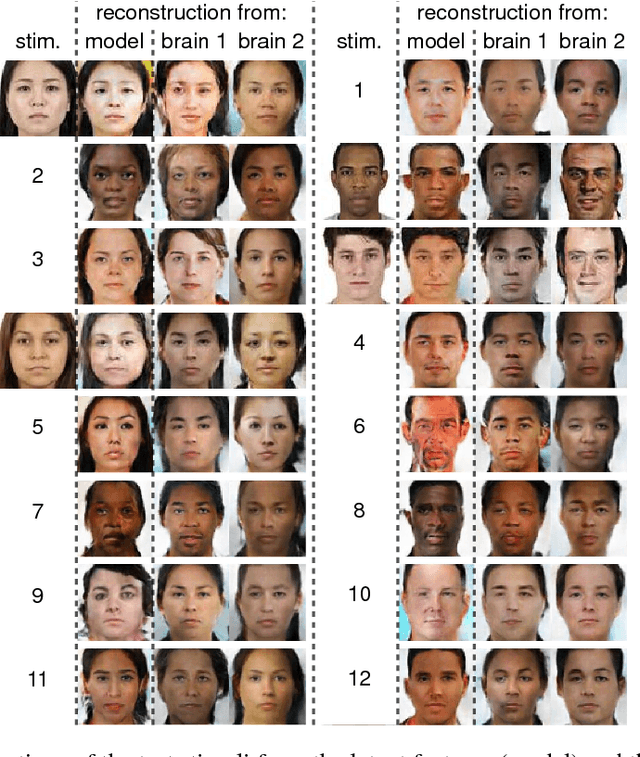

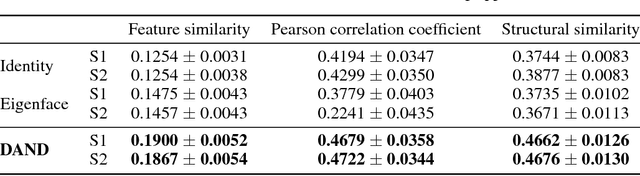

Abstract: Here, we present a novel approach to reconstructing perceived stimuli from brain responses that combines probabilistic inference with deep learning. Our approach first inverts the linear transformation from latent features to brain responses with maximum a posteriori estimation and then inverts the nonlinear transformation from perceived stimuli to latent features with adversarial training of convolutional neural networks. We test our approach with a functional magnetic resonance imaging experiment and show that it can generate state-of-the-art reconstructions of perceived faces from brain activations.
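The first step, inverting a linear map from latent features to brain responses by maximum a posteriori (MAP) estimation, can be sketched under a standard linear-Gaussian assumption: responses y = B x + noise with Gaussian noise of variance `noise_var`, and a zero-mean Gaussian prior on the latent features x with covariance `prior_cov`. These distributional choices, the function name `map_invert`, and the simulated data are illustrative assumptions, not necessarily the exact model used in the paper. Under them the posterior is Gaussian and its mode has a closed form.

```python
import numpy as np

def map_invert(B, y, noise_var, prior_cov):
    """MAP estimate of latent features x from responses y, assuming
    y = B @ x + eps, eps ~ N(0, noise_var * I), and x ~ N(0, prior_cov)."""
    # Posterior precision = likelihood precision + prior precision.
    precision = B.T @ B / noise_var + np.linalg.inv(prior_cov)
    # Solve rather than invert for numerical stability.
    return np.linalg.solve(precision, B.T @ y / noise_var)

# Simulated example: 5 latent features observed through 50 "voxels".
rng = np.random.default_rng(0)
n_voxels, n_features = 50, 5
B = rng.standard_normal((n_voxels, n_features))
x_true = rng.standard_normal(n_features)
noise_var = 0.01
y = B @ x_true + np.sqrt(noise_var) * rng.standard_normal(n_voxels)

x_hat = map_invert(B, y, noise_var, np.eye(n_features))
print(np.round(x_hat - x_true, 3))  # small reconstruction errors
```

With an isotropic prior of variance tau^2, the estimate reduces to ridge regression with penalty noise_var / tau^2, i.e. solve(B.T @ B + (noise_var / tau^2) * I, B.T @ y).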
