Katarzyna Radecka

FoodTracker: A Real-time Food Detection Mobile Application by Deep Convolutional Neural Networks

Sep 16, 2019

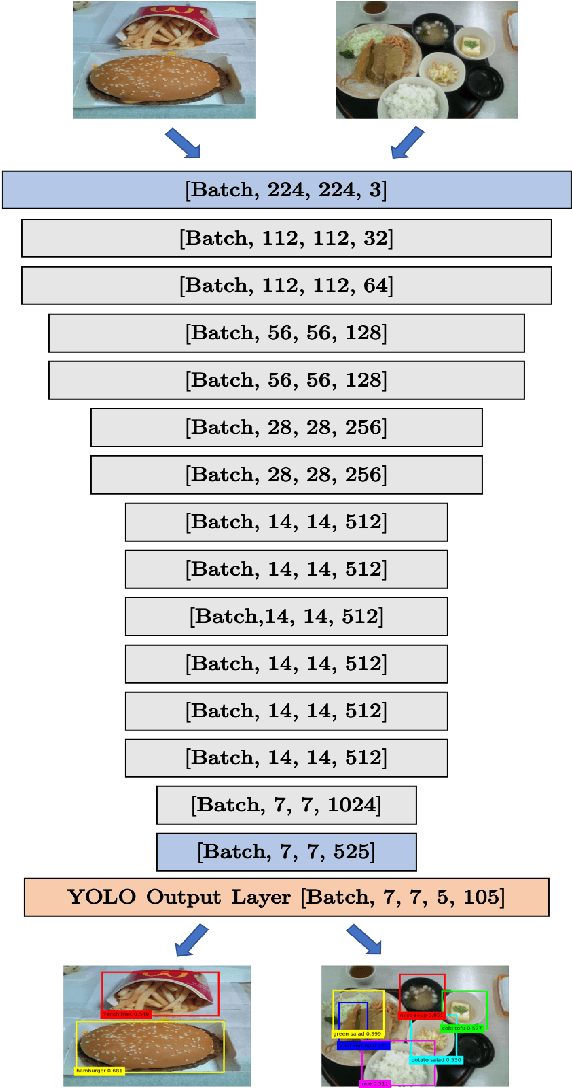

Abstract:We present a mobile application made to recognize food items of multi-object meal from a single image in real-time, and then return the nutrition facts with components and approximate amounts. Our work is organized in two parts. First, we build a deep convolutional neural network merging with YOLO, a state-of-the-art detection strategy, to achieve simultaneous multi-object recognition and localization with nearly 80% mean average precision. Second, we adapt our model into a mobile application with extending function for nutrition analysis. After inferring and decoding the model output in the app side, we present detection results that include bounding box position and class label in either real-time or local mode. Our model is well-suited for mobile devices with negligible inference time and small memory requirements with a deep learning algorithm.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge