Kartik Pandey

CAVE: Controllable Authorship Verification Explanations

Jun 24, 2024

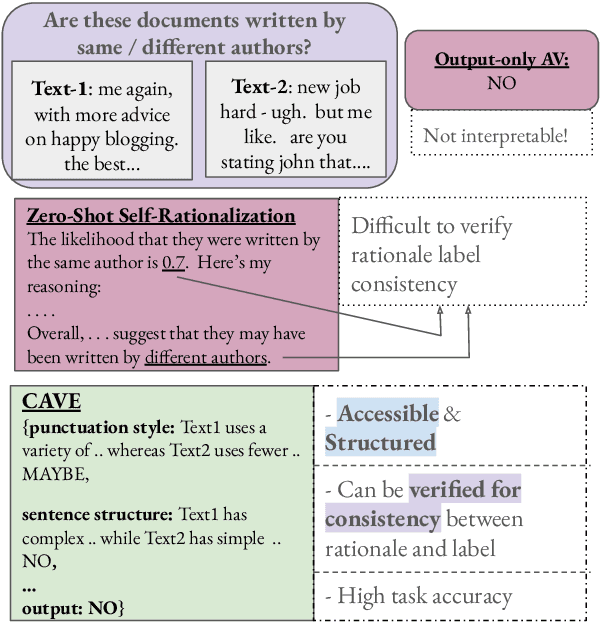

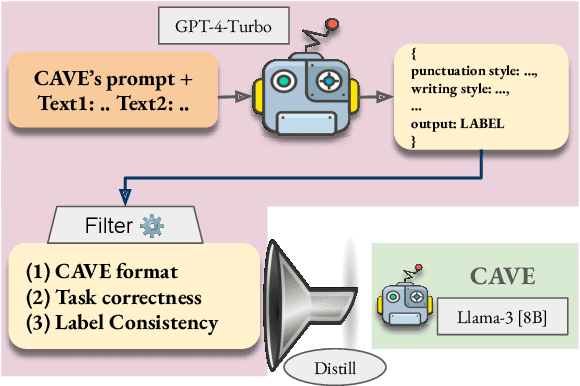

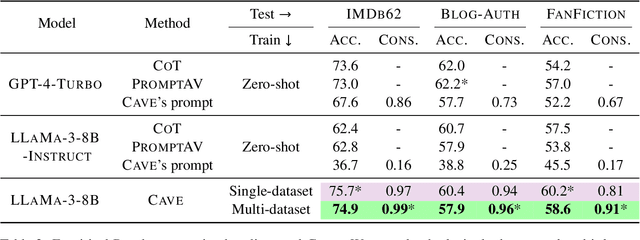

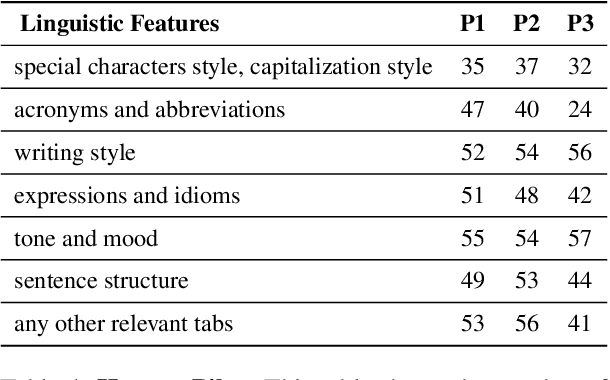

Abstract: Authorship Verification (AV), which asks whether two documents have the same author, is essential for many sensitive real-life applications. AV is often used in proprietary domains that require a private, offline model, making SOTA online models like ChatGPT undesirable. Other SOTA systems use methods such as Siamese Networks that are uninterpretable and hence cannot be trusted in high-stakes applications. In this work, we take the first step toward addressing these challenges with our model CAVE (Controllable Authorship Verification Explanations). CAVE generates free-text AV explanations that are controlled to be 1) structured (decomposable into sub-explanations with respect to relevant linguistic features), and 2) easily verified for explanation-label consistency (via intermediate labels in sub-explanations). We train a Llama-3-8B as CAVE; since there are no human-written corpora for AV explanations, we sample silver-standard explanations from GPT-4-TURBO and distill them into a pretrained Llama-3-8B. Results on three difficult AV datasets, IMdB2, Blog-Auth, and FanFiction, show that CAVE generates high-quality explanations (as measured by automatic and human evaluation) and achieves competitive task accuracies.
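To make the idea of explanation-label consistency concrete, here is a minimal Python sketch. It is not the paper's method: the abstract does not specify the explanation format or the consistency rule. The dictionary layout, the feature names, the `same`/`different` label values, and the majority-vote rule are all assumptions chosen for illustration.

```python
from collections import Counter


def is_consistent(explanation):
    """Check whether the final label agrees with the intermediate labels.

    Assumed (hypothetical) rule: the final label should match the strict
    majority of the intermediate labels in the sub-explanations. An empty
    list or a tie for first place is treated as not verifiable.
    """
    labels = [sub["label"] for sub in explanation["sub_explanations"]]
    if not labels:
        return False
    ranked = Counter(labels).most_common()
    # A tie between the two most frequent labels gives no clear majority.
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return False
    return ranked[0][0] == explanation["final_label"]


# Hypothetical structured explanation: one sub-explanation per linguistic
# feature, each carrying its own intermediate label.
example = {
    "sub_explanations": [
        {"feature": "punctuation", "text": "Both use frequent ellipses.", "label": "same"},
        {"feature": "tone", "text": "Both are informal and sarcastic.", "label": "same"},
        {"feature": "vocabulary", "text": "One uses more technical terms.", "label": "different"},
    ],
    "final_label": "same",
}

consistent = is_consistent(example)
```

Because each sub-explanation carries its own label, a check like this can run automatically over generated explanations without reading the free text, which is the property the abstract describes as "easily verified".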
