Karthikeyan Sundaresan

Fast Localization and Tracking in City-Scale UWB Networks

Oct 03, 2023Abstract:Localization of networked nodes is an essential problem in emerging applications, including first-responder navigation, automated manufacturing lines, vehicular and drone navigation, asset navigation and tracking, Internet of Things and 5G communication networks. In this paper, we present Locate3D, a novel system for peer-to-peer node localization and orientation estimation in large networks. Unlike traditional range-only methods, Locate3D introduces angle-of-arrival (AoA) data as an added network topology constraint. The system solves three key challenges: it uses angles to reduce the number of measurements required by 4x and jointly use range and angle data for location estimation. We develop a spanning-tree approach for fast location updates, and to ensure the output graphs are rigid and uniquely realizable, even in occluded or weakly connected areas. Locate3D cuts down latency by up to 75% without compromising accuracy, surpassing standard range-only solutions. It has a 10.2 meters median localization error for large-scale networks (30,000 nodes, 15 anchors spread across 14km square) and 0.5 meters for small-scale networks (10 nodes).

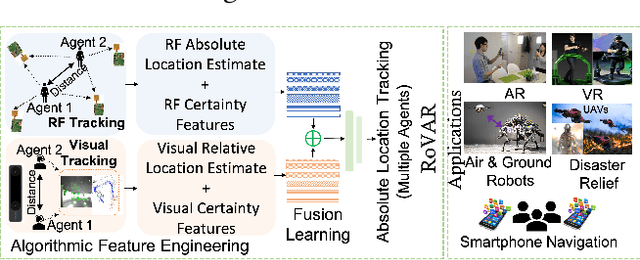

RoVaR: Robust Multi-agent Tracking through Dual-layer Diversity in Visual and RF Sensor Fusion

Jul 06, 2022

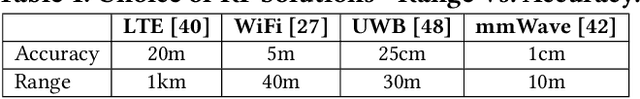

Abstract:The plethora of sensors in our commodity devices provides a rich substrate for sensor-fused tracking. Yet, today's solutions are unable to deliver robust and high tracking accuracies across multiple agents in practical, everyday environments - a feature central to the future of immersive and collaborative applications. This can be attributed to the limited scope of diversity leveraged by these fusion solutions, preventing them from catering to the multiple dimensions of accuracy, robustness (diverse environmental conditions) and scalability (multiple agents) simultaneously. In this work, we take an important step towards this goal by introducing the notion of dual-layer diversity to the problem of sensor fusion in multi-agent tracking. We demonstrate that the fusion of complementary tracking modalities, - passive/relative (e.g., visual odometry) and active/absolute tracking (e.g., infrastructure-assisted RF localization) offer a key first layer of diversity that brings scalability while the second layer of diversity lies in the methodology of fusion, where we bring together the complementary strengths of algorithmic (for robustness) and data-driven (for accuracy) approaches. RoVaR is an embodiment of such a dual-layer diversity approach that intelligently attends to cross-modal information using algorithmic and data-driven techniques that jointly share the burden of accurately tracking multiple agents in the wild. Extensive evaluations reveal RoVaR's multi-dimensional benefits in terms of tracking accuracy (median of 15cm), robustness (in unseen environments), light weight (runs in real-time on mobile platforms such as Jetson Nano/TX2), to enable practical multi-agent immersive applications in everyday environments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge