Karthik Raghunathan

LLM-Based Insight Extraction for Contact Center Analytics and Cost-Efficient Deployment

Mar 24, 2025

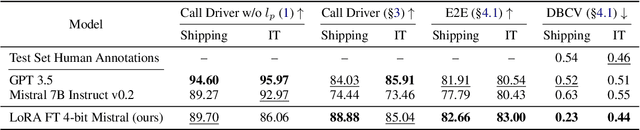

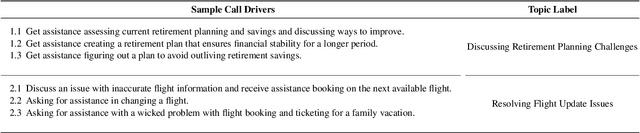

Abstract:Large Language Models have transformed the Contact Center industry, manifesting in enhanced self-service tools, streamlined administrative processes, and augmented agent productivity. This paper delineates our system that automates call driver generation, which serves as the foundation for tasks such as topic modeling, incoming call classification, trend detection, and FAQ generation, delivering actionable insights for contact center agents and administrators to consume. We present a cost-efficient LLM system design, with 1) a comprehensive evaluation of proprietary, open-weight, and fine-tuned models and 2) cost-efficient strategies, and 3) the corresponding cost analysis when deployed in production environments.

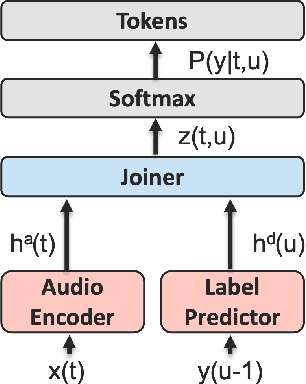

Self-consistent context aware conformer transducer for speech recognition

Feb 09, 2024

Abstract:We propose a novel neural network architecture based on conformer transducer that adds contextual information flow to the ASR systems. Our method improves the accuracy of recognizing uncommon words while not harming the word error rate of regular words. We explore the uncommon words accuracy improvement when we use the new model and/or shallow fusion with context language model. We found that combination of both provides cumulative gain in uncommon words recognition accuracy.

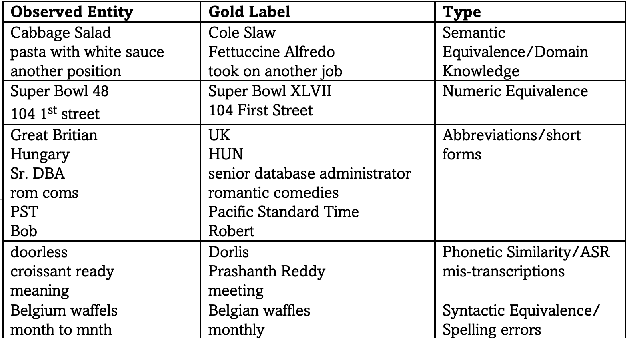

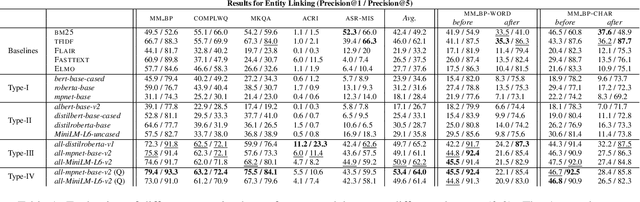

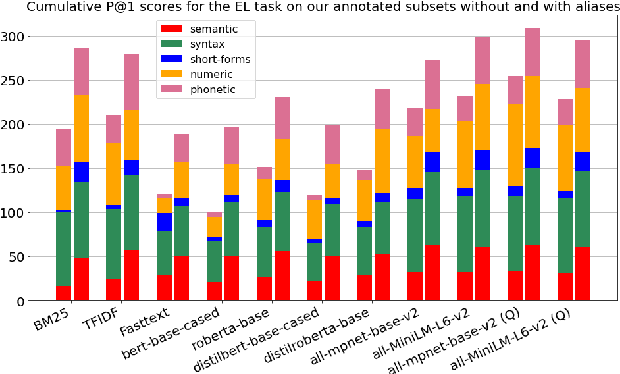

Evaluating Pretrained Transformer Models for Entity Linking in Task-Oriented Dialog

Dec 15, 2021

Abstract:The wide applicability of pretrained transformer models (PTMs) for natural language tasks is well demonstrated, but their ability to comprehend short phrases of text is less explored. To this end, we evaluate different PTMs from the lens of unsupervised Entity Linking in task-oriented dialog across 5 characteristics -- syntactic, semantic, short-forms, numeric and phonetic. Our results demonstrate that several of the PTMs produce sub-par results when compared to traditional techniques, albeit competitive to other neural baselines. We find that some of their shortcomings can be addressed by using PTMs fine-tuned for text-similarity tasks, which illustrate an improved ability in comprehending semantic and syntactic correspondences, as well as some improvements for short-forms, numeric and phonetic variations in entity mentions. We perform qualitative analysis to understand nuances in their predictions and discuss scope for further improvements. Code can be found at https://github.com/murali1996/el_tod

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge