Karl-Günter Gaßmann

Stride Length Estimation with Deep Learning

Mar 09, 2017

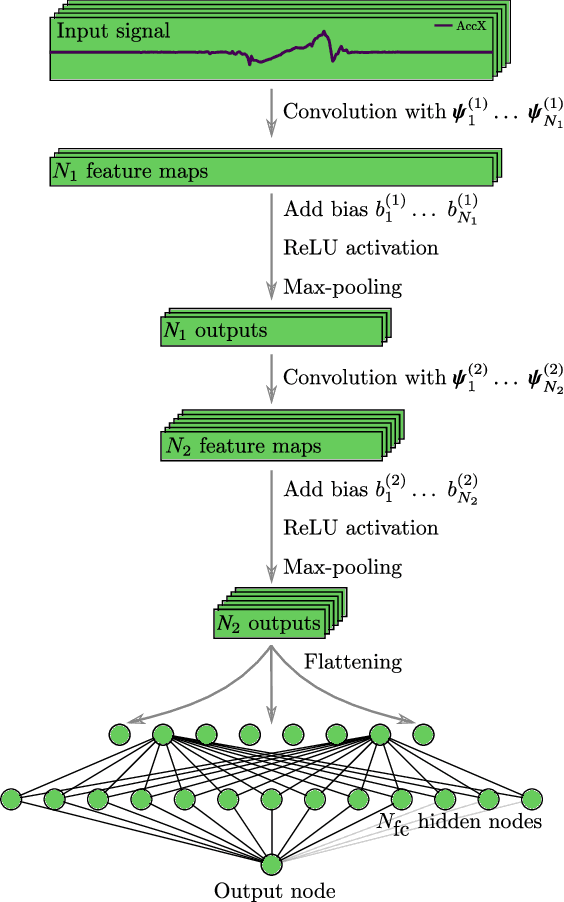

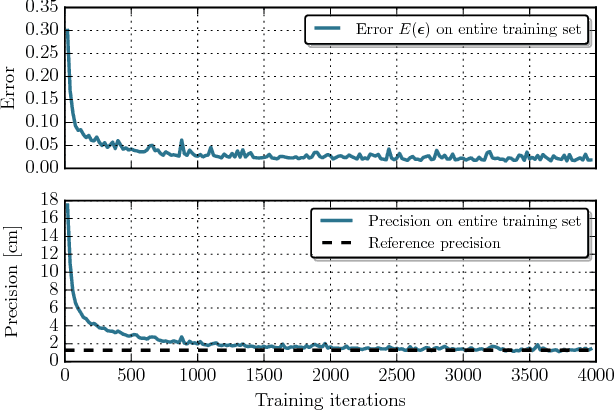

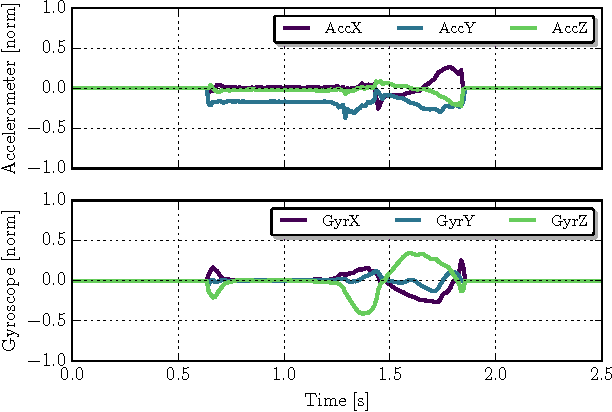

Abstract: Accurate estimation of spatial gait characteristics is critical to assess motor impairments resulting from neurological or musculoskeletal disease. Currently, however, methodological constraints limit the clinical applicability of state-of-the-art double integration approaches to gait patterns with a clear zero-velocity phase. We describe a novel approach to stride length estimation that uses deep convolutional neural networks to map stride-specific inertial sensor data to the resulting stride length. The model is trained on a publicly available and clinically relevant benchmark dataset consisting of 1220 strides from 101 geriatric patients. Evaluation uses 10-fold cross-validation and three different stride definitions. The best results are achieved with strides defined from mid-stance to mid-stance, with an average accuracy and precision of 0.01 $\pm$ 5.37 cm, but performance does not strongly depend on stride definition. The achieved precision outperforms state-of-the-art methods evaluated on this benchmark dataset by 3.0 cm (36%). Because it is independent of stride definition, the proposed method is not subject to the methodological constraints that limit the applicability of state-of-the-art double integration methods. Furthermore, precision on the benchmark dataset was improved. With more precise mobile stride length estimation, new insights into the progression of neurological disease or its early indications might be gained. For the same reason, diseases that have not yet been studied with mobile gait analysis can now be investigated by re-training and applying the proposed method.
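The reported figures can be read as the mean (accuracy) and standard deviation (precision) of the signed error between estimated and reference stride lengths. The following is a minimal sketch of that reading, not the authors' evaluation code. The function names are hypothetical, the use of the sample standard deviation is an assumption, and the baseline precision of 8.37 cm is inferred from the abstract's own numbers (5.37 cm plus the stated 3.0 cm gain) rather than quoted from it.

```python
import statistics


def accuracy_precision(estimated_cm, reference_cm):
    """Return (mean, standard deviation) of the signed errors in cm.

    Assumption: precision is the sample standard deviation of the
    per-stride error; the abstract does not state which estimator is used.
    """
    errors = [e - r for e, r in zip(estimated_cm, reference_cm)]
    return statistics.mean(errors), statistics.stdev(errors)


def relative_improvement(new_precision_cm, absolute_gain_cm):
    """Relative precision gain against a baseline that is worse by absolute_gain_cm."""
    baseline_cm = new_precision_cm + absolute_gain_cm  # inferred baseline
    return absolute_gain_cm / baseline_cm


# Toy stride lengths (made up for illustration, not data from the paper).
mean_err, std_err = accuracy_precision([101.0, 99.0, 100.0, 102.0],
                                       [100.0, 100.0, 100.0, 100.0])
print(f"{mean_err:.2f} +/- {std_err:.2f} cm")

# Consistency check of the abstract's "3.0 cm (36%)" statement.
print(f"{relative_improvement(5.37, 3.0):.0%}")
```

Under these assumptions, a 3.0 cm gain against an 8.37 cm baseline is about 36%, which matches the percentage quoted in the abstract.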

Sensor-based Gait Parameter Extraction with Deep Convolutional Neural Networks

Jan 13, 2017

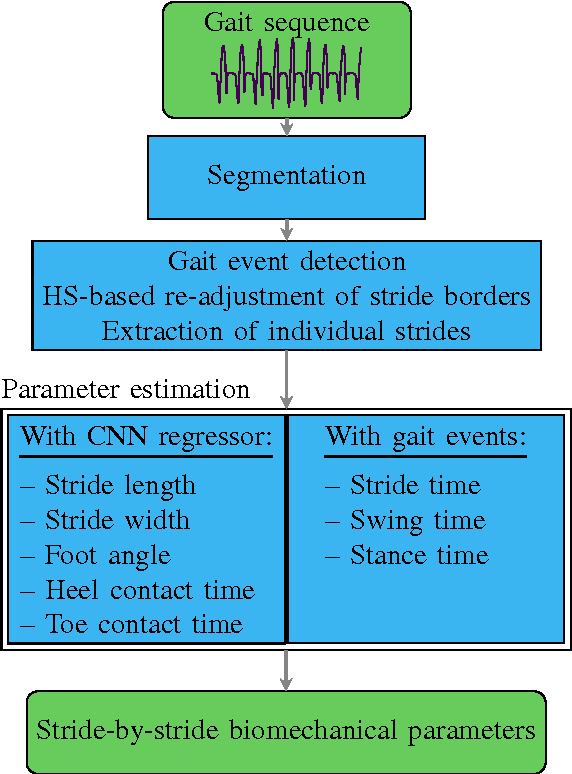

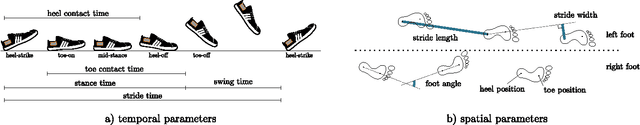

Abstract: Measurement of stride-related, biomechanical parameters is the common rationale for objective gait impairment scoring. State-of-the-art double integration approaches to extract these parameters from inertial sensor data are, however, limited in their clinical applicability due to the underlying assumptions. To overcome this, we present a method based on deep convolutional neural networks that translates the abstract information provided by wearable sensors into context-related expert features. For mobile gait analysis, this enables integration-free and data-driven extraction of a set of 8 spatio-temporal stride parameters. To this end, two modelling approaches are compared: a combined network that estimates all parameters of interest, and an ensemble approach that spawns a less complex network for each parameter individually. The ensemble approach outperforms the combined model in the current application. On a clinically relevant and publicly available benchmark dataset, we estimate stride length, width and medio-lateral change in foot angle with errors of ${-0.15\pm6.09}$ cm, ${-0.09\pm4.22}$ cm and ${0.13 \pm 3.78^\circ}$ respectively. Stride, swing and stance time as well as heel and toe contact times are estimated to within ${\pm 0.07}$, ${\pm0.05}$, ${\pm 0.07}$, ${\pm0.07}$ and ${\pm0.12}$ s respectively. These results are comparable to, and in parts outperform or define, the state of the art. Our results further indicate that the proposed change in methodology could substitute assumption-driven double-integration methods and enable mobile assessment of spatio-temporal stride parameters in clinically critical situations, for example in the case of spastic gait impairments.
