Karl Munson

Out of style: Misadventures with LLMs and code style transfer

Jun 14, 2024

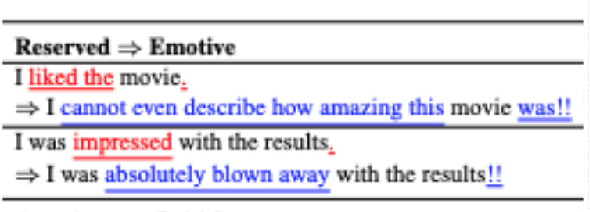

Abstract: Like text, programs have styles, and certain programming styles are more desirable than others for program readability, maintainability, and performance. Code style transfer, however, is difficult to automate except for trivial style guidelines such as limits on line length. Inspired by the success of using language models for text style transfer, we investigate whether code language models can perform code style transfer. Code style transfer, unlike text style transfer, has rigorous requirements: the system needs to identify lines of code to change, change them correctly, and leave the rest of the program untouched. We designed CSB (Code Style Benchmark), a benchmark suite of code style transfer tasks across five categories, including converting for-loops to list comprehensions, eliminating duplication in code, and adding decorators to methods. We then used these tests to see whether large pre-trained code language models or fine-tuned models perform style transfer correctly, based on rigorous metrics that check that the transfer did occur and that the code still passes functional tests. Surprisingly, language models failed to perform all of the tasks, suggesting that they perform poorly on tasks that require code understanding. We will make available the large-scale corpora to help the community build better code models.
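To make the two-part success criterion concrete (the style change must actually occur, and the program must still behave the same), here is a minimal sketch for the for-loop to list-comprehension task. It is not the CSB implementation: the sample programs and the helper names `count_nodes`, `load_function`, and `transfer_succeeded` are hypothetical, and the check assumes the target function is pure and small enough to compare on a handful of inputs.

```python
import ast

# Hypothetical "before" program written in the loop style.
LOOP_STYLE = """
def squares(nums):
    result = []
    for n in nums:
        result.append(n * n)
    return result
"""

# Hypothetical "after" program, as a style-transfer system might emit it.
COMPREHENSION_STYLE = """
def squares(nums):
    return [n * n for n in nums]
"""


def count_nodes(source, node_type):
    """Count AST nodes of the given type in a piece of Python source."""
    return sum(isinstance(node, node_type) for node in ast.walk(ast.parse(source)))


def load_function(source, name):
    """Execute the source in a fresh namespace and return the named function."""
    namespace = {}
    exec(source, namespace)
    return namespace[name]


def transfer_succeeded(before, after, name, test_inputs):
    """Check that the loop became a comprehension and behaviour is unchanged."""
    style_changed = (
        count_nodes(before, ast.For) > 0
        and count_nodes(after, ast.For) == 0
        and count_nodes(after, ast.ListComp) > 0
    )
    f_before = load_function(before, name)
    f_after = load_function(after, name)
    behaviour_kept = all(f_before(x) == f_after(x) for x in test_inputs)
    return style_changed and behaviour_kept


print(transfer_succeeded(LOOP_STYLE, COMPREHENSION_STYLE, "squares", [[], [1, 2, 3]]))
```

A real benchmark would also need to confirm that lines outside the targeted loop were left untouched, which this sketch does not attempt.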

Exploring Code Style Transfer with Neural Networks

Sep 13, 2022

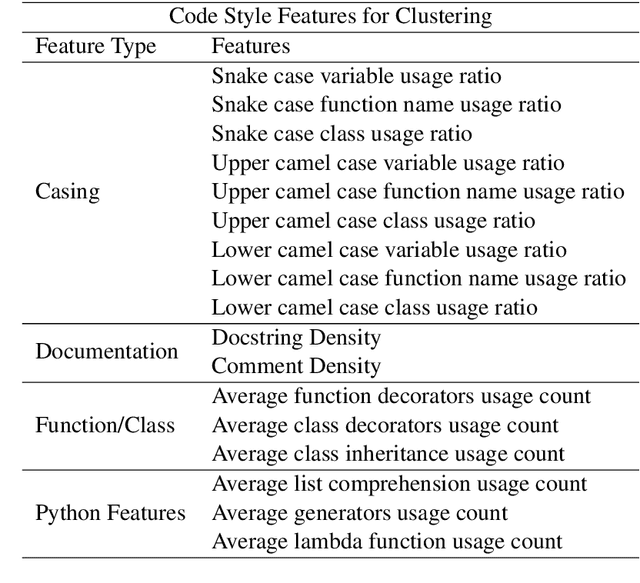

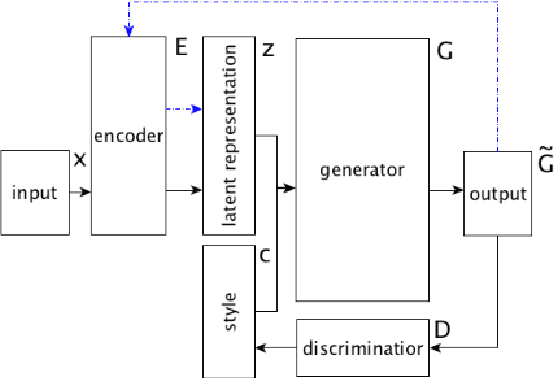

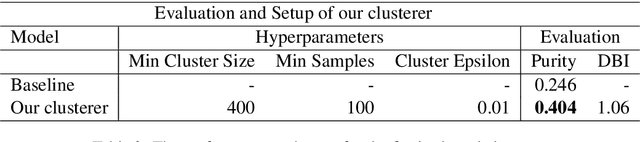

Abstract: Style is a significant component of natural language text, reflecting a change in the tone of text while keeping the underlying information the same. Even though programming languages have strict syntax rules, they also have style: code with the same functionality can be written using different language features. However, programming style is difficult to quantify, so as part of this work we define style attributes, specifically for Python. To build a definition of style, we used hierarchical clustering to capture a style definition without needing to specify transformations. In addition to defining style, we explore the capability of a pre-trained code language model to capture information about code style. To do this, we fine-tuned pre-trained code language models and evaluated their performance on code style transfer tasks.
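As a rough illustration of what a quantifiable Python style attribute could look like, the sketch below counts how often a few language constructs appear in a snippet. The choice of constructs in `STYLE_NODES` and the function name `style_vector` are assumptions made for this example, not the attributes defined in the paper; vectors of this kind are one plausible input to a clustering step.

```python
import ast
from collections import Counter

# Hypothetical set of constructs treated as style signals in this sketch.
STYLE_NODES = (ast.For, ast.While, ast.ListComp, ast.Lambda, ast.With)


def style_vector(source):
    """Return per-construct counts for a snippet, in STYLE_NODES order."""
    counts = Counter(type(node).__name__ for node in ast.walk(ast.parse(source)))
    return [counts[node_type.__name__] for node_type in STYLE_NODES]


loop_version = "out = []\nfor x in range(3):\n    out.append(x)\n"
comprehension_version = "out = [x for x in range(3)]\n"

print(style_vector(loop_version))
print(style_vector(comprehension_version))
```

Two snippets with the same behaviour yield different vectors here, which is the property a style definition needs: it separates how code is written from what it computes.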
