Kai-Ling Lo

GPoeT-2: A GPT-2 Based Poem Generator

May 18, 2022

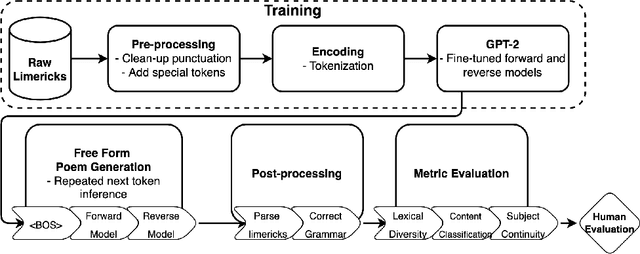

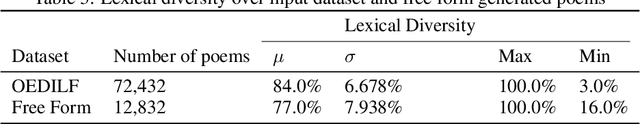

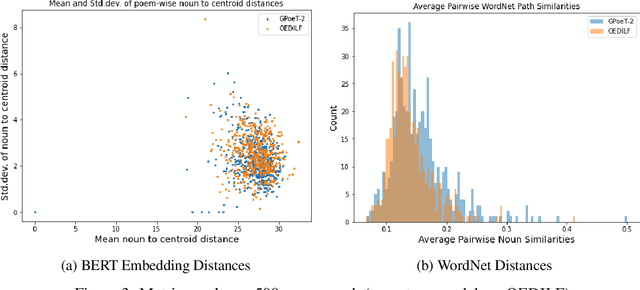

Abstract: This project aims to produce the next volume of machine-generated poetry, a complex art form that can be structured or unstructured and that carries depth of meaning between the lines. GPoeT-2 is based on fine-tuning a state-of-the-art natural language model (i.e., GPT-2) to generate limericks, typically humorous structured poems consisting of five lines with an AABBA rhyming scheme. With a two-stage generation system utilizing both forward and reverse language modeling, GPoeT-2 is capable of freely generating limericks on diverse topics while following the rhyming structure without any seed phrase or a posteriori constraints. Based on the automated generation process, we explore a wide variety of evaluation metrics to quantify "good poetry," including syntactical correctness, lexical diversity, and subject continuity. Finally, we present a collection of 94 categorized limericks that rank highly on the explored "good poetry" metrics to provoke human creativity.
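Two ideas from this abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation: the abstract does not say how lexical diversity is measured or how the reverse language model is trained. The sketch assumes a type-token ratio as one common proxy for lexical diversity, and assumes that reverse language modeling operates on lines whose word order has been reversed, so that each line's final (rhyming) word comes first. The function names `type_token_ratio` and `reverse_lines` are hypothetical.

```python
def type_token_ratio(text: str) -> float:
    """Distinct words divided by total words (assumed diversity proxy)."""
    # Lowercase and strip surrounding punctuation so "Cat," and "cat" match.
    words = [w.strip(".,;:!?\"'").lower() for w in text.split()]
    words = [w for w in words if w]
    return len(set(words)) / len(words) if words else 0.0


def reverse_lines(poem: str) -> str:
    """Reverse the word order within each line, keeping line order intact.

    Assumption: a reverse language model sees text in this form, so the
    last word of every line (the rhyme position) is generated first.
    """
    return "\n".join(
        " ".join(reversed(line.split())) for line in poem.splitlines()
    )


if __name__ == "__main__":
    print(type_token_ratio("the cat the dog"))
    print(reverse_lines("a b c\nd e"))
```

A higher ratio means fewer repeated words; because the ratio tends to fall as texts get longer, it is best compared across poems of similar length, such as five-line limericks.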

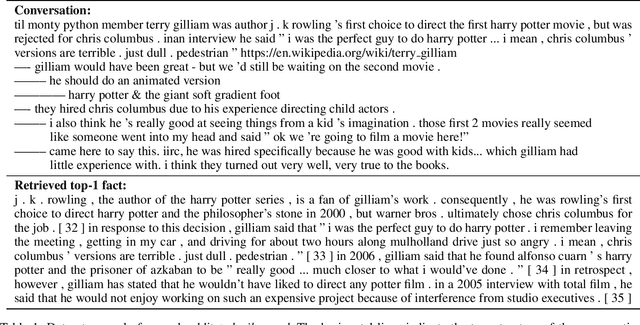

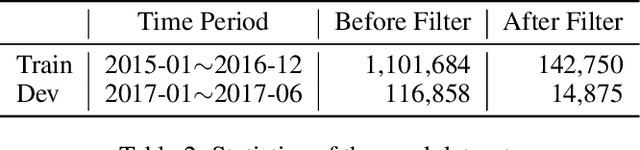

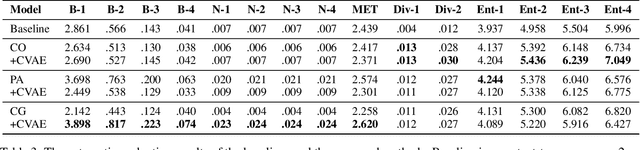

Knowledge-Grounded Response Generation with Deep Attentional Latent-Variable Model

Mar 23, 2019

Abstract: End-to-end dialogue generation has achieved promising results without using handcrafted features and attributes specific to each task and corpus. However, one of the fatal drawbacks of such approaches is that they are unable to generate informative utterances, which limits their use in some real-world conversational applications. This paper attempts to generate diverse and informative responses with a variational generation model, which contains a joint attention mechanism conditioned on information from both dialogue contexts and extra knowledge.

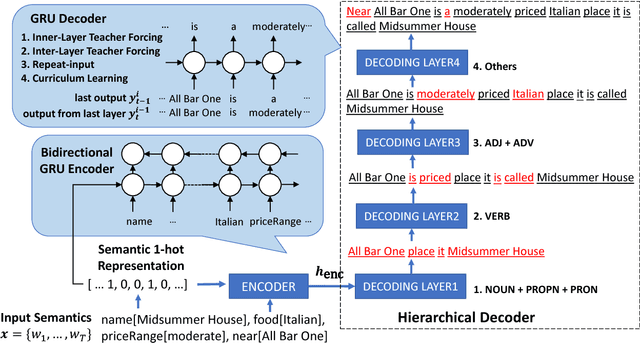

Natural Language Generation by Hierarchical Decoding with Linguistic Patterns

Aug 09, 2018

Abstract: Natural language generation (NLG) is a critical component in spoken dialogue systems. Classic NLG can be divided into two phases: (1) sentence planning: deciding on the overall sentence structure, (2) surface realization: determining specific word forms and flattening the sentence structure into a string. Many simple NLG models are based on recurrent neural networks (RNNs) and sequence-to-sequence (seq2seq) models, which use an encoder-decoder structure; these NLG models generate sentences from scratch by jointly optimizing sentence planning and surface realization using a simple cross-entropy loss training criterion. However, the simple encoder-decoder architecture usually struggles to generate complex and long sentences, because the decoder has to learn all grammar and diction knowledge. This paper introduces a hierarchical decoding NLG model based on linguistic patterns at different levels, and shows that the proposed method outperforms the traditional one with a smaller model size. Furthermore, the design of the hierarchical decoding is flexible and easily extensible to various NLG systems.
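The classic two-phase split described in this abstract can be made concrete with a toy, rule-based Python sketch. It illustrates only the traditional pipeline (sentence planning followed by surface realization), not the hierarchical decoder the paper proposes. The slot names, the clause templates, and the functions `plan_sentence` and `realize` are all assumptions made for this example.

```python
# Hypothetical slot ordering and clause templates for a restaurant domain.
SLOT_ORDER = ["name", "food", "area"]
CLAUSES = {"food": "serves {} food", "area": "is located in the {}"}


def plan_sentence(slots: dict) -> list:
    """Sentence planning: decide which slots to mention and in what order."""
    return [(key, slots[key]) for key in SLOT_ORDER if key in slots]


def realize(plan: list) -> str:
    """Surface realization: choose word forms and flatten the plan to a string."""
    subject = "It"  # fallback subject when no name slot is present
    clauses = []
    for key, value in plan:
        if key == "name":
            subject = value
        else:
            clauses.append(CLAUSES[key].format(value))
    if not clauses:
        return subject + "."
    return subject + " " + " and ".join(clauses) + "."


if __name__ == "__main__":
    slots = {"area": "centre", "name": "Bella", "food": "Italian"}
    print(realize(plan_sentence(slots)))
```

In this toy version the two phases are separate hand-written functions; the seq2seq models mentioned in the abstract instead learn both phases jointly from data.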
