Juan Pablo García Amboage

Model Performance Prediction for Hyperparameter Optimization of Deep Learning Models Using High Performance Computing and Quantum Annealing

Nov 29, 2023

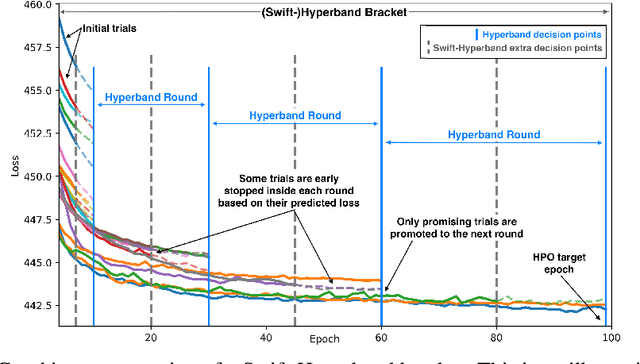

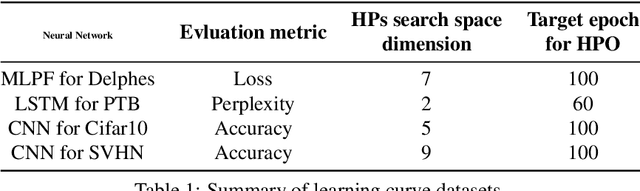

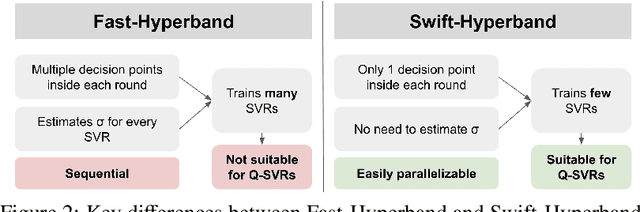

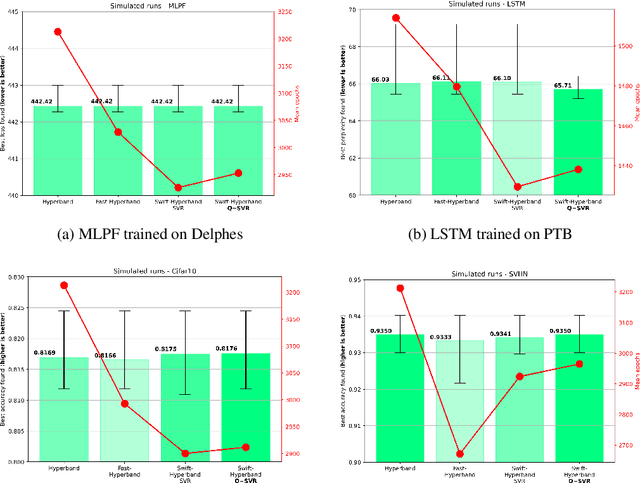

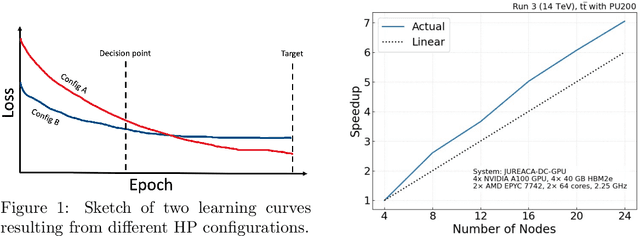

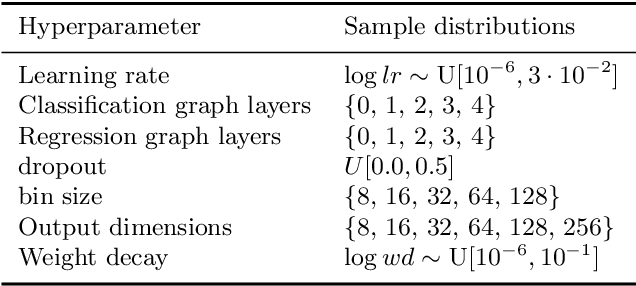

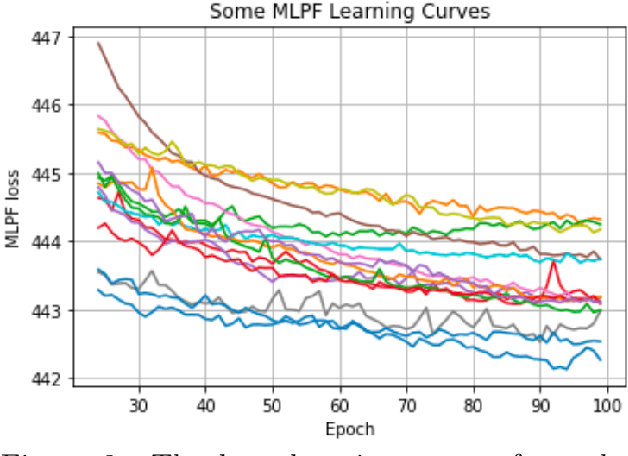

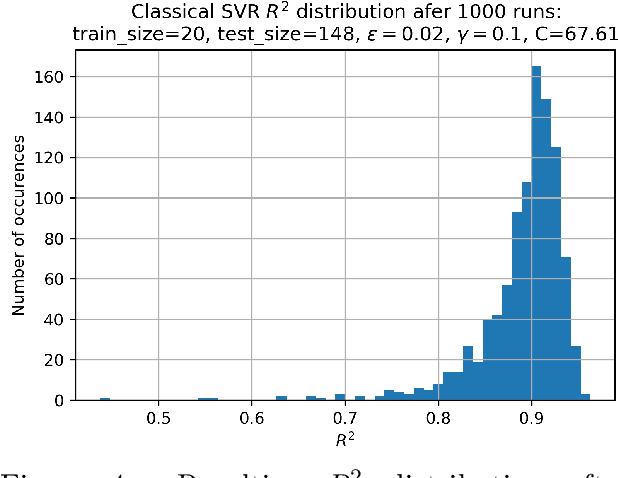

Abstract:Hyperparameter Optimization (HPO) of Deep Learning-based models tends to be a compute resource intensive process as it usually requires to train the target model with many different hyperparameter configurations. We show that integrating model performance prediction with early stopping methods holds great potential to speed up the HPO process of deep learning models. Moreover, we propose a novel algorithm called Swift-Hyperband that can use either classical or quantum support vector regression for performance prediction and benefit from distributed High Performance Computing environments. This algorithm is tested not only for the Machine-Learned Particle Flow model used in High Energy Physics, but also for a wider range of target models from domains such as computer vision and natural language processing. Swift-Hyperband is shown to find comparable (or better) hyperparameters as well as using less computational resources in all test cases.

Hyperparameter optimization, quantum-assisted model performance prediction, and benchmarking of AI-based High Energy Physics workloads using HPC

Mar 27, 2023

Abstract:Training and Hyperparameter Optimization (HPO) of deep learning-based AI models are often compute resource intensive and calls for the use of large-scale distributed resources as well as scalable and resource efficient hyperparameter search algorithms. This work studies the potential of using model performance prediction to aid the HPO process carried out on High Performance Computing systems. In addition, a quantum annealer is used to train the performance predictor and a method is proposed to overcome some of the problems derived from the current limitations in quantum systems as well as to increase the stability of solutions. This allows for achieving results on a quantum machine comparable to those obtained on a classical machine, showing how quantum computers could be integrated within classical machine learning tuning pipelines. Furthermore, results are presented from the development of a containerized benchmark based on an AI-model for collision event reconstruction that allows us to compare and assess the suitability of different hardware accelerators for training deep neural networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge