Joshua Reid

Improving the Performance of the LSTM and HMM Models via Hybridization

Jul 09, 2019

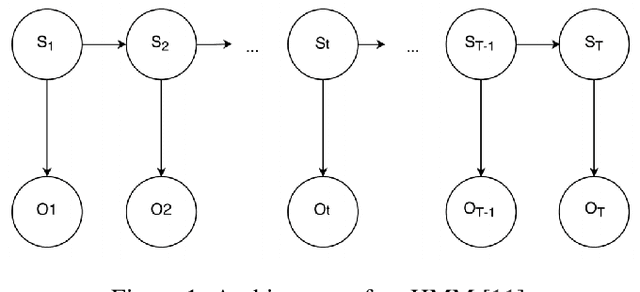

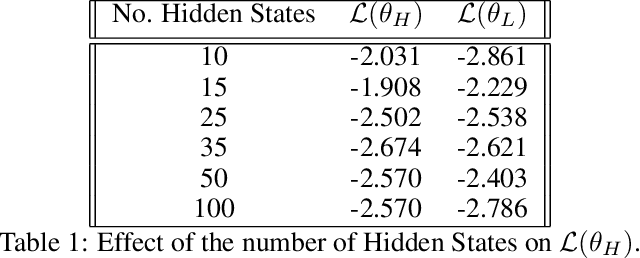

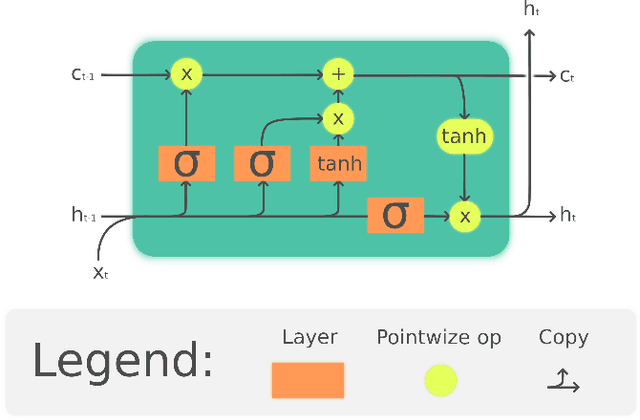

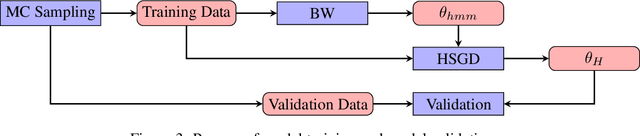

Abstract: Language models based on deep neural networks and traditional stochastic modelling have become both highly functional and effective in recent times. In this work, a general survey of the two types of language modelling is conducted. We investigate the effectiveness of combining the Hidden Markov Model (HMM) with the Long Short-Term Memory (LSTM) model via a process known as hybridization, which we introduce in this paper. This process involves substituting the hidden state probabilities of the HMM into those of the LSTM. We conduct Monte Carlo sampling to produce training and validation data in order to obtain robust results. The experimental results show an increase in the predictive accuracy of the LSTM model when hybridized with the HMM.

Multi-Armed Bandit Strategies for Non-Stationary Reward Distributions and Delayed Feedback Processes

Feb 22, 2019

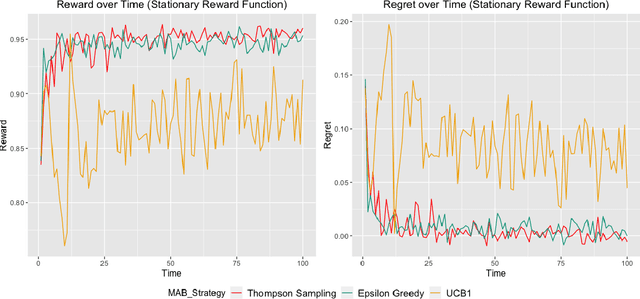

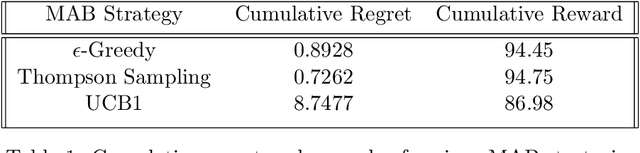

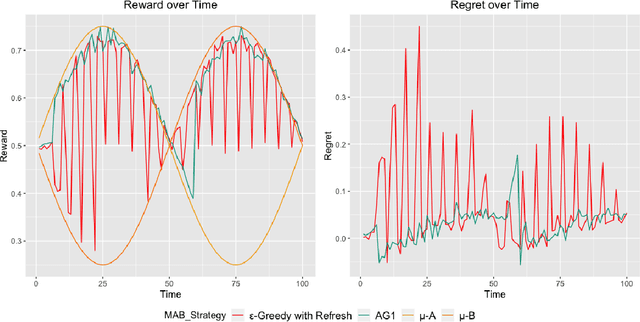

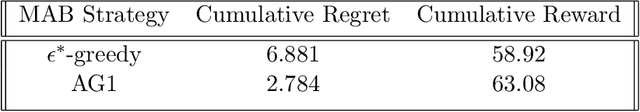

Abstract: A survey is performed of various Multi-Armed Bandit (MAB) strategies in order to examine their performance under non-stationary stochastic reward functions combined with delayed feedback. We run several MAB simulations of an online eCommerce platform for grocery pickup, optimizing for product availability. We evaluate several popular MAB strategies, such as $\epsilon$-greedy, UCB1, and Thompson Sampling, and compare their performance in terms of regret minimization. The analysis is run in a scenario where the reward function is non-stationary and the process experiences delayed feedback, meaning the reward function is not immediately responsive to the arm played. We devise a new Bayesian technique (BAG1) tailored for non-stationary reward functions in the delayed feedback scenario. The simulation results show superior performance for BAG1 in terms of regret minimization compared to traditional MAB strategies.
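For readers unfamiliar with the three baseline strategies named in the abstract, the following is a minimal sketch of $\epsilon$-greedy, UCB1, and Thompson Sampling on a Bernoulli bandit, with cumulative regret as the metric. It is an illustration only: it assumes stationary rewards and immediate feedback, which is simpler than the setting the paper studies, and it does not attempt to reproduce BAG1 or the grocery simulation. All function names, the arm probabilities, and the parameter values are hypothetical.

```python
import math
import random


def epsilon_greedy(counts, successes, t, rng, epsilon=0.1):
    """Explore a uniformly random arm with probability epsilon, else exploit."""
    if rng.random() < epsilon:
        return rng.randrange(len(counts))
    means = [s / c if c else 0.0 for s, c in zip(successes, counts)]
    return max(range(len(counts)), key=means.__getitem__)


def ucb1(counts, successes, t, rng):
    """Play each arm once, then pick the arm with the highest UCB1 index."""
    for arm, c in enumerate(counts):
        if c == 0:
            return arm
    scores = [
        s / c + math.sqrt(2.0 * math.log(t) / c)
        for s, c in zip(successes, counts)
    ]
    return max(range(len(counts)), key=scores.__getitem__)


def thompson(counts, successes, t, rng):
    """Sample each arm's mean from its Beta(1 + wins, 1 + losses) posterior."""
    samples = [
        rng.betavariate(1 + s, 1 + c - s)
        for s, c in zip(successes, counts)
    ]
    return max(range(len(counts)), key=samples.__getitem__)


def simulate(policy, probs, horizon=2000, seed=0):
    """Run one policy on a stationary Bernoulli bandit (assumed setting).

    Returns the cumulative expected regret and the per-arm play counts.
    """
    rng = random.Random(seed)
    counts = [0] * len(probs)
    successes = [0] * len(probs)
    best = max(probs)
    regret = 0.0
    for t in range(1, horizon + 1):
        arm = policy(counts, successes, t, rng)
        reward = 1 if rng.random() < probs[arm] else 0
        counts[arm] += 1
        successes[arm] += reward
        regret += best - probs[arm]  # expected regret of this play
    return regret, counts


if __name__ == "__main__":
    arm_probs = [0.2, 0.5, 0.8]  # hypothetical success probabilities
    for name, policy in [
        ("epsilon-greedy", epsilon_greedy),
        ("UCB1", ucb1),
        ("Thompson Sampling", thompson),
    ]:
        regret, counts = simulate(policy, arm_probs)
        print(f"{name}: regret={regret:.1f}, plays per arm={counts}")
```

Extending this sketch toward the paper's setting would require, at a minimum, arm probabilities that change over time and a queue that releases each reward to the policy only after a delay. Both are left out here because the abstract does not specify how they were modelled.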
