José Ramon Herrero

Resource-aware Elastic Swap Random Forest for Evolving Data Streams

May 14, 2019

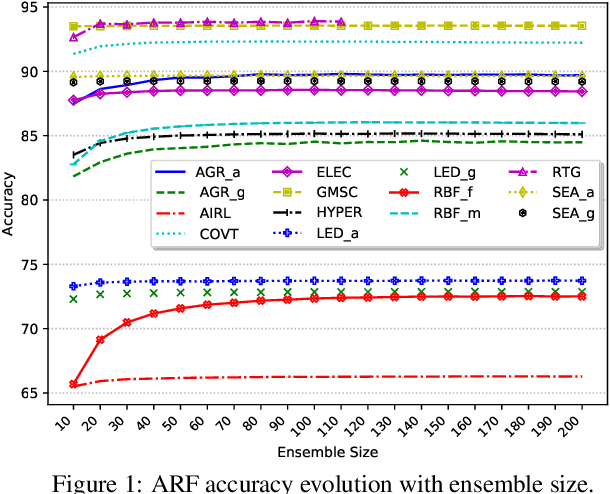

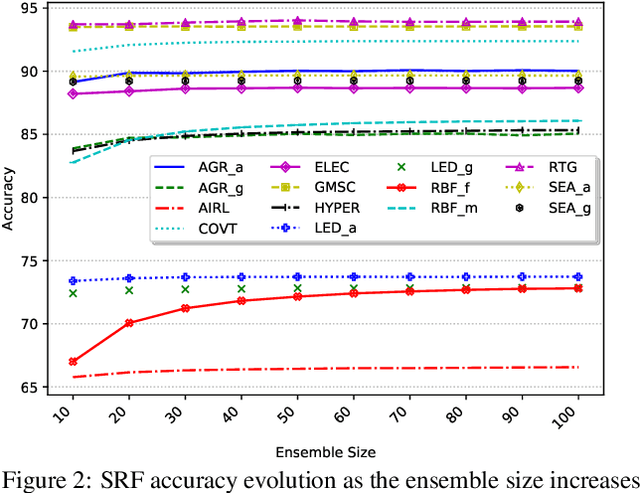

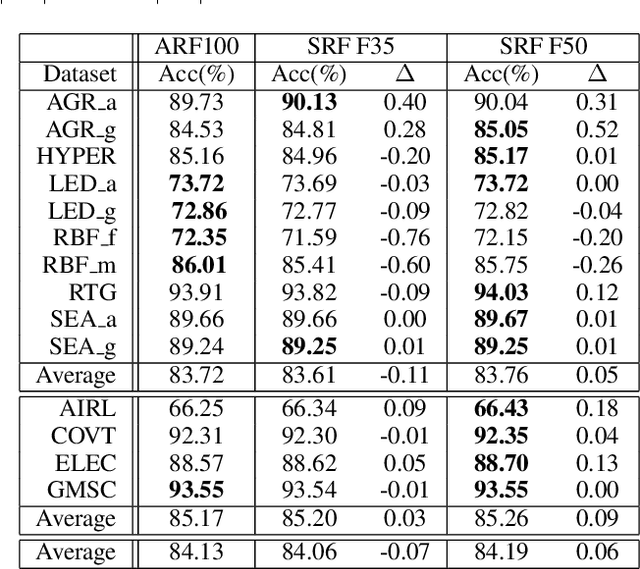

Abstract:Continual learning based on data stream mining deals with ubiquitous sources of Big Data arriving at high-velocity and in real-time. Adaptive Random Forest ({\em ARF}) is a popular ensemble method used for continual learning due to its simplicity in combining adaptive leveraging bagging with fast random Hoeffding trees. While the default ARF size provides competitive accuracy, it is usually over-provisioned resulting in the use of additional classifiers that only contribute to increasing CPU and memory consumption with marginal impact in the overall accuracy. This paper presents Elastic Swap Random Forest ({\em ESRF}), a method for reducing the number of trees in the ARF ensemble while providing similar accuracy. {\em ESRF} extends {\em ARF} with two orthogonal components: 1) a swap component that splits learners into two sets based on their accuracy (only classifiers with the highest accuracy are used to make predictions); and 2) an elastic component for dynamically increasing or decreasing the number of classifiers in the ensemble. The experimental evaluation of {\em ESRF} and comparison with the original {\em ARF} shows how the two new components contribute to reducing the number of classifiers up to one third while providing almost the same accuracy, resulting in speed-ups in terms of per-sample execution time close to 3x.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge