Jiri Ocenasek

Parallel Mixed Bayesian Optimization Algorithm: A Scaleup Analysis

Jun 03, 2004

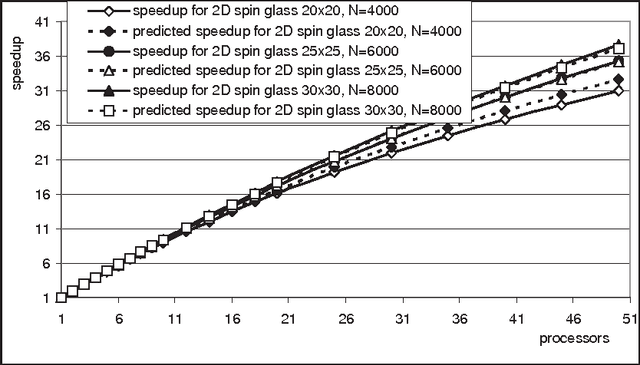

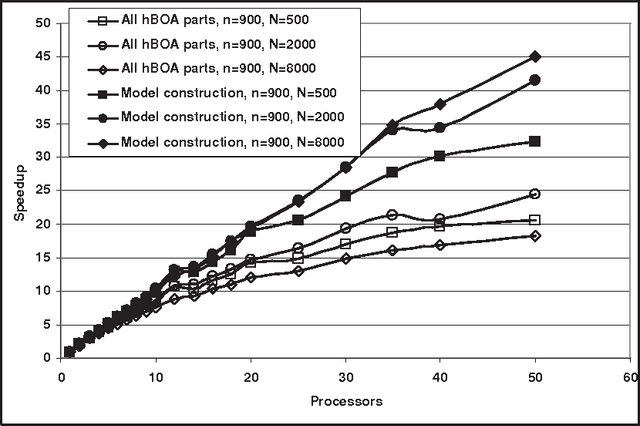

Abstract: Estimation of Distribution Algorithms have been proposed as a new paradigm for evolutionary optimization. This paper focuses on the parallelization of Estimation of Distribution Algorithms. More specifically, the paper discusses how to predict the performance of the parallel Mixed Bayesian Optimization Algorithm (MBOA), which is based on the parallel construction of Bayesian networks with decision trees. We determine the time complexity of parallel MBOA and compare it with experimental results obtained by solving the spin glass optimization problem. The empirical results fit the theoretical time complexity well, so the scalability and efficiency of parallel MBOA on unknown instances of spin glass benchmarks can be predicted. Furthermore, we derive guidelines that can be used to design effective parallel Estimation of Distribution Algorithms with speedup proportional to the number of variables in the problem.

Computational complexity and simulation of rare events of Ising spin glasses

Feb 15, 2004

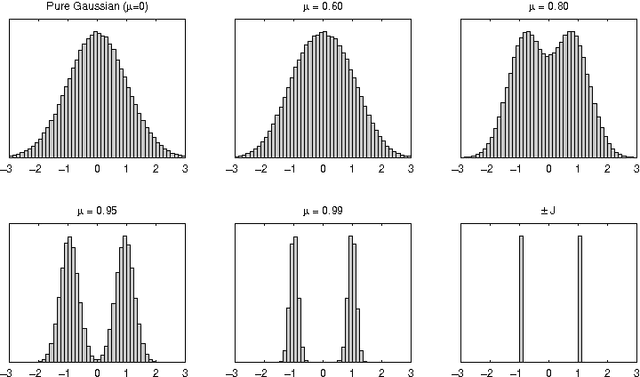

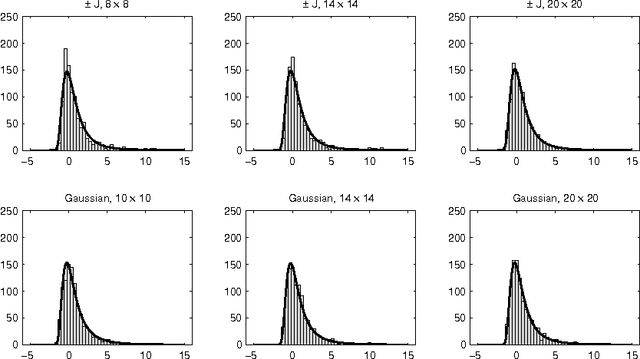

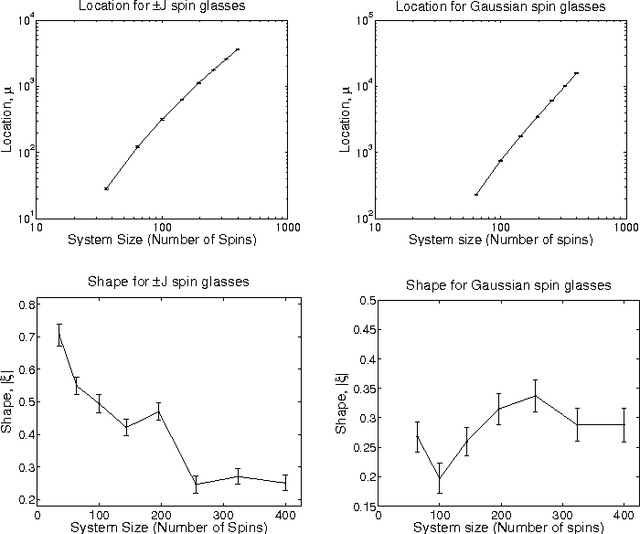

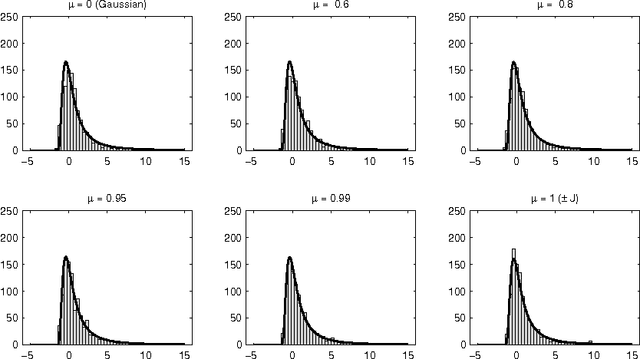

Abstract: We discuss the computational complexity of random 2D Ising spin glasses, which represent an interesting class of constraint satisfaction problems for black box optimization. Two extremal cases are considered: (1) the +/- J spin glass, and (2) the Gaussian spin glass. We also study a smooth transition between these two extremal cases. The computational complexity of all studied spin glass systems is found to be dominated by rare events of extremely hard spin glass samples. We show that the complexity of all studied spin glass systems is closely related to the Fréchet extreme value distribution. In a hybrid algorithm that combines the hierarchical Bayesian optimization algorithm (hBOA) with a deterministic bit-flip hill climber, the numbers of steps performed by the global searcher (hBOA) and by the local searcher both follow Fréchet distributions. Nonetheless, unlike in methods based purely on local search, the parameters of these distributions confirm good scalability of hBOA with local search. We further argue that standard performance measures for optimization algorithms, such as the average number of evaluations until convergence, can be misleading. Finally, our results indicate that for highly multimodal constraint satisfaction problems, such as Ising spin glasses, recombination-based search can provide qualitatively better results than mutation-based search.
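To make the two extremal cases and the local searcher concrete, here is a minimal Python sketch. It is not the authors' implementation. It assumes an n x n lattice (n >= 3) with periodic boundaries, the energy convention E = -sum J_ij s_i s_j, unit-variance Gaussian couplings, and a steepest-descent flip rule for the "deterministic bit-flip hill climber"; the abstract states none of these. The names `make_couplings`, `energy`, `flip_delta`, and `hill_climb` are hypothetical.

```python
import random


def make_couplings(n, kind="pm_j", seed=0):
    """Draw couplings for an n x n lattice (assumed periodic boundaries).

    kind="pm_j" gives the +/- J case, anything else the Gaussian case.
    right[i][j] couples (i, j) with (i, j+1); down[i][j] couples (i, j) with (i+1, j).
    """
    rng = random.Random(seed)

    def draw():
        if kind == "pm_j":
            return rng.choice((-1.0, 1.0))
        return rng.gauss(0.0, 1.0)  # unit variance is an assumption

    right = [[draw() for _ in range(n)] for _ in range(n)]
    down = [[draw() for _ in range(n)] for _ in range(n)]
    return right, down


def energy(spins, right, down):
    """E = -sum over bonds of J_ij * s_i * s_j (assumed sign convention)."""
    n = len(spins)
    total = 0.0
    for i in range(n):
        for j in range(n):
            total -= right[i][j] * spins[i][j] * spins[i][(j + 1) % n]
            total -= down[i][j] * spins[i][j] * spins[(i + 1) % n][j]
    return total


def flip_delta(spins, right, down, i, j):
    """Energy change caused by flipping spin (i, j), computed from its four bonds."""
    n = len(spins)
    field = (
        right[i][j] * spins[i][(j + 1) % n]
        + right[i][(j - 1) % n] * spins[i][(j - 1) % n]
        + down[i][j] * spins[(i + 1) % n][j]
        + down[(i - 1) % n][j] * spins[(i - 1) % n][j]
    )
    return 2.0 * spins[i][j] * field


def hill_climb(spins, right, down):
    """Flip the single best-improving spin until no flip lowers the energy.

    Modifies spins in place and returns the number of flips performed.
    Ties are broken by scan order, so the procedure is deterministic.
    """
    n = len(spins)
    flips = 0
    while True:
        best, best_pos = 0.0, None
        for i in range(n):
            for j in range(n):
                d = flip_delta(spins, right, down, i, j)
                if d < best - 1e-12:
                    best, best_pos = d, (i, j)
        if best_pos is None:
            return flips
        i, j = best_pos
        spins[i][j] = -spins[i][j]
        flips += 1
```

A typical use would draw one set of couplings, start from a random spin configuration, and record the value returned by `hill_climb`. Repeating this over many random instances gives the kind of step-count sample whose tail the abstract describes, although this sketch does not include hBOA itself.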
