Jindou Dai

Residual Hyperbolic Graph Convolution Networks

Dec 05, 2024Abstract:Hyperbolic graph convolutional networks (HGCNs) have demonstrated representational capabilities of modeling hierarchical-structured graphs. However, as in general GCNs, over-smoothing may occur as the number of model layers increases, limiting the representation capabilities of most current HGCN models. In this paper, we propose residual hyperbolic graph convolutional networks (R-HGCNs) to address the over-smoothing problem. We introduce a hyperbolic residual connection function to overcome the over-smoothing problem, and also theoretically prove the effectiveness of the hyperbolic residual function. Moreover, we use product manifolds and HyperDrop to facilitate the R-HGCNs. The distinctive features of the R-HGCNs are as follows: (1) The hyperbolic residual connection preserves the initial node information in each layer and adds a hyperbolic identity mapping to prevent node features from being indistinguishable. (2) Product manifolds in R-HGCNs have been set up with different origin points in different components to facilitate the extraction of feature information from a wider range of perspectives, which enhances the representing capability of R-HGCNs. (3) HyperDrop adds multiplicative Gaussian noise into hyperbolic representations, such that perturbations can be added to alleviate the over-fitting problem without deconstructing the hyperbolic geometry. Experiment results demonstrate the effectiveness of R-HGCNs under various graph convolution layers and different structures of product manifolds.

A Hyperbolic-to-Hyperbolic Graph Convolutional Network

Apr 14, 2021

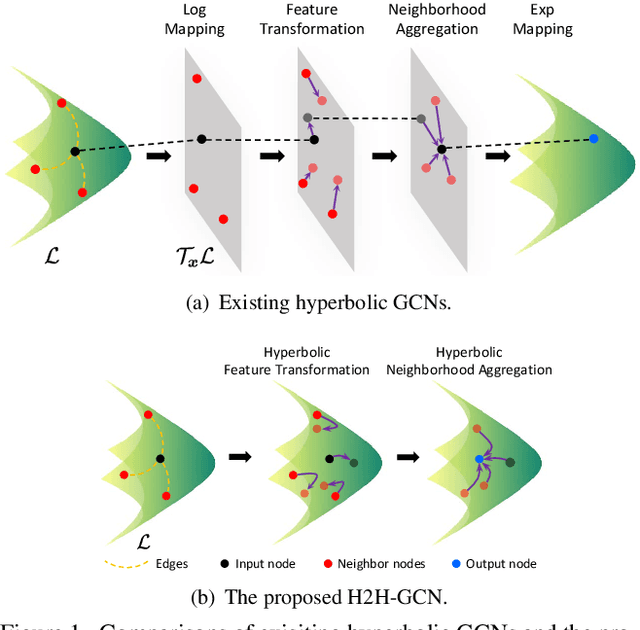

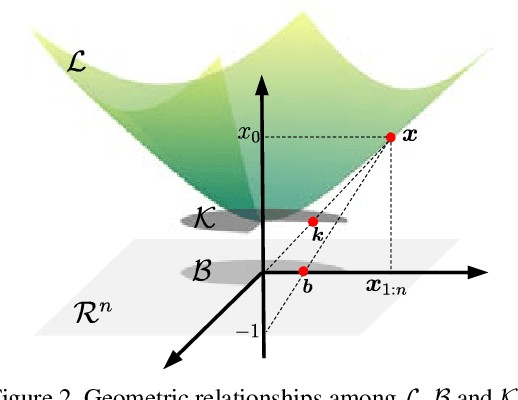

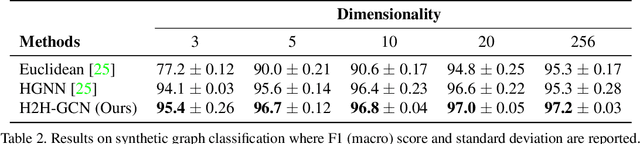

Abstract:Hyperbolic graph convolutional networks (GCNs) demonstrate powerful representation ability to model graphs with hierarchical structure. Existing hyperbolic GCNs resort to tangent spaces to realize graph convolution on hyperbolic manifolds, which is inferior because tangent space is only a local approximation of a manifold. In this paper, we propose a hyperbolic-to-hyperbolic graph convolutional network (H2H-GCN) that directly works on hyperbolic manifolds. Specifically, we developed a manifold-preserving graph convolution that consists of a hyperbolic feature transformation and a hyperbolic neighborhood aggregation. The hyperbolic feature transformation works as linear transformation on hyperbolic manifolds. It ensures the transformed node representations still lie on the hyperbolic manifold by imposing the orthogonal constraint on the transformation sub-matrix. The hyperbolic neighborhood aggregation updates each node representation via the Einstein midpoint. The H2H-GCN avoids the distortion caused by tangent space approximations and keeps the global hyperbolic structure. Extensive experiments show that the H2H-GCN achieves substantial improvements on the link prediction, node classification, and graph classification tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge