Jincheng Xiong

Neural Shell Texture Splatting: More Details and Fewer Primitives

Jul 27, 2025

Abstract:Gaussian splatting techniques have shown promising results in novel view synthesis, achieving high fidelity and efficiency. However, their high reconstruction quality comes at the cost of requiring a large number of primitives. We identify this issue as stemming from the entanglement of geometry and appearance in Gaussian Splatting. To address this, we introduce a neural shell texture, a global representation that encodes texture information around the surface. We use Gaussian primitives as both a geometric representation and texture field samplers, efficiently splatting texture features into image space. Our evaluation demonstrates that this disentanglement enables high parameter efficiency, fine texture detail reconstruction, and easy textured mesh extraction, all while using significantly fewer primitives.

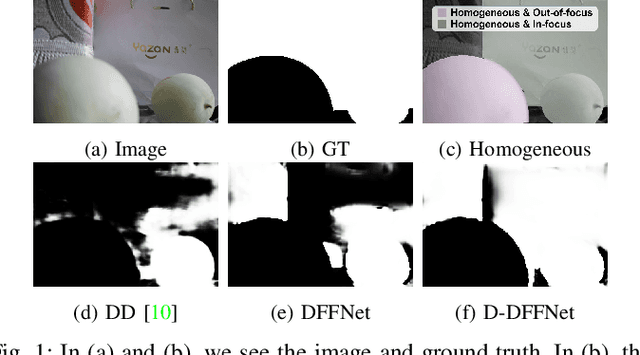

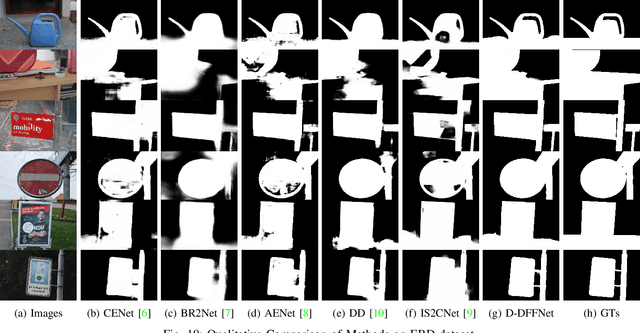

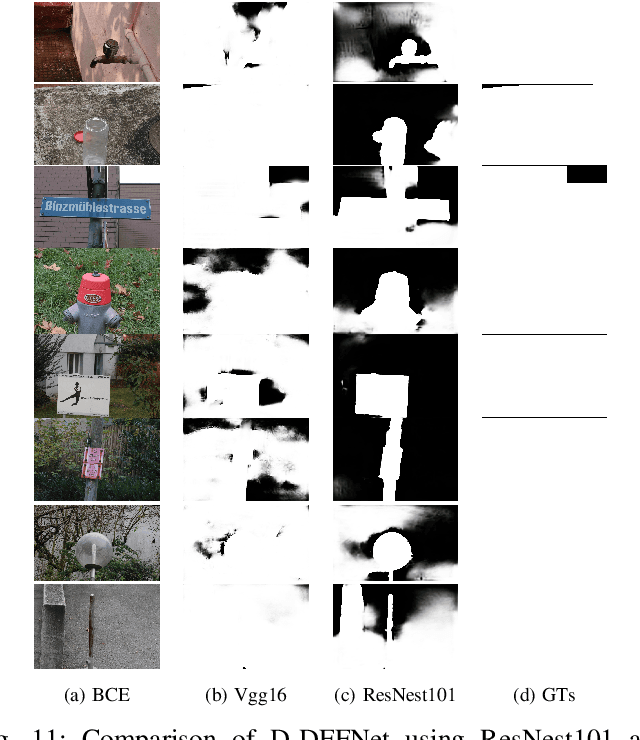

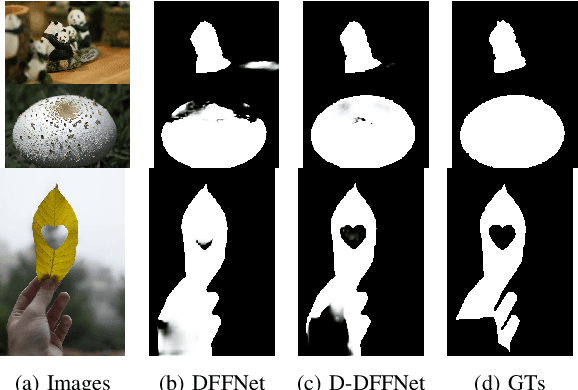

Depth and DOF Cues Make A Better Defocus Blur Detector

Jun 20, 2023

Abstract:Defocus blur detection (DBD) separates in-focus and out-of-focus regions in an image. Previous approaches often mistake homogeneous in-focus areas for defocus blur regions, likely because they do not consider the internal factors that cause defocus blur. Inspired by the law of depth, depth of field (DOF), and defocus, we propose an approach called D-DFFNet, which incorporates depth and DOF cues in an implicit manner. This allows the model to understand the defocus phenomenon in a more natural way. Our method uses a depth feature distillation strategy to obtain depth knowledge from a pre-trained monocular depth estimation model and a DOF-edge loss to understand the relationship between DOF and depth. Our approach outperforms state-of-the-art methods on public benchmarks and a newly collected large benchmark dataset, EBD. Source code and the EBD dataset are available at: https://github.com/yuxinjin-whu/D-DFFNet.

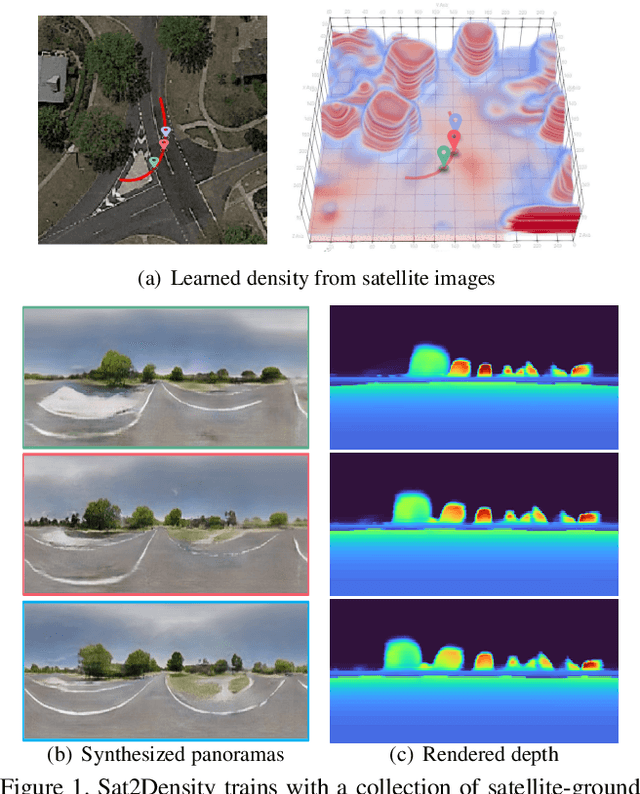

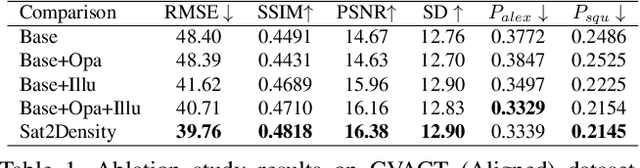

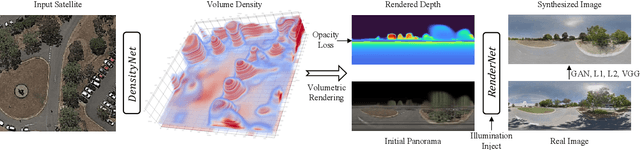

Sat2Density: Faithful Density Learning from Satellite-Ground Image Pairs

Mar 26, 2023

Abstract:This paper aims to develop an accurate 3D geometry representation of satellite images using satellite-ground image pairs. Our focus is on the challenging problem of generating ground-view panoramas from satellite images. We draw inspiration from the density field representation used in volumetric neural rendering and propose a new approach, called Sat2Density. Our method utilizes the properties of ground-view panoramas for the sky and non-sky regions to learn faithful density fields of 3D scenes from a geometric perspective. Unlike other methods that require extra 3D information during training, Sat2Density can automatically learn accurate and faithful 3D geometry via density representation from 2D-only supervision. This advancement significantly improves the ground-view panorama synthesis task. Additionally, our study provides a new geometric perspective for understanding the relationship between satellite and ground-view images in 3D space.
