Jimin Dai

ERDDCI: Exact Reversible Diffusion via Dual-Chain Inversion for High-Quality Image Editing

Oct 18, 2024

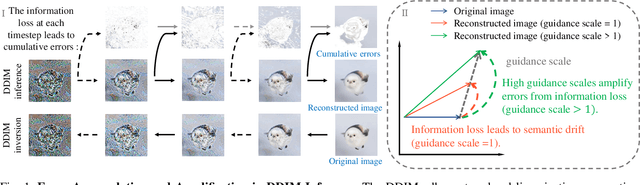

Abstract: Diffusion models (DMs) have been successfully applied to real image editing. These models typically invert an image into a latent noise vector from which the original image can be reconstructed (a step known as inversion), and then edit the image during the inference process. However, recent popular DMs often rely on the assumption of local linearization, under which the noise injected during inversion is expected to approximate the noise removed during inference. Although this assumption lets DMs generate images efficiently, it also causes errors to accumulate over the diffusion process, which degrades the quality of real image reconstruction and editing. To address this issue, we propose a novel method, referred to as ERDDCI (Exact Reversible Diffusion via Dual-Chain Inversion). ERDDCI uses the new Dual-Chain Inversion (DCI) for joint inference to derive an exact reversible diffusion process. By using DCI, our method avoids the cumbersome optimization process required by existing inversion approaches and achieves high-quality image editing. Additionally, to accommodate image operations under high guidance scales, we introduce a dynamic control strategy that enables more refined image reconstruction and editing. Our experiments demonstrate that ERDDCI significantly outperforms state-of-the-art methods in a 50-step diffusion process. It achieves rapid and precise image reconstruction with an SSIM of 0.999 and an LPIPS of 0.001, and also delivers competitive results in image editing.
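The local linearization issue described in the abstract can be illustrated with a small numerical sketch. It is not the ERDDCI or DCI algorithm, which the abstract does not specify. It assumes a DDIM-style deterministic update, a made-up noise schedule `alpha_bar`, and a hypothetical toy noise predictor (`np.tanh`) standing in for a trained network. During inversion the noise for the step from t to t+1 is predicted at x_t, whereas an exact inverse would need the prediction at x_{t+1}; the mismatch is what accumulates.

```python
import numpy as np

def ddim_step(x, eps, a_from, a_to):
    """Deterministic DDIM-style update between two cumulative noise levels."""
    x0_pred = (x - np.sqrt(1.0 - a_from) * eps) / np.sqrt(a_from)
    return np.sqrt(a_to) * x0_pred + np.sqrt(1.0 - a_to) * eps

def round_trip_error(x0, eps_fn, alpha_bar):
    """Invert x0 to a latent, reconstruct it, and return the max abs error."""
    x = x0.copy()
    T = len(alpha_bar) - 1
    # Inversion: noise for step t -> t+1 is predicted at x_t (the
    # linearization assumption), not at the unknown x_{t+1}.
    for t in range(T):
        x = ddim_step(x, eps_fn(x, t), alpha_bar[t], alpha_bar[t + 1])
    # Inference: walk back from t = T to t = 0.
    for t in range(T, 0, -1):
        x = ddim_step(x, eps_fn(x, t), alpha_bar[t], alpha_bar[t - 1])
    return float(np.max(np.abs(x - x0)))

# Assumed toy setup: 50 steps, linearly decaying cumulative alpha.
alpha_bar = np.linspace(0.9999, 0.01, 51)
x0 = np.array([-1.0, 0.5, 2.0])

# Hypothetical predictors: one depends on x, one is constant.
nonlinear_eps = lambda x, t: np.tanh(x)
constant_eps = lambda x, t: np.full_like(x, 0.3)

err_nonlinear = round_trip_error(x0, nonlinear_eps, alpha_bar)
err_constant = round_trip_error(x0, constant_eps, alpha_bar)
print("round-trip error, x-dependent noise:", err_nonlinear)
print("round-trip error, constant noise:   ", err_constant)
```

When the predicted noise does not depend on x, each inversion step is undone exactly and only floating-point error remains. With an x-dependent predictor, the reconstruction drifts away from the input, which is the kind of accumulated error an exactly reversible scheme is meant to remove.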
