Jiahui Xiong

FSDNet-An efficient fire detection network for complex scenarios based on YOLOv3 and DenseNet

Apr 15, 2023

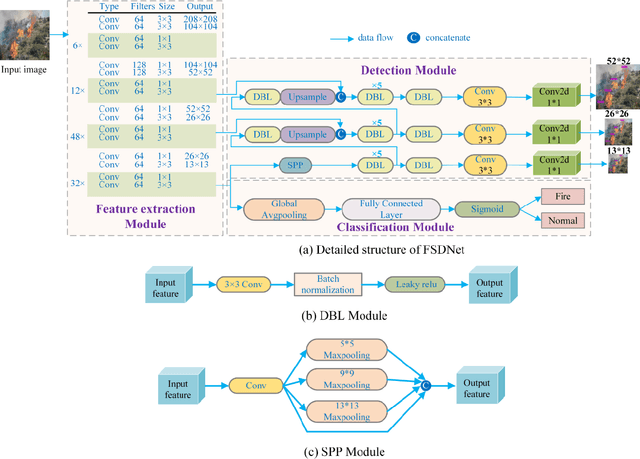

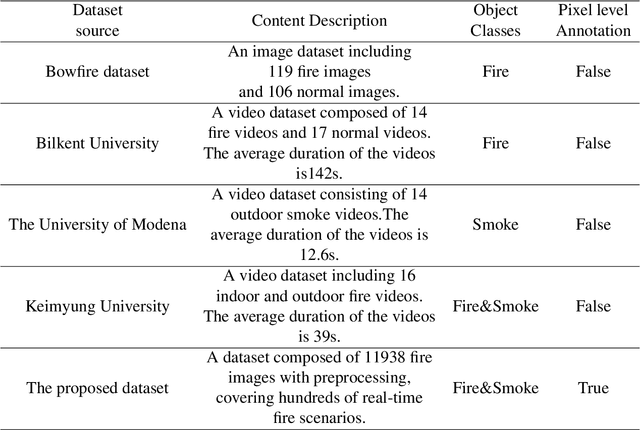

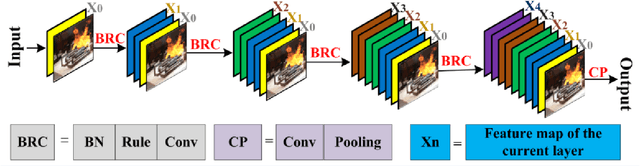

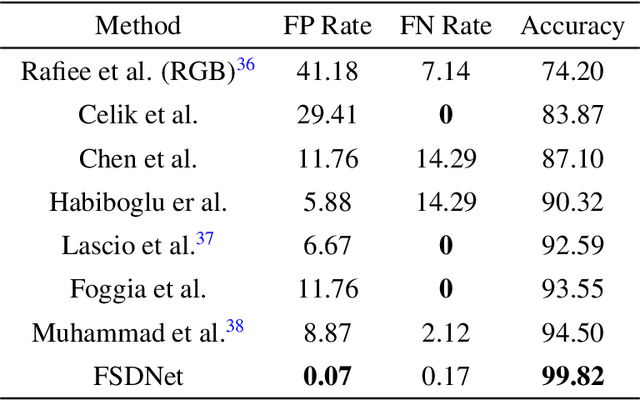

Abstract: Fire is one of the most common disasters in daily life. To detect fires quickly and accurately, this paper proposes a detection network called FSDNet (Fire Smoke Detection Network), which consists of a feature extraction module, a fire classification module, and a fire detection module. First, a dense connection structure is introduced into the basic feature extraction module to strengthen the feature extraction ability of the backbone network and alleviate the vanishing gradient problem. Second, a spatial pyramid pooling structure is introduced into the fire detection module, and the Mosaic data augmentation method and the CIoU loss function are used during training to improve flame feature extraction. Finally, to address the shortcomings of public fire datasets, a fire dataset called MS-FS (Multi-scene Fire And Smoke), containing 11,938 fire images, was created through data collection, screening, and object annotation. To demonstrate the effectiveness of the proposed method, its accuracy was evaluated on two benchmark fire datasets and on MS-FS. The experimental results show that FSDNet reaches accuracies of 99.82% and 91.15% on the two benchmark datasets, respectively, and an average precision of 86.80% on MS-FS, outperforming mainstream fire detection methods.
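The abstract names the CIoU loss without defining it. As a rough illustration only, the sketch below follows the commonly published CIoU formulation (IoU, plus a normalized center-distance penalty, plus an aspect-ratio consistency term). The function name `ciou_loss`, the `(x1, y1, x2, y2)` box layout, and the `eps` stabilizer are assumptions made for this example and are not taken from the FSDNet paper.

```python
import math


def ciou_loss(pred, target, eps=1e-9):
    """CIoU loss for two axis-aligned boxes given as (x1, y1, x2, y2).

    Hypothetical helper for illustration; assumes x1 < x2 and y1 < y2.
    """
    px1, py1, px2, py2 = pred
    tx1, ty1, tx2, ty2 = target

    # Intersection over union.
    inter_w = max(0.0, min(px2, tx2) - max(px1, tx1))
    inter_h = max(0.0, min(py2, ty2) - max(py1, ty1))
    inter = inter_w * inter_h
    pw, ph = px2 - px1, py2 - py1
    tw, th = tx2 - tx1, ty2 - ty1
    union = pw * ph + tw * th - inter
    iou = inter / union

    # Squared distance between box centers.
    rho2 = ((px1 + px2) / 2 - (tx1 + tx2) / 2) ** 2 \
         + ((py1 + py2) / 2 - (ty1 + ty2) / 2) ** 2

    # Squared diagonal of the smallest box enclosing both.
    cw = max(px2, tx2) - min(px1, tx1)
    ch = max(py2, ty2) - min(py1, ty1)
    c2 = cw ** 2 + ch ** 2

    # Aspect-ratio consistency term and its trade-off weight.
    v = (4 / math.pi ** 2) * (math.atan(tw / th) - math.atan(pw / ph)) ** 2
    alpha = v / (1 - iou + v + eps)  # eps avoids 0/0 for identical boxes

    return 1 - iou + rho2 / c2 + alpha * v
```

Unlike a plain IoU loss, this still produces a useful signal when the boxes do not overlap, because the center-distance term shrinks as the predicted box moves toward the target.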

YOLO-Drone: Airborne real-time detection of dense small objects from high-altitude perspective

Apr 14, 2023

Abstract: Unmanned Aerial Vehicles (UAVs), specifically drones equipped with remote sensing object detection technology, have rapidly gained a broad spectrum of applications and emerged as one of the primary research focuses in computer vision. Although UAV remote sensing systems can detect various objects, small-scale objects are difficult to detect reliably because of object size, image degradation, and real-time constraints. To tackle these issues, a real-time object detection algorithm (YOLO-Drone) is proposed and applied to two new UAV platforms as well as a specific light source (silicon-based golden LED). YOLO-Drone presents several novelties: 1) a new backbone, Darknet59; 2) a new complex feature aggregation module, MSPP-FPN, which incorporates one spatial pyramid pooling module and three atrous spatial pyramid pooling modules; and 3) the use of Generalized Intersection over Union (GIoU) as the loss function. To evaluate performance, two benchmark datasets, UAVDT and VisDrone, are used along with one homemade dataset acquired at night under silicon-based golden LEDs. The experimental results show that, on both UAVDT and VisDrone, the proposed YOLO-Drone outperforms state-of-the-art (SOTA) object detection methods, improving mAP by 10.13% and 8.59%, respectively. On UAVDT, YOLO-Drone achieves both a high real-time inference speed of 53 FPS and a maximum mAP of 34.04%. Notably, YOLO-Drone achieves high performance under the silicon-based golden LEDs, with a mAP of up to 87.71%, surpassing the performance of the YOLO series under ordinary light sources. In conclusion, the proposed YOLO-Drone is a highly effective solution for object detection in UAV applications, particularly for night detection tasks, where silicon-based golden light LED technology exhibits significant superiority.
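For readers unfamiliar with the GIoU loss mentioned in the abstract, the following minimal sketch shows the standard definition: IoU minus the fraction of the smallest enclosing box that is not covered by the union. The function names `giou` and `giou_loss` and the `(x1, y1, x2, y2)` box layout are assumptions for this example, not code from YOLO-Drone.

```python
def giou(box_a, box_b):
    """Generalized IoU of two axis-aligned boxes given as (x1, y1, x2, y2).

    Hypothetical helper for illustration; assumes x1 < x2 and y1 < y2.
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Overlap area (zero when the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter
    iou = inter / union

    # Area of the smallest axis-aligned box enclosing both inputs.
    hull = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1))

    # Penalize the part of the enclosing box not covered by the union.
    return iou - (hull - union) / hull


def giou_loss(box_a, box_b):
    """Loss in [0, 2): 0 for identical boxes, larger as boxes drift apart."""
    return 1.0 - giou(box_a, box_b)
```

Because the enclosing-box term keeps changing as disjoint boxes move apart, the loss stays informative even when the IoU is zero, which is relevant for small objects whose predicted boxes often miss the target entirely.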
