
Jessica S. Titensky

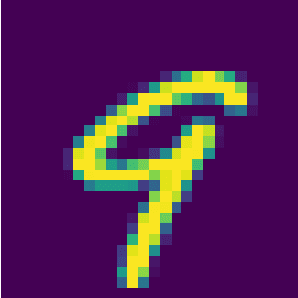

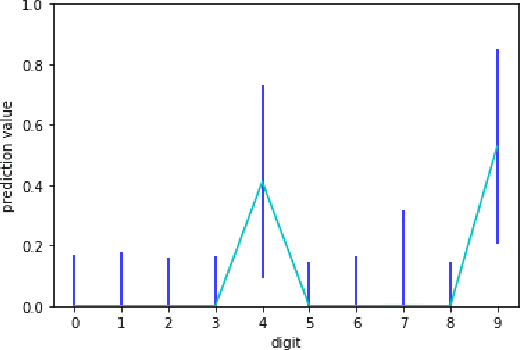

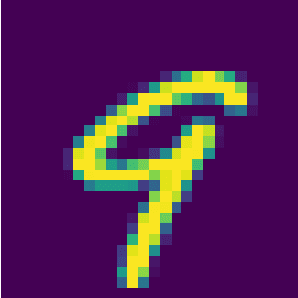

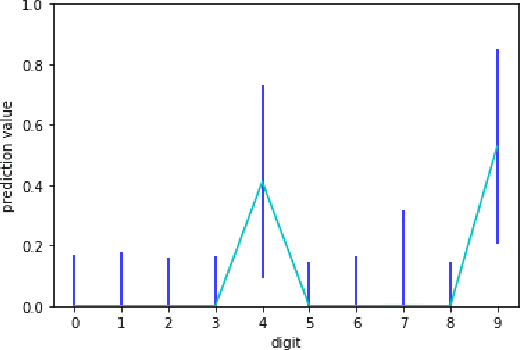

Uncertainty Propagation in Deep Neural Networks Using Extended Kalman Filtering

Sep 17, 2018

Abstract: Extended Kalman Filtering (EKF) can be used to propagate and quantify input uncertainty through a Deep Neural Network (DNN) assuming mild hypotheses on the input distribution. This methodology yields results comparable to existing methods of uncertainty propagation for DNNs while lowering the computational overhead considerably. Additionally, EKF allows model error to be naturally incorporated into the output uncertainty.
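As a rough illustration of the idea in the abstract, the sketch below pushes a Gaussian input (mean and covariance) through a small fully connected network using the EKF prediction step: the mean goes through each layer unchanged, and the covariance is mapped through the layer's Jacobian evaluated at the mean, with an optional additive term for model error. This is a minimal sketch under stated assumptions, not the paper's implementation. The function name `ekf_propagate`, the `tanh` activations, the per-layer `model_noise` matrices, and the random weights are all hypothetical choices made for the example.

```python
import numpy as np

def ekf_propagate(mean, cov, layers, model_noise=None):
    """Propagate a Gaussian (mean, cov) through tanh layers by first-order linearization.

    layers: list of (W, b) pairs; each layer computes tanh(W @ x + b).
    model_noise: optional list of per-layer covariance matrices (assumed
    additive model error, playing the role of the EKF process noise Q).
    """
    for i, (W, b) in enumerate(layers):
        a = np.tanh(W @ mean + b)
        # Jacobian of tanh(W x + b) with respect to x, evaluated at the mean:
        # diag(1 - tanh(z)^2) @ W
        J = (1.0 - a**2)[:, None] * W
        # EKF prediction step for the covariance: J P J^T (+ Q)
        cov = J @ cov @ J.T
        if model_noise is not None:
            cov = cov + model_noise[i]
        mean = a
    return mean, cov

# Hypothetical 3 -> 4 -> 2 network with random weights, for illustration only.
rng = np.random.default_rng(0)
layers = [
    (rng.normal(size=(4, 3)), rng.normal(size=4)),
    (rng.normal(size=(2, 4)), rng.normal(size=2)),
]
mean_in = np.array([0.1, -0.2, 0.3])
cov_in = 0.01 * np.eye(3)  # assumed input uncertainty

mean_out, cov_out = ekf_propagate(mean_in, cov_in, layers)
print("output mean:", mean_out)
print("output covariance:\n", cov_out)
```

A single forward pass with one Jacobian product per layer replaces the many forward passes a sampling-based estimate would need, which is the kind of overhead reduction the abstract refers to. The linearization is only accurate when the input covariance is small relative to the curvature of the activations.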

* 4 pages, 8 figures. Accepted at MIT IEEE Undergraduate Research Technology Conference 2018. Publication pending.
