Jerome Gilles

Noisy image decomposition: a new structure, texture and noise model based on local adaptivity

Nov 13, 2024

Abstract: In recent years, image decomposition algorithms have been proposed to split an image into two parts: the structures and the textures. These algorithms are not suited to noisy images because the textures are corrupted by noise. In this paper, we propose a new model which decomposes an image into three parts (structures, textures and noise) based on a local regularization scheme. We compare our results with the recent work of Aujol and Chambolle. We conclude with another model which combines the advantages of the two previous ones.

* arXiv admin note: text overlap with arXiv:2411.05265

Choix d'un espace de représentation image adapté à la détection de réseaux routiers

Nov 13, 2024

Abstract: In recent years, algorithms that decompose an image into its structure and texture components have emerged. In this paper, we present an application of this type of decomposition to the problem of road network detection in aerial or satellite imagery. The algorithmic procedure involves the image decomposition (using a unique property), an alignment detection step based on Gestalt theory, and a refinement step using statistical active contours.

* in French language

Restoration algorithms and system performance evaluation for active imagers

Nov 13, 2024

Abstract: This paper deals with two topics related to active imaging systems. First, we explore image processing algorithms to correct artefacts such as speckle, scintillation and image dancing caused by atmospheric turbulence. Next, we examine how to evaluate the performance of this kind of system. To this end, we propose a modified version of the German TRM3 metric which yields MTF-like measures. We use the database acquired during the NATO-TG40 field trials for our tests.

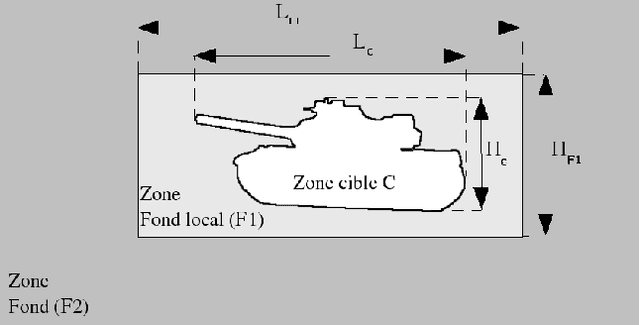

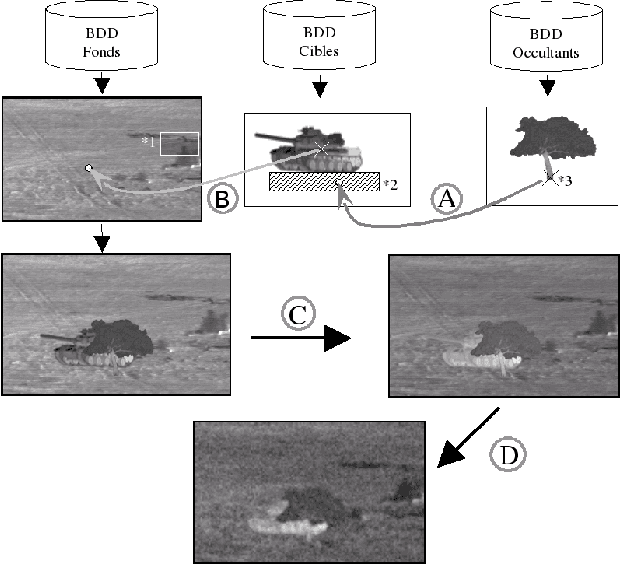

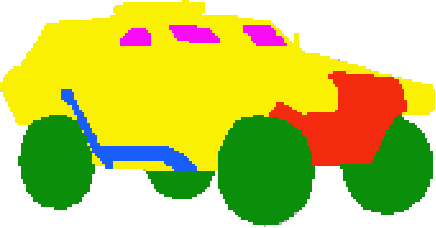

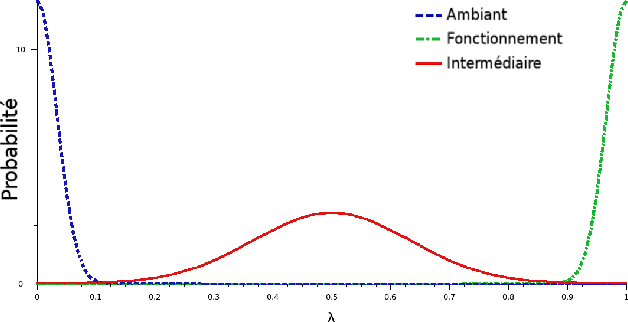

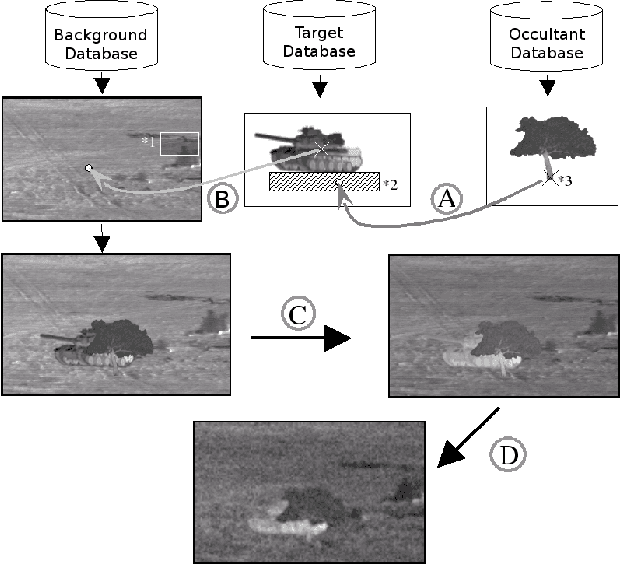

Génération de bases de données images IR sous contraintes avec variabilité thermique intrinsèque des cibles

Nov 12, 2024

Abstract: In this communication, we propose a method to simulate images of targets in infrared imagery by superimposing vehicle signatures onto a background, possibly with occluding objects. We develop a principle which allows us to generate different thermal configurations of target signatures. This method makes it easy to generate huge datasets for evaluating the performance of ATR algorithms.

Atmospheric turbulence restoration by diffeomorphic image registration and blind deconvolution

Nov 12, 2024

Abstract: This paper presents a novel approach to improve images degraded by atmospheric turbulence. Two new algorithms are presented, based on two combinations of a blind deconvolution block, an elastic registration block and a temporal filter block. The algorithms are tested on real images acquired in the desert in New Mexico by the NATO RTG40 group.

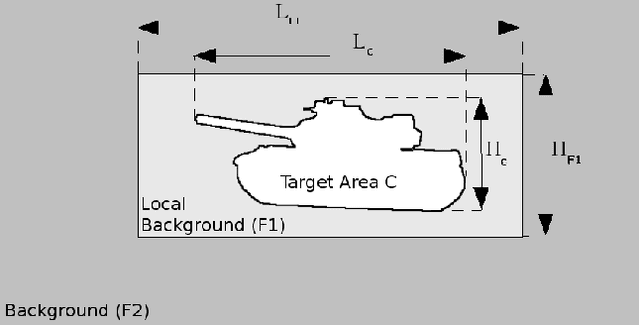

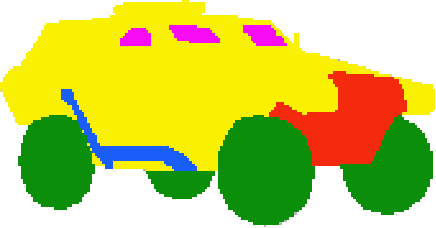

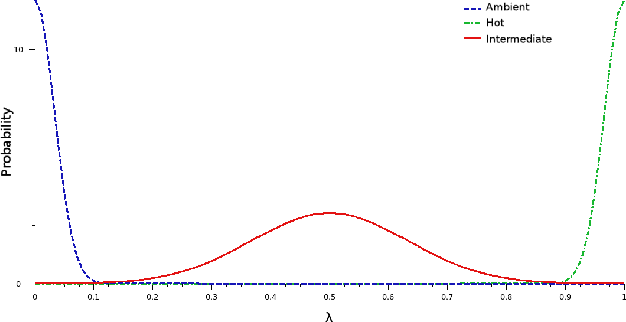

IR image databases generation under target intrinsic thermal variability constraints

Nov 12, 2024

Abstract: This paper deals with the problem of infrared image database generation for ATR assessment purposes. Huge databases are required for quantitative and objective performance evaluations. We propose a method which superimposes targets and occluding objects on a background, under image quality metric constraints, to generate realistic images. We also propose a method to generate target signatures with intrinsic thermal variability, based on 3D models mapped with real infrared textures.

* arXiv admin note: substantial text overlap with arXiv:2411.06695

Séparation en composantes structures, textures et bruit d'une image, apport de l'utilisation des contourlettes

Nov 11, 2024

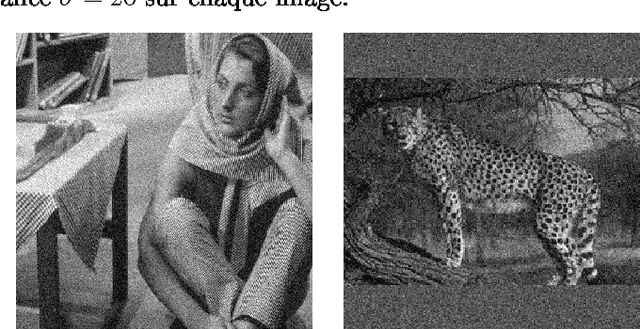

Abstract: In this paper, we propose to improve image decomposition algorithms in the case of noisy images. In \cite{gilles1,aujoluvw}, the authors propose to separate structures, textures and noise from an image. Unfortunately, the use of separable wavelets produces some artefacts. We propose to replace the wavelet transform by the contourlet transform, which better approximates the geometry in images. To this end, we define contourlet spaces and their associated norms. We then obtain an iterative algorithm, which we test on two noisy textured images.

Image Decomposition: Theory, Numerical Schemes, and Performance Evaluation

Nov 08, 2024

Abstract: This paper describes the many image decomposition models that separate structures and textures, or structures, textures, and noise. These models combine a total variation approach with different adapted functional spaces, such as Besov or contourlet spaces, or a special oscillating function space based on the work of Yves Meyer. We propose a method to evaluate the performance of such algorithms in order to better understand the behavior of these models.
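To give a concrete feel for the total variation approach mentioned in this abstract, here is a minimal sketch of a two-part decomposition f = u + v. It is an assumption-laden stand-in, not one of the models from the paper: it uses the classical Rudin-Osher-Fatemi (ROF) functional, minimized with Chambolle's projection iteration, rather than the Meyer-type oscillating norms, Besov spaces or contourlet spaces discussed by the author. The function names (`grad`, `div`, `rof_decompose`) and the parameter values (`lam`, `n_iter`, `tau`) are hypothetical choices made for illustration.

```python
import numpy as np

def grad(u):
    """Forward differences with Neumann (zero-flux) boundary conditions."""
    gx = np.zeros_like(u)
    gy = np.zeros_like(u)
    gx[:-1, :] = u[1:, :] - u[:-1, :]
    gy[:, :-1] = u[:, 1:] - u[:, :-1]
    return gx, gy

def div(px, py):
    """Discrete divergence, defined as the negative adjoint of grad."""
    d = np.zeros_like(px)
    d[0, :] += px[0, :]
    d[1:-1, :] += px[1:-1, :] - px[:-2, :]
    d[-1, :] -= px[-2, :]
    d[:, 0] += py[:, 0]
    d[:, 1:-1] += py[:, 1:-1] - py[:, :-2]
    d[:, -1] -= py[:, -2]
    return d

def rof_decompose(f, lam=0.2, n_iter=100, tau=0.125):
    """Split f into a piecewise-smooth part u and an oscillating part v.

    Approximately minimizes TV(u) + ||f - u||^2 / (2 * lam) using
    Chambolle's fixed-point iteration on the dual variable (px, py).
    """
    f = np.asarray(f, dtype=float)
    px = np.zeros_like(f)
    py = np.zeros_like(f)
    for _ in range(n_iter):
        gx, gy = grad(div(px, py) - f / lam)
        norm = np.sqrt(gx ** 2 + gy ** 2)
        px = (px + tau * gx) / (1.0 + tau * norm)
        py = (py + tau * gy) / (1.0 + tau * norm)
    v = lam * div(px, py)  # oscillating part (textures, and noise if present)
    u = f - v              # structures
    return u, v

# Usage: a vertical edge (structure) plus a fine horizontal oscillation (texture).
x = np.arange(64)
f = np.zeros((64, 64))
f[:, 32:] = 1.0
f += 0.1 * np.sin(2 * np.pi * x / 4)[None, :]
u, v = rof_decompose(f)
```

In this simple model, noise and texture both end up in `v`, which is the limitation that the three-part models in the listings above are designed to address.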

Properties of BV-G structures + textures decomposition models. Application to road detection in satellite images

Nov 07, 2024

Abstract: In this paper, we present some theoretical results about a structures-textures image decomposition model which was proposed by the second author. We prove a theorem which describes the behavior of this model in different cases. Finally, as a consequence of the theorem, we derive an algorithm for the detection of long and thin objects, which we apply to road network detection in aerial or satellite images.

Efficient single image non-uniformity correction algorithm

Nov 07, 2024

Abstract: This paper introduces a new way to correct the non-uniformity (NU) in uncooled infrared-type images. The main defect of these uncooled images is the lack of a column (resp. line) time-dependent cross-calibration, resulting in a strong column (resp. line) and time-dependent noise. This problem can be considered as a 1D flicker of the columns inside each frame. Thus, classic movie deflickering algorithms can be adapted to equalize the columns (resp. the lines). The proposed method therefore applies a movie deflickering algorithm to the series formed by the columns of an infrared image. The resulting single-image method works on static images, and therefore requires no registration, no camera motion compensation, and no closed-aperture sensor equalization. Thus, the method has only one camera-dependent parameter, and is landscape independent. This simple method is compared to a state-of-the-art total variation single-image correction on raw real and simulated images. The method is real time, requiring only two operations per pixel. It involves no test-pattern calibration and produces no "ghost artifacts".

* arXiv admin note: substantial text overlap with arXiv:2411.03615
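The idea in the non-uniformity correction abstract above, treating the columns of one frame as a flickering sequence and equalizing them, can be sketched as follows. This is only an illustration under stated assumptions: the abstract does not say which deflickering algorithm is adapted, so the sketch assumes a midway-style histogram equalization in which each column's sorted values are replaced by a Gaussian-weighted average of the sorted values of neighboring columns. The function name `equalize_columns` and the parameters `sigma` and `radius` are hypothetical, and the sort-based implementation makes no attempt to match the two-operations-per-pixel cost quoted in the abstract.

```python
import numpy as np

def equalize_columns(image, sigma=2.0, radius=6):
    """Equalize column histograms against their horizontal neighbors.

    Assumed technique: midway-style histogram equalization, where the
    k-th smallest value of each column is replaced by a Gaussian-weighted
    mean of the k-th smallest values of the nearby columns.
    """
    img = np.asarray(image, dtype=float)
    n_cols = img.shape[1]

    # Sort each column; `order` maps rank -> original row index.
    order = np.argsort(img, axis=0, kind="stable")
    sorted_cols = np.take_along_axis(img, order, axis=0)

    # Normalized Gaussian weights over the horizontal neighborhood.
    offsets = np.arange(-radius, radius + 1)
    weights = np.exp(-offsets ** 2 / (2.0 * sigma ** 2))
    weights /= weights.sum()

    # Replicate border columns so every column has a full neighborhood.
    padded = np.pad(sorted_cols, ((0, 0), (radius, radius)), mode="edge")
    target = np.zeros_like(sorted_cols)
    for k, w in enumerate(weights):
        target += w * padded[:, k:k + n_cols]

    # Write the equalized values back at the original pixel positions.
    out = np.empty_like(img)
    np.put_along_axis(out, order, target, axis=0)
    return out

# Usage: a vertical gradient corrupted by a random offset per column.
rng = np.random.default_rng(0)
scene = np.tile(np.linspace(0.0, 1.0, 48)[:, None], (1, 64))
noisy = scene + rng.normal(0.0, 0.2, size=64)[None, :]
corrected = equalize_columns(noisy)
```

Because only one frame is used, no registration or motion compensation is involved, and `sigma` plays the role of a single tuning parameter.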
