Jens U. Kreber

Learning a Terrain- and Robot-Aware Dynamics Model for Autonomous Mobile Robot Navigation

Sep 17, 2024

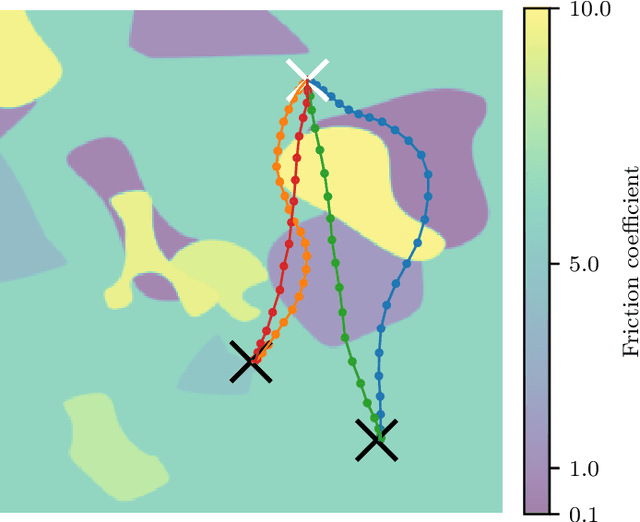

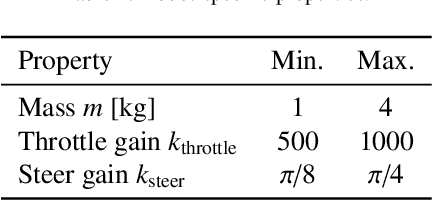

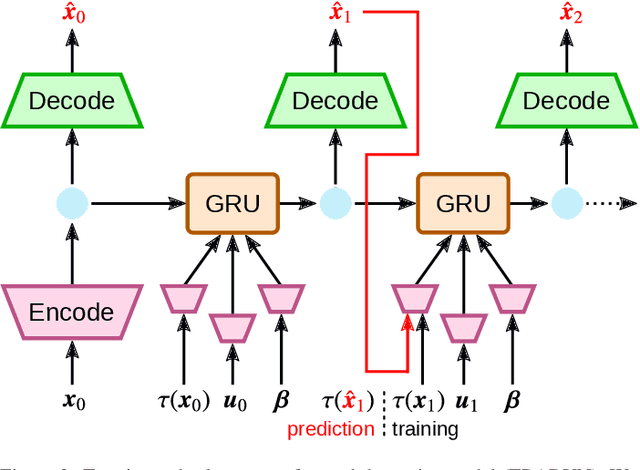

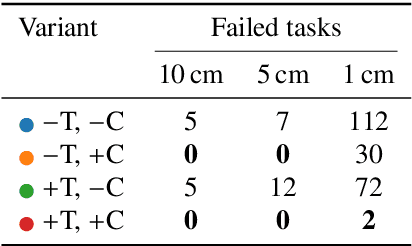

Abstract:Mobile robots should be capable of planning cost-efficient paths for autonomous navigation. Typically, the terrain and robot properties are subject to variations. For instance, properties of the terrain such as friction may vary across different locations. Also, properties of the robot may change such as payloads or wear and tear, e.g., causing changing actuator gains or joint friction. Autonomous navigation approaches should thus be able to adapt to such variations. In this article, we propose a novel approach for learning a probabilistic, terrain- and robot-aware forward dynamics model (TRADYN) which can adapt to such variations and demonstrate its use for navigation. Our learning approach extends recent advances in meta-learning forward dynamics models based on Neural Processes for mobile robot navigation. We evaluate our method in simulation for 2D navigation of a robot with uni-cycle dynamics with varying properties on terrain with spatially varying friction coefficients. In our experiments, we demonstrate that TRADYN has lower prediction error over long time horizons than model ablations which do not adapt to robot or terrain variations. We also evaluate our model for navigation planning in a model-predictive control framework and under various sources of noise. We demonstrate that our approach yields improved performance in planning control-efficient paths by taking robot and terrain properties into account.

Generating Symbolic Reasoning Problems with Transformer GANs

Oct 19, 2021

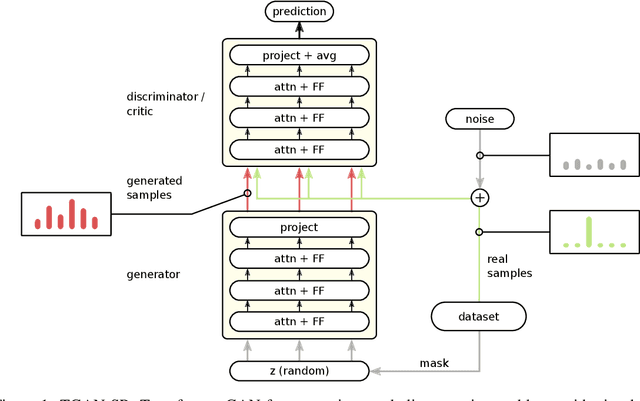

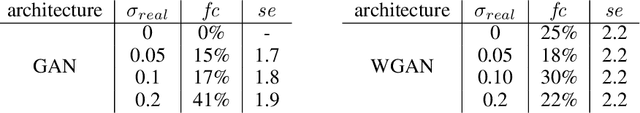

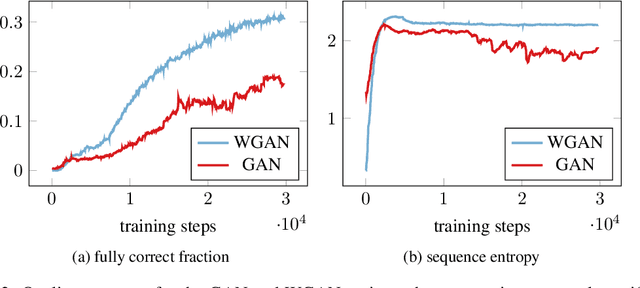

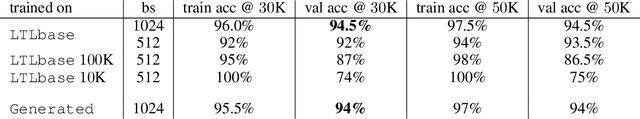

Abstract:Constructing training data for symbolic reasoning domains is challenging: Existing instances are typically hand-crafted and too few to be trained on directly and synthetically generated instances are often hard to evaluate in terms of their meaningfulness. We study the capabilities of GANs and Wasserstein GANs equipped with Transformer encoders to generate sensible and challenging training data for symbolic reasoning domains. We conduct experiments on two problem domains where Transformers have been successfully applied recently: symbolic mathematics and temporal specifications in verification. Even without autoregression, our GAN models produce syntactically correct instances. We show that the generated data can be used as a substitute for real training data when training a classifier, and, especially, that training data can be generated from a real dataset that is too small to be trained on directly. Using a GAN setting also allows us to alter the target distribution: We show that by adding a classifier uncertainty part to the generator objective, we obtain a dataset that is even harder to solve for a classifier than our original dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge